5-second summary:

- Most teams are using AI more, but leaders are struggling to understand and measure ROI.

- When AI speeds up individuals but not teams, companies pay the cost. Atlassian estimates this “fragmentation tax” costs the Fortune 500 $161B a year.

- The fix: treat AI as a team player, not a personal productivity hack, and bake it into everyday workflows so teams ship quality work, not just more of it.

There’s a paradox happening with AI: usage and productivity are up, but the bottom-line results are much harder to see.

It’s a familiar pattern you might be seeing in your organization. Leadership invests in AI, and employees say they’re getting more done: more code, more campaigns, more analysis.

Yet team and business results tell a different story. When leaders look at outcomes such as customer impact, work quality, and cross-team alignment, it’s hard to point to clear, company-wide gains. In Atlassian’s State of Teams 2026 report, which surveyed 12,000+ knowledge workers and 170+ Fortune 1000 executives around the world, 89% of executives say AI has increased the speed of work, but only 6% feel confident they can point to specific, organization-wide ROI from AI.

This is the AI efficiency paradox: as AI helps individuals work faster, the surge of output backs up at reviews, approvals, and other human‑judgment gates, slowing down the flow and wiping out the speed gains. As one Vice President of Talent and Business Strategy at a Fortune 500 retail company told our researchers, “You think you’re moving faster, but you discover a quality gap and what we thought might be 20% faster gets pulled back.”

To get more return on AI investments, leaders have to understand why this is happening and how to fix it.

Faster output is creating bigger bottlenecks

To understand the paradox, it helps to look at where time really goes in modern knowledge work.

In most teams, only about 20% of the work involves individual production: writing code, drafting documents, creating designs, running analyses. The remaining 80% or so is collaboration and workflows: reviews and approvals, alignment meetings and check-ins, decision-making and escalations, validation and testing, and waiting for partners to respond.

AI tools are remarkably good at speeding up that 20%. A design spec that used to take a day now takes an hour. A feature that used to take two days of coding and testing comes together before lunch. Marketing teams can spin up dozens of campaign variants in a morning, and finance teams can generate more forecasts and scenarios in a week than they used to in a quarter.

But that extra output still flows through the same human gates as before: code and legal reviews, security checks, quarterly planning, and so on. And because those gates haven’t changed, two things happen:

- Work in progress piles up at the bottlenecks. Queues get longer for reviewers, approvers, and decision-makers.

- Human throughput at those gates drops. Cognitive overload, context switching, and fatigue slow reviews down or push people to rubber-stamp work they haven’t fully checked. That lets slop through and creates rework.

The result is a bit of smoke and mirrors: activity metrics (like messages, docs, and tasks) spike, while outcome metrics (like shipped value, incidents, and customer satisfaction) barely move or even get worse.

In other words, rather than speeding up a slow system, AI is often overloading the parts of the system that were already strained.

Why large enterprises bear the brunt of this paradox — and what it costs them

The AI paradox shows up in organizations of all sizes, but it hits large enterprises hardest.

Before AI, most big companies already had low execution costs (many people, defined processes, specialized roles, and mature tooling) and high coordination costs (complex stakeholder maps, risk and compliance controls, cross-functional dependencies, distributed teams, and formal governance).

In that environment, AI doesn’t just speed up work; it amplifies complexity and decision load. Teams spin up more artifacts with fewer shared standards, and reviewers face longer queues and harder calls. Small misalignments multiply across functions and tools, introducing outdated or conflicting information into institutional knowledge.

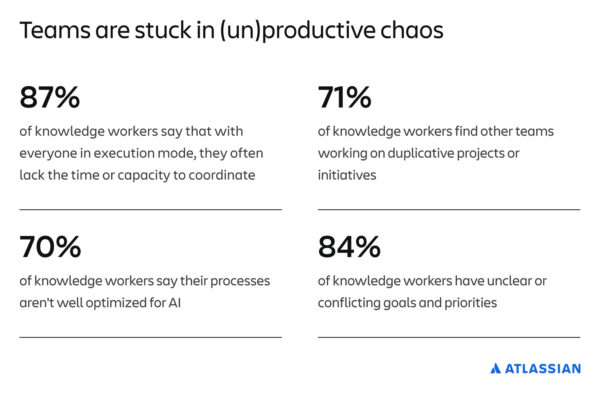

The net effect is slower, riskier outcomes at scale, or what Atlassian’s State of Teams 2026 report identifies as an “AI fragmentation tax.”

“Even teams that say AI is making them faster are still losing about six hours a person per week to coordination chaos — unclear goals, duplicated work, shifting priorities,” says Sara Gottlieb-Cohen, a researcher in Atlassian’s Teamwork Lab. “Across the Fortune 500, that adds up to roughly $161B a year. The good news is that the teams who’ve figured out how to coordinate around AI have cut that tax nearly in half.”

How we define and measure the AI fragmentation tax

To calculate a team’s fragmentation tax, we focused on survey respondents who said AI is helping their teams move faster at work. We then looked at how often those respondents report classic coordination problems, like unclear goals, shifting priorities, and duplicative work, and whether they say AI is creating more work or problems for their team. We combined those answers into a single score on a 0–100 scale, where higher scores mean a higher fragmentation tax.
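To make the scoring step more concrete, here is a minimal Python sketch of one way such a composite score could be built. It is not Atlassian’s actual method: the `Response` fields, the 1–5 frequency scale, and the equal weighting of items are all assumptions chosen for illustration.

```python
from dataclasses import dataclass
from statistics import mean

# Hypothetical 1-5 frequency scale (1 = never, 5 = very often); an assumption,
# not the survey's actual response format.
LIKERT_MIN, LIKERT_MAX = 1, 5


@dataclass
class Response:
    """One survey respondent. All field names are hypothetical."""
    ai_speeds_team_up: bool    # screening question: "AI helps my team move faster"
    unclear_goals: int         # how often goals are unclear (1-5)
    shifting_priorities: int   # how often priorities shift (1-5)
    duplicative_work: int      # how often work is duplicated (1-5)
    ai_creates_more_work: int  # how often AI creates more work or problems (1-5)


def fragmentation_score(r: Response) -> float:
    """Equal-weighted mean of the items, rescaled to 0-100 (higher = higher tax)."""
    items = [r.unclear_goals, r.shifting_priorities,
             r.duplicative_work, r.ai_creates_more_work]
    if any(not LIKERT_MIN <= x <= LIKERT_MAX for x in items):
        raise ValueError("item outside the assumed 1-5 scale")
    # Linear rescale: the scale minimum maps to 0, the maximum maps to 100.
    return 100 * (mean(items) - LIKERT_MIN) / (LIKERT_MAX - LIKERT_MIN)


def team_scores(responses: list[Response]) -> list[float]:
    """Score only respondents who say AI is helping their team move faster."""
    return [fragmentation_score(r) for r in responses if r.ai_speeds_team_up]
```

For example, `fragmentation_score(Response(True, 2, 3, 4, 1))` returns `37.5`. Equal weights and a linear rescale are simply the most transparent choice; the description above doesn’t say how the items were weighted.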

To quantify the fragmentation tax in dollars lost over time, we compared how much time high-tax and low-tax teams spend on coordination overhead (a gap of about 6.4 hours per person per week) and extrapolated that lost time across average Fortune 500 salaries and headcounts, arriving at an estimated $161B wasted annually.
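The dollar extrapolation boils down to simple arithmetic: hours lost per week, times working weeks per year, times an hourly cost, times headcount. The sketch below assumes 48 working weeks, a 2,080-hour paid year, and salary as the only labor cost. All three are illustrative assumptions rather than the report’s inputs, so it won’t reproduce the $161B figure.

```python
def annual_fragmentation_cost(
    hours_lost_per_week: float,
    avg_annual_salary: float,
    headcount: int,
    working_weeks: int = 48,            # assumption: weeks actually worked per year
    paid_hours_per_year: float = 2080,  # assumption: 40 hours x 52 weeks
) -> float:
    """Salary cost of coordination time lost per year (ignores benefits and overhead)."""
    hourly_cost = avg_annual_salary / paid_hours_per_year
    hours_lost_per_year = hours_lost_per_week * working_weeks
    return hours_lost_per_year * hourly_cost * headcount
```

For a hypothetical 1,000-person company with a $104,000 average salary ($50 an hour), `annual_fragmentation_cost(6.4, 104_000, 1_000)` comes to roughly $15.36 million a year.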

How to use AI to supercharge your teams instead of slowing them down

The fragmentation tax is widespread, but it isn’t inevitable. When Atlassian researchers examined what top-performing teams do differently, three coordination disciplines emerged, each involving meaningful changes to how teams plan, share knowledge, and move work across functions:

- Context: Can your teams (and their AI tools) access the same goals, decisions, and data, or is everyone working from a different version of the truth?

- Workflows: Does AI plug into how work actually moves across people and teams, or is it a collection of individual shortcuts that create more to review?

- Culture: Are people learning from each other’s AI experiments, or is everyone figuring it out alone?

Just 14% of teams practice all three. Those that do cut their fragmentation tax nearly in half (by 46%) compared to teams practicing none, and they see dramatically higher returns from AI across planning, collaboration, and team cohesion. They are:

- 5.6x more likely to say AI helps them to better plan and prioritize work

- 9.4x more likely to say AI increases collaboration

- 13x more likely to feel connected to their teammates

Speed is easy to buy; alignment isn’t

Most organizations are spending on AI as if production is the bottleneck. The data says otherwise: the constraint is coordination, and the cost of ignoring it is measurable. The organizations pulling ahead aren’t just deploying AI faster; they’re redesigning how their teams stay aligned while they move fast.

Want to operationalize these habits? Read the full State of Teams 2026 report for the data, frameworks, and concrete steps to embed these ways of working across your organization.