AI is spreading quickly through teams and organizations. But when asked for hard ROI figures to measure impact, leaders often scramble to gather time‑saved estimates and productivity anecdotes, which capture only a narrow slice of the story.

Atlassian’s Teamwork Lab set out to answer a core question: How does AI create value for enterprises, and which metrics matter most at each stage of maturity?

The Enterprise AI ROI Value Framework

Clarifying how AI creates value across four stages of maturity

What we did:

- We mapped how AI value actually shows up in large organizations, from early experiments to net‑new employee-facing AI tools

- We synthesized that data and research into a four‑stage Enterprise AI ROI Value Framework

- We paired each stage with concrete ROI signals and suggested metrics to track

Why it’s useful:

- You stop “guessing” at AI ROI: match your metrics to where you really are, whether that is Exploring, Optimizing, Enhancing, or Transforming

- You align leaders and teams on what “good” looks like, giving execs, AI leaders, and team managers a shared language and ladder for AI impact

- You make smarter AI investment decisions: once everyone understands the next rung on the ladder, you can prioritize the AI bets that will help you climb it the fastest

“Our number-one problem right now is metrics. When it comes to AI, our board wants to understand what’s happening when the rubber meets the road.” – Chief AI Officer overseeing 1000+ engineers

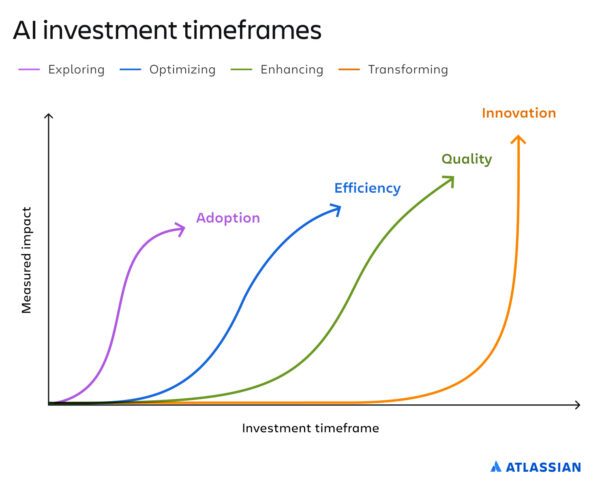

Classic ROI math assumes a clean, linear cause and effect. But AI value doesn’t arrive in one big bang. As an organization’s AI use matures, adoption usually flows from individual → team → organization‑wide:

- Individuals experiment and find promising use cases.

- Teams standardize those use cases into shared workflows.

- Organizations invest in platforms, governance, and structure to scale what works.

ROI compounds as AI usage moves from the individual to the collective. Lone superusers can generate personal gains, but the real enterprise impact happens when teams and organizations align around AI-first ways of working together.

Atlassian’s Enterprise AI ROI Value Framework defines four AI maturity stages and the primary outcome to measure at each:

- Exploring

  - Looks like: Employees and teams experimenting with AI and running pilots

  - At this stage, focus your measurements around adoption

- Optimizing

  - Looks like: AI is being embedded into everyday workflows

  - At this stage, focus your measurements around efficiency

- Enhancing

  - Looks like: AI is improving accuracy, consistency, and customer outcomes

  - At this stage, focus your measurements around quality

- Transforming

  - Looks like: AI is enabling net‑new products, services, and business models

  - At this stage, focus your measurements around innovation

Here’s how to pinpoint where your organization is on the maturity ladder and accelerate your climb.

Exploring: Measure adoption and build a foundation

Most organizations are in this category.

This is where teams are experimenting with AI tools and piloting new use cases. It’s a critical investment phase. You’re seeding skills, building comfort, and spotting where AI can have the most impact. It can also be frustrating, because this stage involves a lot of “failing forward”: uptake can be uneven across teams, and many pilots can feel like “two steps forward, one step back.”

Some leaders are tempted to dismiss this exploration as “AI tourism” or a distraction from core work. But when you’re exploring, your goal isn’t to prove AI has already paid for itself in dollars. It’s to answer a more basic question: Are people using AI enough, and in the right ways, to learn anything useful?

Exploration is not waste. It’s a prerequisite to unlocking possibility. Organizations that under‑invest here can’t move up the ladder to the efficiency, quality, or innovation gains to come.

ROI metrics to track in the Exploring phase

- % of employees experimenting with AI in their work (tracking daily, weekly, and monthly active users of AI tools across levels and functions)

- Participation in AI events and training: AI labs, hackathons, innovation days, workshops

- Number and breadth of pilots: how many experiments you’re running, and how many teams are involved

- Investment in exploration, such as:

  - Time spent in AI education or experimentation

  - Dedicated AI champions or teams

  - Budget set aside for trying and evaluating tools
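As a rough illustration of the first metric above, the sketch below computes daily, weekly, and monthly active-user rates from a usage log. The log format, the `active_user_rate` helper, the employee IDs, and the headcount are all hypothetical assumptions made for the example; they are not part of the framework or of any particular tool's export.

```python
from datetime import date, timedelta


def active_user_rate(usage_events, headcount, as_of, window_days):
    """Share of employees with at least one AI usage event in the trailing window.

    usage_events: iterable of (employee_id, date) pairs (assumed log format).
    window_days: 1 for daily, 7 for weekly, 30 for monthly (assumed windows).
    """
    start = as_of - timedelta(days=window_days - 1)
    active = {user for user, day in usage_events if start <= day <= as_of}
    return len(active) / headcount


# Hypothetical usage log: (employee_id, date the employee used an AI tool)
events = [
    ("ana", date(2025, 3, 31)),
    ("ana", date(2025, 3, 30)),
    ("ben", date(2025, 3, 27)),
    ("cho", date(2025, 3, 5)),
]
as_of = date(2025, 3, 31)
headcount = 10  # assumed total number of employees in scope

daily = active_user_rate(events, headcount, as_of, 1)
weekly = active_user_rate(events, headcount, as_of, 7)
monthly = active_user_rate(events, headcount, as_of, 30)

print(f"DAU {daily:.0%}, WAU {weekly:.0%}, MAU {monthly:.0%}")
```

Running the same calculation per function or per level, by filtering the log before calling the helper, would show where uptake is uneven across teams.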

Optimizing: Measure and increase efficiency

You know you’re in the Optimizing phase when teams across your org have created and refined a collection of high‑value AI-first workflows. The key difference from exploring is that AI is now tied to delivering faster outcomes, not just experimentation or play.

At this stage, your measurement focus should shift to operational efficiency. Workflows should feel faster, with improved visibility and collaboration. Gains should show up across teams and functions.

In this phase, you’ll start to see:

- AI embedded into existing workflows: coding, writing, customer support, reporting, research, QA, and more

- Individuals using AI to draft, summarize, translate, or troubleshoot, shaving minutes or hours off recurring tasks

- Early playbooks or “how we use AI here” guides shared within teams

AI ROI metrics to track in the Optimizing phase

- Time saved per task or workflow vs. a pre‑AI baseline

- Cycle time per workflow, before and after AI integration

- Throughput (e.g., tickets resolved, content shipped, tests run) with and without AI

- Automation rate: % of steps or tasks handled by AI

- Cost avoidance or reduction, including:

  - Less reliance on contractors or vendors

  - Fewer manual hours on repetitive, low‑value tasks

These numbers make AI ROI tangible for stakeholders and surface which use cases are worth standardizing and scaling.
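To make the arithmetic behind these efficiency metrics concrete, here is a minimal sketch. The function names and all input figures are illustrative assumptions rather than values from the research, and each calculation presumes you captured a pre‑AI baseline for the same workflow.

```python
def time_saved_pct(baseline_minutes, with_ai_minutes):
    """Percent of task or cycle time saved relative to the pre-AI baseline."""
    return (baseline_minutes - with_ai_minutes) / baseline_minutes * 100


def throughput_change_pct(baseline_units, with_ai_units):
    """Percent change in units completed per period (tickets, tests, etc.)."""
    return (with_ai_units - baseline_units) / baseline_units * 100


def automation_rate_pct(steps_handled_by_ai, total_steps):
    """Percent of workflow steps handled by AI."""
    return steps_handled_by_ai / total_steps * 100


# Hypothetical figures for a single support workflow
cycle_time_saved = time_saved_pct(baseline_minutes=40, with_ai_minutes=30)
throughput_gain = throughput_change_pct(baseline_units=80, with_ai_units=100)
automation = automation_rate_pct(steps_handled_by_ai=3, total_steps=8)

print(f"Cycle time saved: {cycle_time_saved:.1f}%")
print(f"Throughput change: {throughput_gain:.1f}%")
print(f"Automation rate: {automation:.1f}%")
```

Keeping all three as percentages against the same baseline period makes it easier to compare workflows of different sizes side by side.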

This is where most organizations think their AI story ends: “We made people more productive. Check!” But in reality, optimizing is only halfway up the ladder. If you stop here, you get faster outputs, but you risk eroding standards as work slop creeps in: more noise, more errors, and more low‑quality outputs moving faster than your review systems can handle.

The next maturity rungs are about using AI to raise the bar on quality and innovation, not just to move faster, and they take longer to show impact.

Enhancing: Measure and improve quality

Once AI is a routine part of how work gets done, a new opportunity opens up. You’re not just working faster; you’re systematically improving quality, accuracy, and consistency. This is where AI starts to powerfully assist in review, governance, and decision‑making, not just in drafting or ideation.

Signals that you’re in the Enhancing phase:

- AI review for documents and code to catch issues before they reach customers

- AI‑assisted QA that surfaces regressions, edge cases, or anomalies humans might miss

- Brand and tone checks to keep content on‑voice and compliant across teams

- Automated reviews to check against SLAs and regulatory requirements

ROI metrics to track in the Enhancing phase

- Error, defect, and rework rates, before vs. after integrating AI into the workflow

- Compliance and standards adherence: fewer violations, more consistent application of policies

- Customer outcomes clearly linked to AI‑assisted workflows, such as:

  - Improvements in CSAT or NPS

  - Better first‑contact resolution or time‑to‑resolution

  - Fewer incidents, escalations, or refund requests
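A before‑and‑after comparison of a quality metric can be sketched as follows. The helper names and the defect counts are hypothetical, and a simple before/after difference like this does not by itself isolate AI's contribution from other changes made in the same period, so treat it as a starting signal rather than proof.

```python
def rate(event_count, total_items):
    """Events (defects, rework tickets, violations) per item of work."""
    return event_count / total_items


def relative_change(before, after):
    """Relative change from before to after; negative means a reduction."""
    return (after - before) / before


# Hypothetical counts for one workflow, over periods of equal length
defect_rate_before = rate(event_count=30, total_items=600)  # pre-AI review
defect_rate_after = rate(event_count=18, total_items=600)   # with AI review

change = relative_change(defect_rate_before, defect_rate_after)

print(f"Defect rate before: {defect_rate_before:.1%}")
print(f"Defect rate after: {defect_rate_after:.1%}")
print(f"Relative change: {change:.0%}")
```

The same two helpers apply to rework rates, policy violations, or escalations, as long as the before and after periods cover comparable volumes of work.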

“Once you’ve nailed adoption and efficiency, the constraint isn’t how fast people can ship with AI, it’s how much you can trust what they ship,” says Teamwork Lab researcher Ben Ostrowski. “To move into the enhancing quality phase, the key is to measure AI against the KPIs you already care about, then isolate where AI‑enabled changes are making a difference.”

Transforming: Measure innovation

Once your organization reaches the Transforming phase (the end goal, and a stage few companies have yet achieved), metrics become easier to track in a more traditional ROI frame. AI is no longer just a way to do the same work better; it’s fueling entirely new features, products, and offerings.

Here, AI is tightly woven into your business strategy. You’ll see:

- Net‑new products, services, or experiences that wouldn’t exist without AI

- New operating models or business lines built on AI capabilities

- Structural changes: central AI platforms, incubators, dedicated AI product teams, or new role archetypes

AI ROI metrics to track in the Transforming phase

- Number of AI‑powered features, products, or offerings launched

- New IP created, such as patents, internal platforms, or proprietary models

- Net‑new revenue or margin attributable to AI initiatives

- Adoption and engagement with AI‑powered capabilities, compared to non‑AI alternatives

- Expansion into new markets or segments enabled by AI

By now, AI ROI starts to look like other large‑scale innovation bets. You can measure it through real business outcomes, not just operational metrics.

Turn AI ROI into a ladder, not a guessing game

AI ROI isn’t a single number you unveil at a board meeting. It’s a ladder: adoption, efficiency, quality, then innovation.

Knowing your rung keeps expectations realistic: don’t force “new revenue” on early experiments, and don’t dismiss breakthrough work as mere productivity.

“Good leaders understand that truly transformational innovation takes time,” says Ostrowski. “But if you expect AI to generate new revenue while you’re still in the early exploration phase, or if you talk about it only in terms of productivity and speed, you’re selling its potential short.”

If you’re ready to put this framework to work:

- Find your current stage with the AI ROI metrics assessment: Interactive assessment

- Run the AI ROI Play with your leadership team to align on the right next metric: Measure AI ROI Play

- Share the AI ROI one‑pager with stakeholders who need the 5‑minute version: AI ROI one-pager