Everyone is shipping AI products now, and most look impressive in demos but fail in the messy reality of daily work. The problem often isn’t the model; it’s the product built around it.

In this article, I’ll share what I’ve learned building AI products in environments where trust isn’t optional, and what it actually takes to earn it.

Imagine two AI tools analyzing a critical system outage: a service goes down, and engineers need to know why. Both AI tools are factually accurate. One generates a dense wall of text with the diagnosis buried in the third paragraph. The other confirms which services are affected, surfaces a clear visual timeline, and presents the diagnosis with supporting evidence.

The model performs equally well in both cases. But the engineer with the structured interface acts in seconds. The other spends ten minutes cross-referencing, still unsure. Same AI, different outcomes.

I’ve been leading design for AI products in Jira Service Management, now part of Atlassian’s Service Collection. These are systems teams rely on during outages, where time is short and wrong calls are costly. I’ve seen engineers trust wrong outputs and doubt correct ones. In both cases, the root cause was the same: the product didn’t give them the right signals to make a judgment.

To build AI products that work, we have to stop treating AI as a feature and start treating it as a design material. Unlike traditional materials, it does not follow fixed rules, behave consistently, or fail predictably. Designing with AI means embracing uncertainty as part of the material itself.

AI is a material, not a feature

With conventional software, behavior is deterministic. You know what it will do, and you design around that certainty. AI does not offer the same stability. The same input can produce different outputs, and a system can be confidently wrong while looking identical to when it is right.

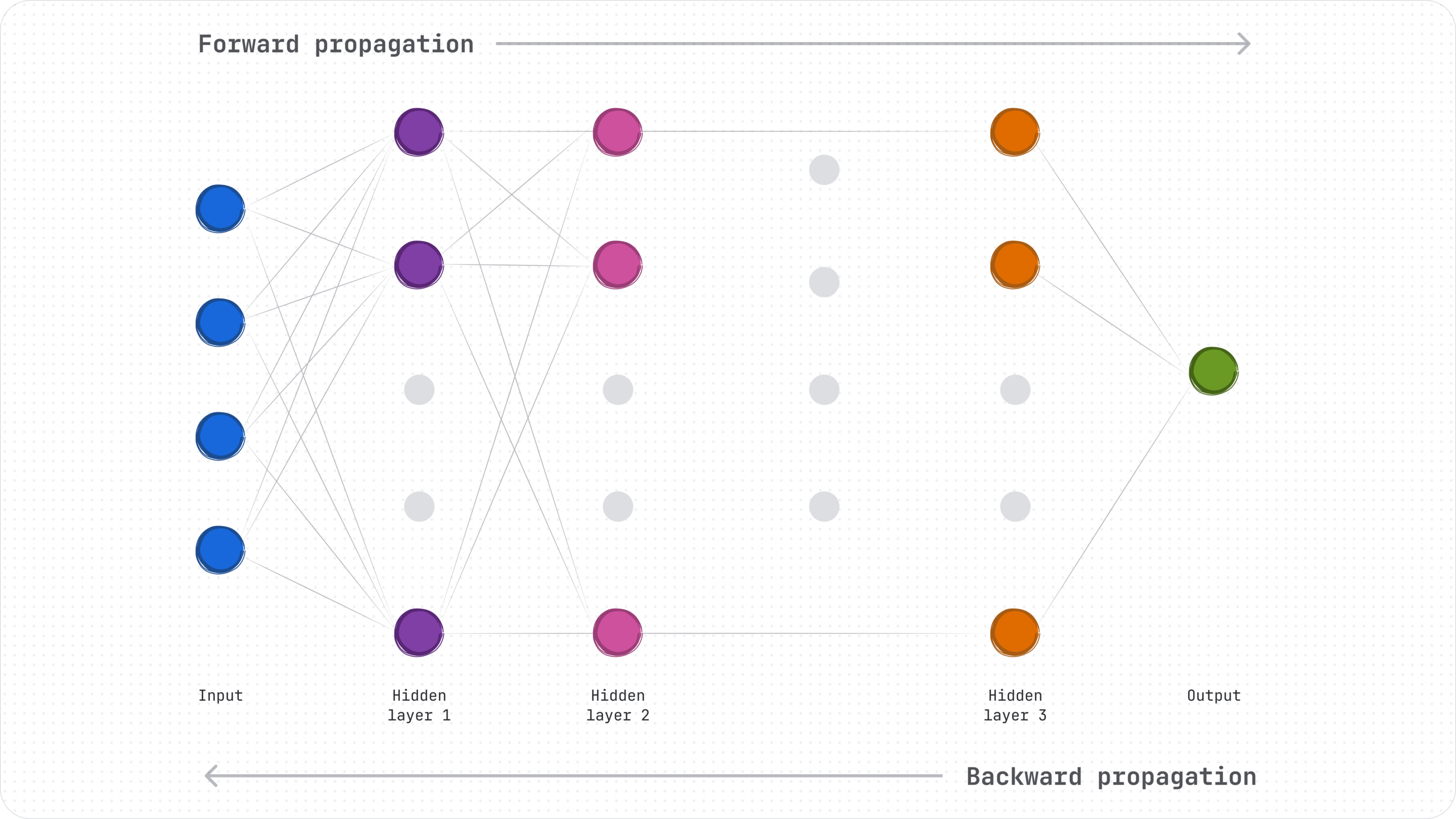

To understand why, it helps to look at how these systems actually work. Neural networks learn by spotting patterns, making predictions, and adjusting over time. Transformers, which power large language models, process whole passages at once, calculating how each part relates to the rest. This allows them to track context and remain coherent across longer interactions.

At their core, large language models don’t look facts up; they predict the next token (roughly, the next word or word fragment) from what came before. That means their outputs are shaped by probability, not truth. Rather than trying to eliminate uncertainty, we learned to design for it. Uncertainty isn’t a flaw. It’s an inherent property of AI systems.

Constraints like latency, cost, privacy, and context limits aren’t implementation details to hand off to engineering. They are properties of the material itself. Designers need to understand them in the same way they understand accessibility constraints or performance limits. They shape what kinds of experiences are possible.

The design levers that matter

If AI is a material, these are the levers that shape the experience:

- Inference latency – Response time defines the interaction. When replies are instant, it feels conversational, but when they aren’t, users need orientation. Progress states, staged outputs, and visible processing maintain trust.

- Model size versus performance – Smaller models reduce costs, improve privacy, and enable local execution, but may limit capability.

- Explainability and traceability – Black box answers erode trust in serious workflows. Surfacing rationale, sources, confidence signals, and limitations builds credibility.

- Robustness and edge handling – AI will encounter ambiguity and failure, but strong design can expose uncertainty, provide safe fallbacks, and avoid false precision.

AI behavior must be actively shaped, not simply wrapped in an interface. Once a model produces good results, most products default to shipping it in a chat box. It feels magical at first. Then the questions get harder, outputs get longer, and users are left guessing what it can do or whether to trust it. The default that felt magical turns out to be one of the most limiting.

Designer Maggie Appleton calls this where “squish meets structure.” The model is flexible and generative, while the product is expected to behave like reliable software. When that tension is unresolved, the cognitive load shifts to the user.

Designing structure around this isn’t about constraining what AI can do. It’s about making it usable.

From black box to collaborator

What does structure look like in practice? For us, it meant shifting from delivering answers to designing collaboration. The goal was not to expose the model’s internals, but to make its behavior understandable and steerable.

Our first AI feature for incident analysis looked solid on paper. It took context and returned a hypothesis with supporting evidence. Clean. Fast. Confident. Yet, engineers were skeptical almost immediately. The issue wasn’t that it was frequently wrong; it was that they had no way of knowing when it might be. They had to dig through long audit logs to make sense of its results, with no way to challenge its assumptions or redirect it. It felt like a verdict, not a partner.

What they wanted was control. Visibility into inputs. The ability to course correct. Space for their own judgment. Good AI products don’t just generate outputs. They make reasoning transparent and keep humans in the loop.

The principles behind collaborative AI

That early engineering feedback reshaped the experience and informed our design principles.

Expose intent before output – Engineers were catching errors too late, after the system had already committed to a solution. We learned that showing a brief summary of what it planned to do made mistakes cheaper and easier to intercept.

Design for decomposition – Large blocks of output overwhelmed users. Breaking work into stages that mirror how engineers reason made the system easier to follow and issues easier to fix.

Surface uncertainty – Output polished to sound confident backfired when errors surfaced. Signals like “based on the last 6 hours” or “low confidence” invited scrutiny and helped engineers catch mistakes quickly. Paradoxically, acknowledging uncertainty built trust.

Assume human participation – When we designed for full autonomy, engineers resisted. They wanted to co-work with the system, stay involved, and use their own judgment alongside AI.

When these principles come together, users stop negotiating with a black box and start collaborating with a tool.
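As a rough illustration of the “surface uncertainty” principle, here is a minimal Python sketch that attaches a confidence label and an evidence window to a finding before it is shown. The `Finding` type, the `confidence` score, the thresholds, and the example service name are all hypothetical, chosen only for illustration; they do not describe any real product’s implementation.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Finding:
    """A single AI conclusion plus the signals a reader needs to judge it."""
    conclusion: str
    confidence: float   # hypothetical score in [0.0, 1.0]
    window_hours: int   # how much history the analysis covered


def confidence_label(score: float) -> str:
    """Map a numeric score to a plain-language label (illustrative thresholds)."""
    if score >= 0.8:
        return "high confidence"
    if score >= 0.5:
        return "medium confidence"
    return "low confidence"


def render(finding: Finding) -> str:
    """Lead with the conclusion, then state its confidence and scope."""
    label = confidence_label(finding.confidence)
    return (
        f"{finding.conclusion} "
        f"({label}, based on the last {finding.window_hours} hours)"
    )


print(render(Finding("Connection pool exhausted on checkout-db", 0.4, 6)))
# prints: Connection pool exhausted on checkout-db (low confidence, based on the last 6 hours)
```

The point of the sketch is that the qualifier travels with the conclusion as data, so the interface can never show one without the other.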

Language is behavior design

Making AI collaboration work hinges on language. Because most AI tools are chat-based and text-heavy, content design plays a central role in making them trustworthy. It’s how we turn probabilistic outputs into clear, actionable guidance, especially when the pressure’s on.

Content designers and engineers collaborate on system prompts to ensure consistent, predictable outputs. Over time, they safeguard the AI’s voice, so the product feels coherent, rather than bolted together.

Upstream, content design shapes what the model does. Downstream, content design shapes how much work the user has to do.

| Upstream influence | Downstream influence |
|---|---|
| System prompts and roles | Output formatting and structure |
| Context framing | Progressive disclosure |
| Structured guidance | Confidence framing |
| Consistent language rules | Editing and correction tools |

Early on, I saw prompts as configuration, owned by engineers and reviewed by content designers at the end. That model quickly proved wrong. Treating prompt writing as design led to more consistent outputs, lower token usage, and easier scaling. We are still defining the right ownership model, but one thing is clear: system prompts are core design elements. In AI products, content design is behavior design through language.

Bounding creativity without killing it

Shaping behavior means knowing when variation is helpful and when it’s risky. In ideation, diverse AI outputs are valuable. But during a 2am outage or a compliance review, variation becomes a liability. The same model that’s generative in one context can be unpredictable and risky in another.

The key isn’t whether to constrain outputs, but when. AI involvement should scale with task sensitivity and tolerance for variance.

| Task type | Goal | Allowed variance | AI’s role | Example |
|---|---|---|---|---|
| Ideation | Generate options | High | Explore broadly | Marketing taglines, design concepts |
| Drafting | Produce a first version | Medium | Suggest structure and language | Reports, proposals |
| Extraction | Pull specific facts | Very low | Be precise | Incident timeline, affected services |
| Compliance | Enforce rules | Near zero | Check and flag | Policy violations, security checks |
| High-stakes response | Reduce risk | Minimal | Assist, don’t decide | Incident diagnosis, medical triage |

For designers and content teams, this means building clear boundaries: allow exploration where it’s safe, and apply controls where accuracy matters. At Atlassian, we make these boundaries explicit in the interface so users know when to expect creativity and when to expect reliability.
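One way to make the task-type table concrete is a small policy lookup, sketched here in Python. It assumes the model exposes a sampling temperature as its variance control, which is common but not universal, and a temperature of zero does not by itself guarantee identical outputs. The `VariancePolicy` type, the numeric values, and the `human_confirmation` flag are illustrative assumptions, not settings taken from any real product.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class VariancePolicy:
    temperature: float        # illustrative sampling temperature, not a recommendation
    human_confirmation: bool  # whether a person must approve before anything happens


# Keys mirror the task types in the table; every value is an assumption.
POLICIES = {
    "ideation": VariancePolicy(temperature=0.9, human_confirmation=False),
    "drafting": VariancePolicy(temperature=0.6, human_confirmation=False),
    "extraction": VariancePolicy(temperature=0.1, human_confirmation=False),
    "compliance": VariancePolicy(temperature=0.0, human_confirmation=True),
    "high_stakes_response": VariancePolicy(temperature=0.1, human_confirmation=True),
}

# Fallback for task types nobody has classified yet.
STRICTEST = VariancePolicy(temperature=0.0, human_confirmation=True)


def policy_for(task_type: str) -> VariancePolicy:
    """Return the policy for a task type, defaulting to the strictest one."""
    return POLICIES.get(task_type, STRICTEST)


print(policy_for("ideation"))
print(policy_for("something_unclassified"))
```

Defaulting unknown task types to the strictest policy is a deliberate choice in this sketch: an unclassified task is treated as sensitive until someone decides otherwise.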

Measuring what actually matters

Deciding when to constrain outputs is only half the challenge. The other half is knowing whether those decisions are working.

Most teams measure what’s easy: latency, reliability, source alignment. Those metrics matter, but they don’t tell you if the product truly helps people get their work done. What I’ve found more useful are signals that are closer to the user experience:

- Output structure – A correct answer in the wrong structure is still a failure. Lists should look like lists. Summaries should surface conclusions first. When structure breaks down, workflows break down.

- Tone consistency – Inconsistent tone erodes familiarity, and familiarity is how trust compounds over time.

- Verbosity – An incident diagnosis at 2am should not read like a research paper. Output length is a design decision. It shouldn’t be left to default settings.

- Correction cost – In staged interactions, accuracy was not the clearest signal. Correction rate was. Frequent redirects meant the output looked plausible but was not usable.
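Correction rate is simple to compute once each stage of an interaction is logged. The Python sketch below assumes a hypothetical `StageEvent` record with a `corrected` flag set whenever the user redirected or edited that stage’s output; the record shape and stage names are invented for illustration.

```python
from dataclasses import dataclass
from typing import Iterable


@dataclass(frozen=True)
class StageEvent:
    stage: str       # hypothetical stage name, e.g. "hypothesis"
    corrected: bool  # True if the user redirected or edited this stage's output


def correction_rate(events: Iterable[StageEvent]) -> float:
    """Fraction of stages the user had to correct; 0.0 when nothing was logged."""
    events = list(events)
    if not events:
        return 0.0
    return sum(e.corrected for e in events) / len(events)


session = [
    StageEvent("scope", corrected=False),
    StageEvent("timeline", corrected=False),
    StageEvent("hypothesis", corrected=True),
    StageEvent("next_steps", corrected=False),
]
print(correction_rate(session))  # prints: 0.25
```

Tracking the rate per stage, rather than per session, would also show where in the flow users most often have to step in.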

Evaluation doesn’t just assess how a product performs; it shapes what the product is allowed to become. Designers who co-define AI evaluation criteria, rather than leaving them to Product & Engineering, have more influence over the product than they might think.

Dependability is designed, not assumed

Measurement shows whether a product works. Dependability asks whether people rely on it when it matters. Patterns vary by domain, but the principle holds: when the cost of being wrong is real, dependability must be intentional from the start.

Trust is not built on capability alone. It is earned in the moments when AI hands control back to people. Escalation paths, review checkpoints, and deliberate pauses are not safeguards added at the end. They are foundational product choices. We built this into our incident response flow. The AI summarizes, proposes, then stops. A human confirms before anything happens. That pause felt small in testing. In practice, it determined whether engineers were willing to depend on it at all.
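The “summarizes, proposes, then stops” pattern can be sketched as a confirmation gate. This is a minimal Python illustration under assumed names: `Proposal`, `run_with_checkpoint`, and the `confirm` and `execute` callbacks are hypothetical and stand in for whatever review UI and action runner a real product would use.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass(frozen=True)
class Proposal:
    summary: str  # what the AI believes is happening
    action: str   # what it proposes to do about it


def run_with_checkpoint(
    proposal: Proposal,
    confirm: Callable[[Proposal], bool],
    execute: Callable[[str], str],
) -> Optional[str]:
    """Execute the proposed action only after a human confirms it.

    Returns the execution result, or None if the human declined.
    """
    if not confirm(proposal):
        return None  # the AI stops here; nothing happens
    return execute(proposal.action)


proposal = Proposal(
    summary="Error rate spiked after the latest deploy",
    action="roll back the latest deploy",
)
# A declined proposal never reaches the executor.
print(run_with_checkpoint(proposal, lambda p: False, lambda a: f"ran: {a}"))
# prints: None
print(run_with_checkpoint(proposal, lambda p: True, lambda a: f"ran: {a}"))
# prints: ran: roll back the latest deploy
```

Because `execute` is only reachable through `confirm`, the pause is part of the control flow rather than a warning the user can scroll past.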

Closing the gap between capability and dependability takes more than a better model. It takes thoughtful structure: checkpoints, confidence signals, and correction paths that make mistakes recoverable. That is what turns a powerful tool into something people rely on.