How to scale CI/CD and improve geo-performance in Bitbucket Data Center

The latest in the evolution of smart mirroring.

Over three years ago, we introduced smart mirroring in Bitbucket Data Center to improve Git read performance, especially clone performance, for distributed teams working with large repositories.

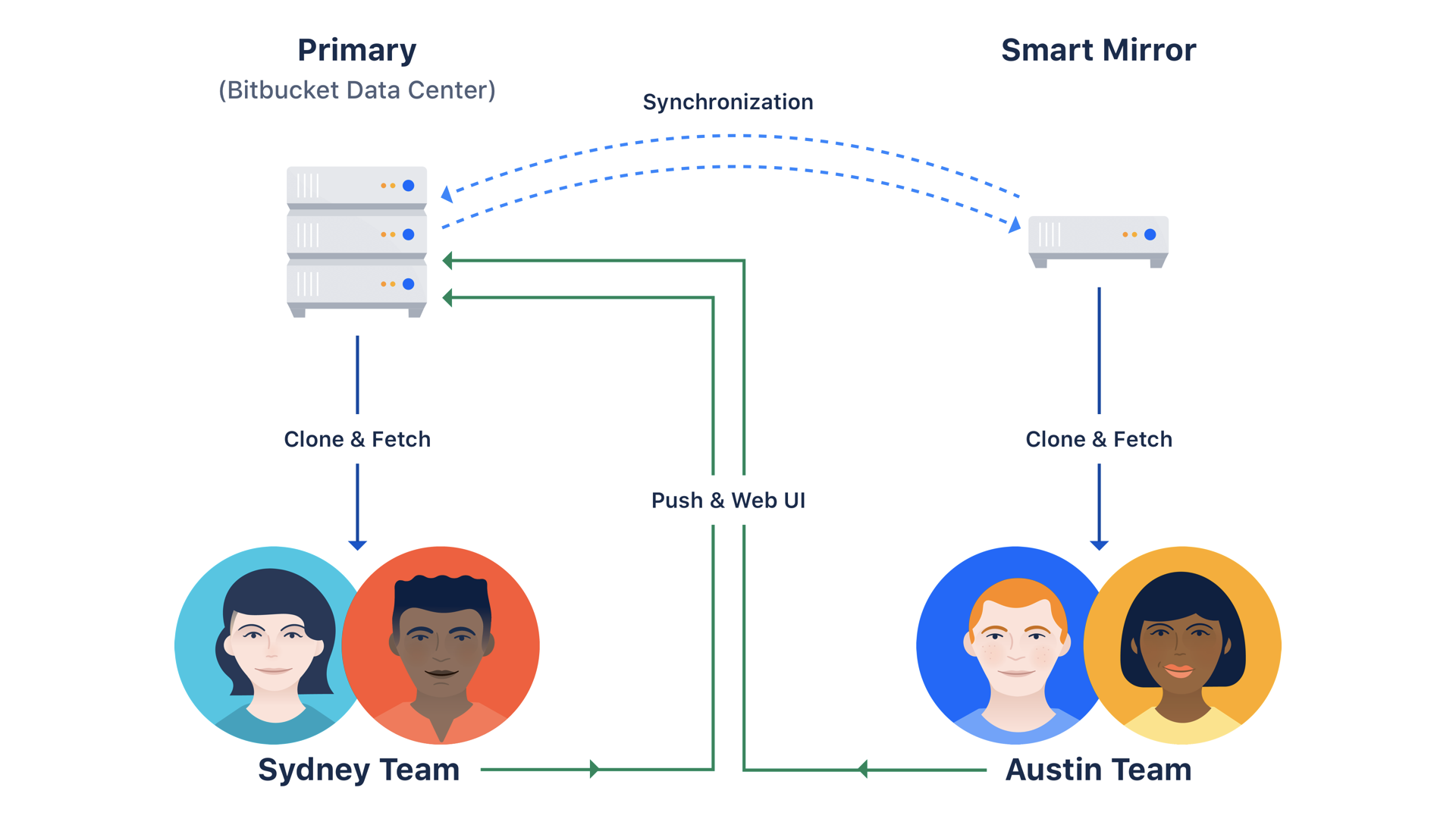

How it works

Smart mirroring allows you to set up one or more live mirror nodes in remote locations. Each mirror holds read-only copies of repositories that are automatically synchronized from the primary instance, and can host all or a subset of the primary’s Git repositories. Distributed teams can then fetch from a nearby mirror rather than sending every request back to the primary node, significantly reducing the time it takes to get their work done.
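To make the read/write split concrete, here is a minimal sketch of how a client might decide where to fetch from. It is an illustration only: the host names, the `Mirror` class, the URL layout, and the `fetch_url` and `push_url` helpers are all hypothetical and are not part of Bitbucket’s API.

```python
from dataclasses import dataclass, field

# Hypothetical address of the primary instance (an assumption for this sketch).
PRIMARY_URL = "https://bitbucket.example.com"


@dataclass
class Mirror:
    """A read-only mirror that hosts all or a subset of the primary's repos."""
    base_url: str
    repos: set = field(default_factory=set)  # repositories this mirror hosts


def fetch_url(repo: str, mirror: Mirror, primary_url: str = PRIMARY_URL) -> str:
    """Read from the nearby mirror if it hosts the repo, else from the primary."""
    base = mirror.base_url if repo in mirror.repos else primary_url
    return f"{base}/{repo}.git"


def push_url(repo: str, primary_url: str = PRIMARY_URL) -> str:
    """Mirrors are read-only copies, so writes always target the primary."""
    return f"{primary_url}/{repo}.git"


if __name__ == "__main__":
    nearby = Mirror("https://mirror-blr.example.com", {"PROJ/big-repo"})
    print(fetch_url("PROJ/big-repo", nearby))   # served by the mirror
    print(fetch_url("PROJ/other-repo", nearby)) # not mirrored, falls back to primary
    print(push_url("PROJ/big-repo"))            # pushes go to the primary
```

The point of the sketch is only that reads can be served close to the team while the primary remains the single source of truth.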

Smart mirroring solved many challenges for organizations, such as slow Git reads and clones. However, as companies have become more distributed, the number of continuous integration (CI) builds has grown fast enough to cause serious congestion, slowing down work and sometimes even stopping it completely.

The evolution of smart mirroring

Let’s look at this in practice. Imagine there is a team in India that has set up a nearby mirror. They’ve pointed builds to this standalone mirror, but quickly realize it isn’t scaling. They consider adding another standalone mirror, but this also is not scalable; it requires complex configurations to determine which build clones from which mirror.

To help teams like this, we’ve introduced the latest evolution of smart mirroring: mirror farms that scale and increase CI/CD capacity. This new feature allows users to cluster mirrors into “farms” grouped behind a load balancer, reducing the time teams spend waiting for build results. Teams can point their builds to a single location (the URL of the load balancer) and add more mirrors to scale elastically. On top of improved scalability, a farm also provides high availability; if one mirror in a farm goes down, the remaining mirrors can support the build load.
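The behavior described above can be sketched as a toy model: builds ask one entry point for a mirror, requests are spread across the farm, unhealthy mirrors are skipped, and new mirrors can be added at any time. This is a simplified illustration assuming round-robin routing; the `MirrorFarm` class and the mirror names are hypothetical, and a real load balancer may use a different strategy.

```python
class MirrorFarm:
    """Toy model of a mirror farm: several mirrors behind a single entry point."""

    def __init__(self, mirrors):
        self.mirrors = list(mirrors)
        self.healthy = set(self.mirrors)
        self._next = 0  # round-robin position

    def add_mirror(self, mirror):
        """Scale out: a new mirror starts receiving traffic immediately."""
        self.mirrors.append(mirror)
        self.healthy.add(mirror)

    def mark_down(self, mirror):
        """Record that a mirror failed its health check."""
        self.healthy.discard(mirror)

    def route(self):
        """Return the next healthy mirror in round-robin order."""
        for _ in range(len(self.mirrors)):
            candidate = self.mirrors[self._next % len(self.mirrors)]
            self._next += 1
            if candidate in self.healthy:
                return candidate
        raise RuntimeError("no healthy mirrors left in the farm")


if __name__ == "__main__":
    farm = MirrorFarm(["mirror-a", "mirror-b", "mirror-c"])
    print([farm.route() for _ in range(6)])  # load spread across all three

    farm.mark_down("mirror-b")               # one mirror goes down
    print([farm.route() for _ in range(4)])  # remaining mirrors absorb the load

    farm.add_mirror("mirror-d")              # add capacity without touching builds
    print([farm.route() for _ in range(6)])
```

Because builds only ever know about the farm’s single address, adding or losing a mirror requires no change to any build configuration.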

Atlassian’s migration to mirror farms

A few months ago, we migrated to mirror farms internally to support our ever-increasing number of builds. To shift all our internal CI systems over to mirror farms, we began with some of our smaller Bamboo instances. When that succeeded, we moved a product that consumes a much larger amount of CI resources. For this move, we did not turn on repository cache pre-warming, which resulted in a significant amount of data being transferred and spiked CPU usage to nearly 100% on each node.

Despite this, the farm remained stable and available. As we looked to make the migrations even easier, we took advantage of a new REST endpoint (now available in Bamboo 6.10.3) to migrate entire instances at once. With this new endpoint, we were able to move our remaining 10-plus instances without a single incident, and shortly thereafter, we switched all traffic to the main cluster.

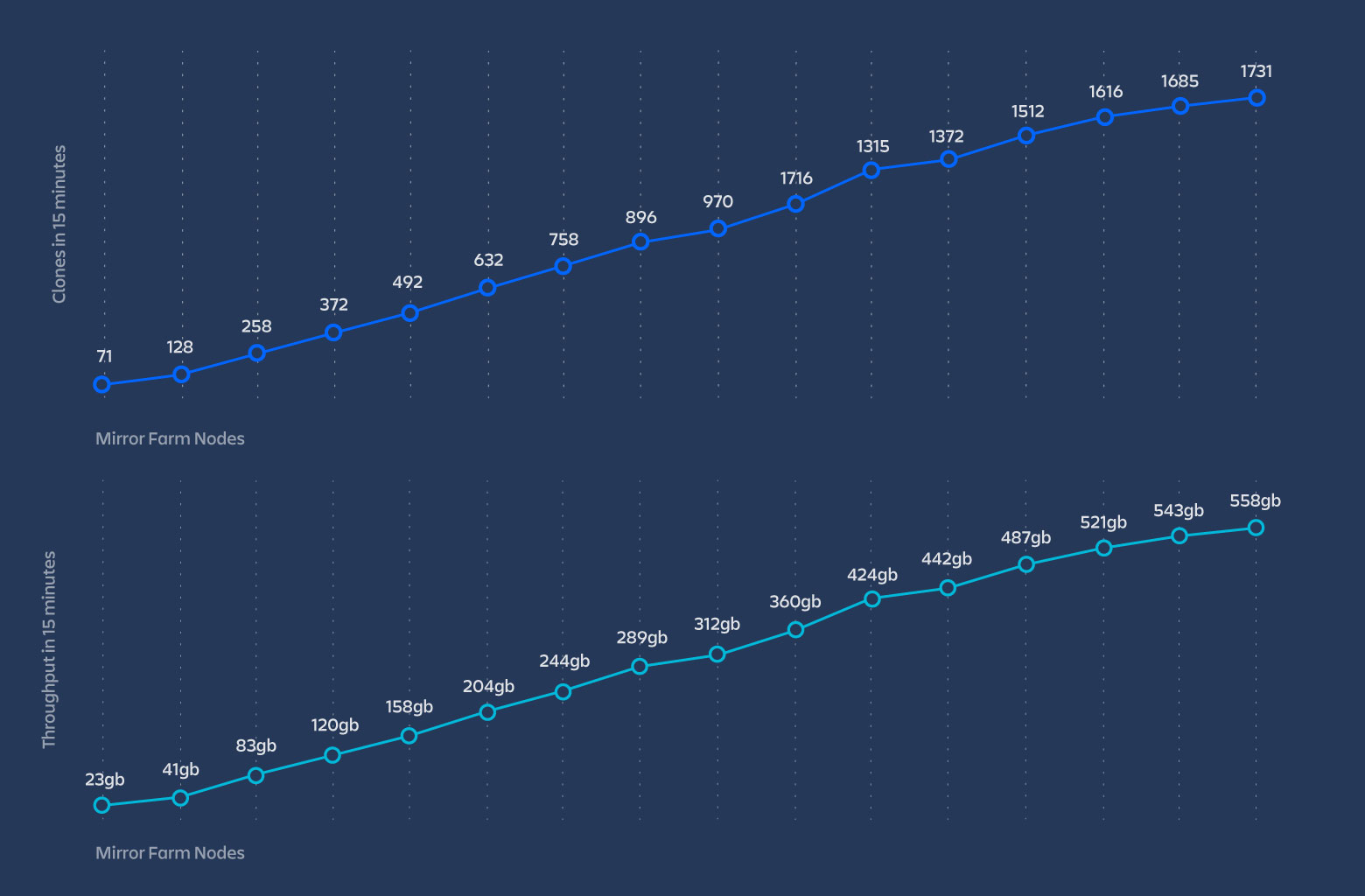

On top of our own experiences, our performance tests also turned up strong results. As you increase the number of mirror farm nodes, the load balancer throughput also increases linearly.

In addition to mirror farms, we also recently added support for any Content Delivery Network (CDN) in each of our Data Center products. As of Bitbucket Data Center 6.8, you can enable any CDN (public, private, or reverse proxy) to cache static assets for an additional performance boost for your distributed teams.

To learn more about mirror farms or CDN and how to get started, check out our documentation or watch our webinar.