Rovo Dev code reviewer reviews your pull requests (PRs), proactively catching quality, security, and performance issues early while recommending best practices for code quality and maintainability. In research accepted at ICSE 2026, code reviewer was observed to reduce PR cycle time by 30.8%.

Introduction

Code review is a critical pillar of high-quality software engineering at Atlassian. It enables our teams to find bugs early, maintain coding standards, and share knowledge effectively. However, with the rapid growth of our products and the complexity of our codebase, manual code reviews can become a significant bottleneck in our development lifecycle. In today’s fast-paced, context-driven environment, we need ways to help our engineers ship high-quality code faster, without compromising our high standards.

To address this challenge, we developed Rovo Dev Code Reviewer, a large-scale AI software development agent designed to automate parts of the code review process. Code reviewer isn’t meant to replace human judgment; instead, it’s a human-in-the-loop AI partner designed to augment our engineering workflow. After a year-long, large-scale online evaluation across more than 1,900 repositories, Rovo Dev has shown significant impact: it reduced median PR cycle time by 30.8% and cut human-written review comments by 35.6%. It became Generally Available (GA) in October 2025 after receiving widespread positive feedback from open Beta customers.

In this blog post, we’ll dive into how we built Rovo Dev Code Reviewer, our human-centric approach to AI automation, and the compelling results from our internal online evaluation.

Our research on Rovo Dev code reviewer’s online evaluation has been accepted by the 48th IEEE/ACM International Conference on Software Engineering (ICSE’26), a prestigious software engineering conference. This research was a collaboration between Atlassian’s DevAI engineering team, our data science team, and Associate Professor Kla Tantithamthavorn from Monash University. We’re thrilled to share these insights and contribute to the evolving landscape of AI-assisted engineering. This milestone highlights our dedication to innovation and underscores our belief that the future of software lies in the synergy between human expertise and AI.

The Challenges of Manual Code Review

Manual code review is invaluable, but it has inherent challenges:

- Time-consuming: Engineers can spend hours reviewing code, reducing their time for new feature development.

- Bottleneck for fast shipping: Delays in code reviews can slow down the deployment of features and bug fixes.

- Potential for human error: Reviewers may miss subtle bugs, inconsistencies, or style issues.

- Context switching: Engineers have to pause their coding flow to review pull requests (PRs).

Our goal with Rovo Dev Code Reviewer was to create an intelligent assistant that could handle the repetitive, context-independent parts of code review, allowing our engineers to focus on the high-level logic, architecture, and complex design problems that truly require human expertise.

Designing Rovo Dev Code Reviewer: Zero-Shot, Context-Aware, and Quality-Checked

From the very beginning, privacy and data security were our paramount concerns. For our enterprise customers, data protection is a non-negotiable requirement. That is why we rely on a zero-shot structured prompting approach, which does not require training the LLM on customer code. The prompt is augmented with readily available contextual information (i.e., pull request and Jira issue information) and is structured around a persona, chain-of-thought reasoning, and review guidelines.

Rovo Dev Code Reviewer is built on top of a powerful LLM (Anthropic’s Claude 3.5 Sonnet) and is integrated into Bitbucket. It’s composed of three key stages that ensure high-quality and reliable results:

1. Review-Guided, Context-Aware Comment Generation

Our approach starts with a detailed prompt that instructs the LLM on how to perform a comprehensive code review. This prompt is carefully structured with several vital components:

- Persona Definition: We assign the LLM a persona, instructing it to act as an experienced Atlassian software engineer.

- Task Definition: Clear and explicit instructions are given to the LLM about the code review task for a specific PR.

- Chain of Thought (CoT): We guide the LLM through a step-by-step reasoning process before it generates any comments. This helps the model “think aloud,” ensuring logical consistency.

- Structured Review Guidelines: Our prompt includes well-crafted engineering guidelines for code, test files, and comments. This ensures that all generated comments align with the highest standards of enterprise quality and best practices.

- Rich Contextual Information: We provide Rovo Dev Code Reviewer with comprehensive context, including:

  - PR title and description (high-level intent and summary)

  - Related Jira issue summary and description (business motivation and requirements)

  - The complete code change (diffs)

This combination of structured instruction and comprehensive context enables Rovo Dev to understand the purpose of a pull request and provide meaningful and relevant feedback.
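To make the prompt structure above concrete, here is a minimal sketch of how the five components could be assembled into a single zero-shot prompt. It is an illustration only: the class and function names, the section headings, and all of the prompt wording are assumptions, not the actual Rovo Dev prompt.

```python
from dataclasses import dataclass


@dataclass
class ReviewContext:
    """Context passed to the reviewer (field names are hypothetical)."""
    pr_title: str
    pr_description: str
    jira_summary: str
    jira_description: str
    diff: str


# Placeholder wording for illustration, not the production prompt text.
PERSONA = "You are an experienced Atlassian software engineer."
TASK = "Review the code change in this pull request and write review comments."
CHAIN_OF_THOUGHT = (
    "Before commenting, reason step by step: what is the change trying to do, "
    "does the diff achieve it, and what could go wrong?"
)
GUIDELINES = [
    "Comment only on lines changed in the diff.",
    "Prefer specific, actionable suggestions.",
]


def build_review_prompt(ctx: ReviewContext) -> str:
    """Assemble one zero-shot prompt: instructions first, then context."""
    guideline_text = "\n".join(f"- {g}" for g in GUIDELINES)
    sections = [
        ("Persona", PERSONA),
        ("Task", TASK),
        ("Reasoning", CHAIN_OF_THOUGHT),
        ("Review guidelines", guideline_text),
        ("Pull request", f"{ctx.pr_title}\n{ctx.pr_description}"),
        ("Jira issue", f"{ctx.jira_summary}\n{ctx.jira_description}"),
        ("Diff", ctx.diff),
    ]
    return "\n\n".join(f"## {name}\n{body}" for name, body in sections)
```

Because the approach is zero-shot, the prompt contains no example reviews; everything the model knows about the change comes from the instructions and the context sections.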

2. Comment Quality Check on Factual Correctness

One of the biggest issues with LLMs is the potential for hallucination: generating comments that may be inaccurate, inconsistent, or nonsensical. To combat this, we added a second stage: a dedicated “LLM-as-a-Judge” component based on a cheaper model (gpt-4o-mini). This judge acts as a gatekeeper, reviewing every generated comment for factual correctness against the code change. If a comment is hallucinated or factually incorrect, it’s filtered out. This stage ensures that we only post valid and reliable feedback to our engineers.
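The gatekeeper idea can be sketched as a filter that runs a judge over each generated comment. In the sketch below the judge is a plain callable so the example stays runnable offline; in a real system it would wrap a call to the judge model. The `Judge` signature, the function names, and the toy heuristic are all assumptions for illustration and are not how the production judge decides.

```python
import re
from typing import Callable, Iterable, List

# A judge receives (diff, comment) and returns True when the comment is
# factually supported by the diff. This signature is an assumption.
Judge = Callable[[str, str], bool]


def filter_by_factual_correctness(
    diff: str, comments: Iterable[str], judge: Judge
) -> List[str]:
    """Keep only the comments the judge accepts; drop the rest."""
    return [comment for comment in comments if judge(diff, comment)]


def toy_judge(diff: str, comment: str) -> bool:
    """Offline stand-in for an LLM judge.

    Accepts a comment only if every back-ticked identifier it mentions
    actually appears in the diff. Comments without identifiers pass.
    """
    identifiers = re.findall(r"`([^`]+)`", comment)
    return all(identifier in diff for identifier in identifiers)


diff = "+ def parse_config(path):\n+     return open(path).read()"
candidates = [
    "`parse_config` never closes the file handle.",
    "`load_settings` shadows a built-in.",  # refers to code not in the diff
]
kept = filter_by_factual_correctness(diff, candidates, toy_judge)
```

Here `kept` contains only the first comment, because the second one refers to an identifier that does not exist in the change.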

3. Comment Quality Check on Actionability

Even factually correct comments can be unhelpful if they are vague or non-actionable (e.g., “This needs improvement” or “Add a blank line here”). To filter out this noise, we developed our final stage: a ModernBERT-based comment quality check on actionability.

We fine-tuned ModernBERT, an encoder-only model known for its memory efficiency and long context length, using a proprietary, internally sourced dogfooding dataset of over 50,000 high-quality, Rovo Dev-generated comments. We trained this model to classify comments based on whether they led to a code resolution, that is, a clear, actionable suggestion that triggered a code change. This ensures that Rovo Dev code reviewer prioritizes comments that are genuinely useful and likely to drive code resolution. More details on how we trained the model can be found in this blog.
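As a rough sketch of this stage, the code below shows the two ideas described above: turning historical comments into binary training labels based on whether they led to a code change, and filtering new comments by a classifier score. The data class, the function names, the 0.5 threshold, and the toy scorer are all hypothetical; a real deployment would call the fine-tuned model where `toy_scorer` appears.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple


@dataclass
class ReviewComment:
    """A past AI-generated comment and what happened to it (hypothetical schema)."""
    text: str
    led_to_code_change: bool


def to_training_example(comment: ReviewComment) -> Tuple[str, int]:
    """Label 1 if the comment was resolved by a code change, else 0."""
    return (comment.text, 1 if comment.led_to_code_change else 0)


# A scorer maps comment text to an estimated probability of resolution.
Scorer = Callable[[str], float]


def keep_actionable(
    comments: List[str], scorer: Scorer, threshold: float = 0.5
) -> List[str]:
    """Keep comments whose score meets the (assumed) threshold."""
    return [c for c in comments if scorer(c) >= threshold]


def toy_scorer(text: str) -> float:
    """Offline stand-in for the classifier: favors comments that name code."""
    return 0.9 if "`" in text else 0.1


history = [
    ReviewComment("Rename `tmp` to `retry_count`.", True),
    ReviewComment("This needs improvement.", False),
]
training_set = [to_training_example(c) for c in history]
posted = keep_actionable([c.text for c in history], toy_scorer)
```

In this toy run only the rename suggestion survives the filter, mirroring the intent of dropping vague comments before they reach the PR.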

Atlassian’s Human-AI Collaboration Approach

Rovo Dev code reviewer isn’t an autonomous agent; it’s a human-in-the-loop AI software development agent. This is a core part of Atlassian’s philosophy for the future of teamwork. AI should empower, not replace.

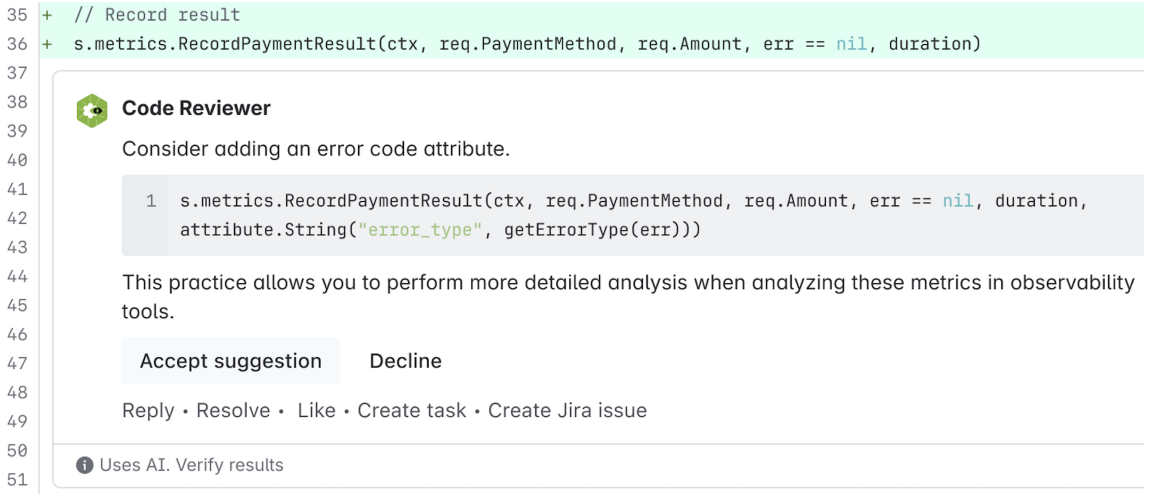

Rovo Dev works alongside our engineers, performing the initial “first pass” review. It posts comments that suggest improvements and flag potential bugs, but the final decision to accept or decline a suggestion rests solely with the human reviewer. This approach:

- Builds trust: Engineers can verify AI suggestions before accepting them.

- Maintains accountability: Human engineers are always the ultimate decision-makers.

- Respects human autonomy: Engineers remain in control of their development process.

- Supports iterative improvement: Human feedback is used to refine and improve the AI over time.

Rovo Dev’s suggestions can be accepted with a single click in Bitbucket, making it easy and seamless to integrate into an engineer’s existing workflow.

Large-Scale Online Evaluation at Atlassian

After extensive internal testing and “dogfooding,” we deployed Rovo Dev Code Reviewer at scale. Our evaluation, spanning over a year and across 1,900+ repositories, demonstrated compelling results:

Key Findings:

- Accelerated Development Cycle: We observed a significant 30.8% reduction in median PR cycle time. This means features and bug fixes get to production faster.

- Reduced Workload: Rovo Dev helped reduce the number of human-written comments by 35.6%, freeing up our engineers to focus on more complex tasks.

- High-Quality, Resolved Comments: 38.70% of Rovo Dev-generated comments led directly to code changes in the subsequent commit. While this is less than the resolution rate for human comments (44.45%), it is a strong indicator of Rovo Dev’s ability to provide high-value, actionable suggestions. Readability, bugs, and maintainability were the most resolved comment types.

- Positive Engineering Perception: Qualitative feedback from our engineers was overwhelmingly positive. Rovo Dev code reviewer was praised for its accuracy in finding both nuanced bugs and subtle errors (e.g., duplicate method names), and for its actionable suggestions.
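For readers who want to see how metrics of this kind are computed, the sketch below shows one straightforward reading of "reduction in median PR cycle time" and "resolution rate". The function names and the sample numbers are made up for illustration and are not data from the evaluation; the exact methodology in the paper may differ.

```python
from statistics import median
from typing import Sequence


def percent_reduction(baseline: float, treated: float) -> float:
    """Relative drop from baseline to treated, as a percentage."""
    if baseline <= 0:
        raise ValueError("baseline must be positive")
    return (baseline - treated) / baseline * 100.0


def resolution_rate(resolved_flags: Sequence[bool]) -> float:
    """Share of comments followed by a code change, as a percentage."""
    if not resolved_flags:
        raise ValueError("need at least one comment")
    return 100.0 * sum(resolved_flags) / len(resolved_flags)


# Toy cycle times in hours (hypothetical values, not evaluation data).
without_reviewer = [10.0, 20.0, 30.0]
with_reviewer = [8.0, 15.0, 40.0]

cycle_time_reduction = percent_reduction(
    median(without_reviewer), median(with_reviewer)
)  # medians are 20.0 and 15.0, so the reduction is 25.0

toy_resolution = resolution_rate([True, False, True, True])  # 75.0
```

Using the median rather than the mean keeps a few very long-running PRs (such as the 40-hour one in the toy data) from dominating the comparison.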

Conclusion

We are thrilled with the impact Rovo Dev Code Reviewer is having on our engineering team. It has already made a substantial difference by accelerating our development process and reducing the manual effort of code review. By taking a human-centric, quality-checked, and context-aware approach, we have successfully built an enterprise-grade AI software development agent that our engineers can rely on. Rovo Dev is not just a tool; it’s a partner that empowers our teams to ship better software, faster.

Our work is far from done. We are continually refining Rovo Dev by investigating advanced context enrichment techniques and improving its agentic capability. We are excited about the future of AI-powered software development at Atlassian and are committed to creating tools that empower teams to do their best work.

Finally, we would like to acknowledge the important contributions of the DevAI engineering, data science, product, and design teams, whose collaborative efforts made both Rovo Dev code reviewer and this publication possible.