How it all started

Early last year (2025), I spent a few hours manually improving mutation coverage for one class.

Fast-forward to our team's innovation week (Sept 2025), where AI was the focus. I began experimenting with Rovo Dev CLI, asking a simple question:

“Can an AI assistant read a mutation report and actually write the right tests for me?”

What began as a small experiment quickly turned into a reusable Mutation Coverage AI Assistant that multiple teams now use to reliably push projects towards 80%+ mutation coverage—without drowning in manual analysis and boilerplate tests.

This blog is the story of that journey: why we built it, how it works, what impact it had, and what we learned along the way.

Why we needed an AI solution

Improving mutation coverage is critical for test quality—but painful at scale.

Why mutation coverage is hard (especially at project level)

- For a single class, writing a few mutation-killing tests is manageable.

- For “x” classes across an entire project, it quickly turns into:

- Long hours understanding business logic in different modules.

- Repeatedly reading through dense mutation reports full of mutant jargon.

- Manually deciding which class/package to tackle next.

Why LLMs are a great fit here

We realized this problem is a sweet spot for LLMs:

- Low risk:

- If an LLM writes a wrong test, you see failures immediately.

- Developers can fix or discard them with little downside.

- High effort:

- Writing tests after the initial ones is often mundane.

- Understanding business logic at scale, and mapping it to tests, is time-consuming.

- Great at report analysis:

- Mutation reports are long and detailed.

- LLMs handle this kind of structured-but-verbose input surprisingly well, if guided correctly.

So we asked: What if, instead of prompting an LLM multiple times, we gave it a complete end-to-end workflow to follow?

Meet the Mutation Coverage AI Assistant

The Mutation Coverage AI Assistant is a set of workflow instructions that guide an LLM through the entire process of closing mutation coverage gaps for a project.

Instead of you doing everything manually, the assistant:

- Analyzes mutation reports for a project or package.

- Recommends which classes to target next.

- Writes tests aligned with your project’s test conventions.

- Validates whether those tests actually improve mutation coverage.

- Lets you stay in control at each critical step.

Mutation Coverage AI Assistant launched via Rovo Dev CLI

You can think of it as a Dev-in-the-loop AI copilot specifically tuned for improving mutation coverage.
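To make the "recommends which classes to target next" step concrete, here is a minimal sketch of one plausible ranking heuristic: classes with the most surviving mutants come first. This is an illustration under assumptions, not the assistant's actual logic; `ClassMutationStats` and `TargetRecommender` are hypothetical names, and the real workflow lets the LLM make this call from the mutation report.

```java
import java.util.Comparator;
import java.util.List;

/** Hypothetical per-class numbers pulled from a mutation report. */
record ClassMutationStats(String className, int killed, int total) {

    /** Mutants that no test currently detects. */
    int surviving() {
        return total - killed;
    }
}

final class TargetRecommender {

    private TargetRecommender() {}

    /** Orders classes by surviving mutants, highest first; ties are broken by class name. */
    static List<ClassMutationStats> rank(List<ClassMutationStats> stats) {
        return stats.stream()
                .sorted(Comparator.comparingInt(ClassMutationStats::surviving).reversed()
                        .thenComparing(ClassMutationStats::className))
                .toList();
    }

    public static void main(String[] args) {
        List<ClassMutationStats> ranked = rank(List.of(
                new ClassMutationStats("OrderValidator", 8, 10),
                new ClassMutationStats("PriceCalculator", 3, 10)));
        ranked.forEach(s -> System.out.println(s.className() + ": " + s.surviving() + " surviving"));
    }
}
```

A developer can still override the proposed order, which is the point of keeping a dev in the loop.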

How is this different from just using other AI tools or manual prompts?

Many of us have already tried:

- Manually prompting LLMs (“Generate tests for this class to kill xyz mutant”).

- Using /tests or similar commands in AI plugins within IDEs.

The Mutation Coverage AI Assistant is designed to go beyond that.

Comparison of approaches

| Solution | Manual Prompts to LLMs | Test tools in AI plugins (e.g., /tests) | Mutation Coverage AI Assistant (this solution) |
|---|---|---|---|
| Description | You manually craft prompts for each step to improve mutation coverage across a project. | /tests (or similar) auto-generates tests for a given class. | Load the AI assistant using Rovo Dev CLI and then provide inputs as requested. |
| How it addresses mutation coverage gap for a project | Easy to start, but every step requires new prompts. LLM behavior is unpredictable with no guided workflow, so time to close gaps can be high. | Generates tests, but has no awareness of mutation reports before/after. Tests may not meaningfully improve mutation coverage. | Follows a guided automation approach: generates mutation reports, recommends which class/package to target, and supports overrides. Designed to reduce total time to close project-wide gaps. |

Why it works across different projects

Adapts to different projects

The assistant is project-aware:

- It first auto-learns test conventions and mocking frameworks used in your project.

- It then follows those patterns when generating tests.

This means:

- You don’t need to hard-code per-project rules into the prompt.

- Tests feel “native” to the codebase instead of out-of-place LLM code.

Dev-in-the-loop AI Assistant (Guided Automation)

We deliberately chose Dev-in-the-loop over full autonomy.

Improving mutation coverage across projects owned by multiple teams requires human judgment at key steps:

- Prioritization:

- AI can propose which class/package to target based on the mutation report.

- But that might not align with business priorities or critical paths.

- Implementation details:

- The AI might not know about internal frameworks specific to the project.

- It may occasionally try to modify implementation classes or add noise without strict instructions.

If we had let the AI run fully autonomously across “x” classes:

- Unpredictable behavior could have created more clean-up work than manual improvements.

- It would be harder to recover from hallucinations or subtle mistakes.

With Dev-in-the-loop:

- The assistant proposes; you approve, adjust, or stop.

- Teams can iteratively refine prompts as they see AI mistakes in real PRs.

Auto-validation of tests

One of the most important lessons: test generation is not enough. You must check whether coverage actually improves.

The workflow explicitly instructs the LLM to:

- Implement tests.

- Rerun mutation tests.

- Verify whether mutation coverage % improved.

This auto-validation step:

- Catches cases where generated tests do not kill additional mutants.

- Dramatically reduces noise in AI‑generated tests and surfaces the most valuable cases.

- Reduces the burden on developers to manually correlate tests with coverage changes.
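The check at the heart of this step can be sketched in a few lines. This is a minimal illustration, assuming the killed and total mutant counts have already been read from the reports before and after the new tests; `MutationSummary` and `CoverageValidation` are hypothetical names, not part of the workflow itself.

```java
/** Hypothetical summary of one mutation run: killed mutants out of all generated mutants. */
record MutationSummary(int killed, int total) {

    MutationSummary {
        if (total <= 0 || killed < 0 || killed > total) {
            throw new IllegalArgumentException("expected total > 0 and 0 <= killed <= total");
        }
    }

    /** Mutation coverage as the percentage of mutants killed. */
    double coveragePercent() {
        return 100.0 * killed / total;
    }
}

final class CoverageValidation {

    private CoverageValidation() {}

    /** True only when mutation coverage % is strictly higher after the rerun. */
    static boolean improved(MutationSummary before, MutationSummary after) {
        return after.coveragePercent() > before.coveragePercent();
    }

    public static void main(String[] args) {
        MutationSummary before = new MutationSummary(56, 100);
        MutationSummary after = new MutationSummary(80, 100);
        System.out.println(before.coveragePercent() + "% -> " + after.coveragePercent()
                + "%, improved=" + improved(before, after));
    }
}
```

Tests that leave the percentage unchanged fail this check, which is how the workflow flags generated tests that add noise without killing more mutants.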

From experiment to real impact across teams

Early Adopters

We first used this assistant on a few selected projects to get early feedback on its usage.

Positives

- Easy to use.

- Much faster to improve mutation coverage than manual approaches.

Improvement areas

- Remove unnecessary comments.

- Avoid wildcard imports.

- Avoid redundant assertions.

Despite those rough edges, the impact was clear:

- We reached the 80% mutation coverage target on all of these projects with significantly less manual effort. Time savings reached 70% compared with making the same improvements manually.

Mutation coverage improvement data

| Project | Before (%) | After (%) |
|---|---|---|
| jira-project-a | 56 | 80 |
| jira-project-b | 70 | 88 |
| jira-project-c | 83 | 96 |
| jira-project-d | 71 | 80 |
| jira-project-e | 84 | 90 |
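For readers who prefer the table as percentage-point gains, the arithmetic can be sketched as follows. The before/after values are copied from the table; `CoverageGains` and its methods are hypothetical helper names used only for this illustration.

```java
import java.util.LinkedHashMap;
import java.util.Map;

final class CoverageGains {

    private CoverageGains() {}

    /** Before/after mutation coverage (%) per project, copied from the table above. */
    static Map<String, int[]> tableData() {
        Map<String, int[]> data = new LinkedHashMap<>();
        data.put("jira-project-a", new int[] {56, 80});
        data.put("jira-project-b", new int[] {70, 88});
        data.put("jira-project-c", new int[] {83, 96});
        data.put("jira-project-d", new int[] {71, 80});
        data.put("jira-project-e", new int[] {84, 90});
        return data;
    }

    /** Gain in percentage points between two coverage readings. */
    static int gain(int before, int after) {
        return after - before;
    }

    /** Mean gain in percentage points across all projects (0.0 for an empty map). */
    static double averageGain(Map<String, int[]> data) {
        return data.values().stream()
                .mapToInt(pair -> gain(pair[0], pair[1]))
                .average()
                .orElse(0.0);
    }

    public static void main(String[] args) {
        Map<String, int[]> data = tableData();
        data.forEach((project, pair) ->
                System.out.println(project + ": +" + gain(pair[0], pair[1]) + " points"));
        System.out.println("average: +" + averageGain(data) + " points");
    }
}
```

On the table's numbers this gives gains of 24, 18, 13, 9, and 6 points, an average of 14 points per project.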

Expanding to more projects

As confidence grew, we opened it up to more teams:

For a migration project in Jira with hundreds of classes, ~1500 mutants were killed using this solution, with significantly less time spent. This became a strong proof point that the solution could handle large projects.

Lessons Learned

1. Larger projects: High mutation report generation time

Problem

On large projects with many classes/packages, generating a mutation report for the entire project could take >10 minutes.

- If multiple developers wanted to work on the same project, all of them hit this bottleneck.

- Every run of the assistant required a fresh full-project report, slowing down feedback loops.

What we changed:

Enhanced the workflow to support package-level mutation analysis:

- When starting the assistant, devs now specify:

- Project name.

- Java package name (optional).

- The assistant:

- Runs mutation analysis only for that package.

- Generates reports much faster.

- Enables multiple devs to work on different packages in parallel.

This shift significantly improved adoption on large projects.

We also enabled multi-threading for faster mutation report generation.
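The effect of the optional package input can be sketched as below. This is a simplified illustration under assumptions: `AnalysisScope` is a hypothetical type, and the glob format is made up for the example rather than taken from any specific mutation testing tool.

```java
import java.util.Optional;

/** Hypothetical inputs collected when the assistant starts. */
record AnalysisScope(String project, Optional<String> javaPackage) {

    /** Glob of classes to mutate: classes under the given package, or everything if none is given. */
    String targetClassesGlob() {
        return javaPackage.map(pkg -> pkg + ".*").orElse("*");
    }

    public static void main(String[] args) {
        AnalysisScope whole = new AnalysisScope("jira-project-a", Optional.empty());
        AnalysisScope scoped = new AnalysisScope("jira-project-a", Optional.of("com.example.billing"));
        System.out.println(whole.targetClassesGlob());
        System.out.println(scoped.targetClassesGlob());
    }
}
```

Because each developer passes a different package, their runs mutate disjoint sets of classes and do not have to wait on one full-project report.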

2. LLM limitations: when instructions weren’t strict enough

Problem

Without crystal-clear instructions for the test implementation step, the assistant sometimes generated problematic changes:

- Attempted to modify implementation classes.

- Used wildcard imports.

- Left behind unused imports after editing tests.

- Added meaningless assertions, e.g., obj.equals(obj).

- Inserted noisy comments like:

===== NEW COMPREHENSIVE TESTS TO KILL REMAINING X MUTATIONS =====

What we did: tighten the workflow instructions

We updated the workflow to include explicit guidelines to avoid these mistakes.

Result:

- These issues were largely eliminated in subsequent runs.

- The assistant’s output became much more PR-ready.
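To see why a self-comparison such as obj.equals(obj) adds nothing, consider the sketch below. It is an illustration only: the discount logic is hypothetical, and the "mutant" is written by hand to stand in for what a mutation tool would generate.

```java
import java.util.function.IntUnaryOperator;

final class AssertionStrength {

    private AssertionStrength() {}

    /** Hypothetical production logic: 10% off, in whole cents. */
    static final IntUnaryOperator ORIGINAL = cents -> cents - cents / 10;

    /** Hand-written stand-in for a mutant that flips the subtraction to an addition. */
    static final IntUnaryOperator MUTANT = cents -> cents + cents / 10;

    /** Self-comparison: true for any implementation, so it can never kill a mutant. */
    static boolean weakCheck(IntUnaryOperator impl) {
        Integer result = impl.applyAsInt(1000);
        return result.equals(result);
    }

    /** Compares against the expected value, so the mutated logic fails it. */
    static boolean strongCheck(IntUnaryOperator impl) {
        return impl.applyAsInt(1000) == 900;
    }

    public static void main(String[] args) {
        System.out.println("weak check passes on mutant: " + weakCheck(MUTANT));
        System.out.println("strong check passes on mutant: " + strongCheck(MUTANT));
    }
}
```

The weak check passes on both versions, so the mutant survives. Only the check against an expected value tells them apart.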

3. Generic “kill mutants” instructions led to unwanted, redundant tests

Problem

With generic instructions like “Write tests to kill mutants, to improve mutation coverage”, the assistant sometimes generated excess tests to kill the remaining mutants.

This caused:

- Extra noise in PRs and increased review effort.

- Little added value once critical mutants were covered.

What we did: Added detailed, mutant-specific test-writing guidelines

To address this, we:

- Modified the workflow instructions to include detailed guidelines on how to kill each mutant type, rather than relying only on the generic “kill mutants” prompt. This included details about:

- The different types of mutants present in a mutation coverage report.

- How to write targeted tests for each mutant type.

Result:

- The AI started generating more focused, higher-quality tests.

- The number of unwanted or redundant tests reduced.
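As an illustration of what mutant-specific guidance buys, take a boundary mutant, where a comparison such as >= is changed to >. The example below is a sketch under assumptions: the post does not list the mutant types covered by the guidelines, the eligibility rule is hypothetical, and the mutant is written by hand.

```java
import java.util.function.IntPredicate;

final class BoundaryMutantDemo {

    private BoundaryMutantDemo() {}

    /** Hypothetical production rule: eligible from age 18 upward. */
    static final IntPredicate ORIGINAL = age -> age >= 18;

    /** Hand-written stand-in for a boundary mutant: >= replaced with >. */
    static final IntPredicate BOUNDARY_MUTANT = age -> age > 18;

    /** Inputs far from the boundary: original and mutant agree, so the mutant survives. */
    static boolean genericTestPasses(IntPredicate impl) {
        return impl.test(30) && !impl.test(5);
    }

    /** Inputs at the boundary (18 and 17): original and mutant disagree on 18. */
    static boolean targetedTestPasses(IntPredicate impl) {
        return impl.test(18) && !impl.test(17);
    }

    public static void main(String[] args) {
        System.out.println("generic test passes on mutant: " + genericTestPasses(BOUNDARY_MUTANT));
        System.out.println("targeted test passes on mutant: " + targetedTestPasses(BOUNDARY_MUTANT));
    }
}
```

One targeted test at the boundary kills the mutant, whereas any number of generic tests away from it would not. That is the kind of focus the mutant-specific guidelines aim for.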

4. Bloated classes: AI can’t fix design issues

Challenge

For classes with too many responsibilities:

- The assistant struggled to generate tests that significantly improved mutation coverage %.

- Even with good prompts, coverage improvements plateaued.

Learning

Observation:

- In well-structured projects (clear separation of responsibilities), the assistant could easily push mutation coverage above 80%.

- In projects with bloated classes, coverage often stalled around 60%.

Learning:

- Developers still need to refactor these classes to unlock the full benefit of the assistant.

5. Enhancements around the clock: prompts as evolving artifacts

Challenge

The first version of the prompt instructions was far from perfect.

- As more developers used the assistant, they uncovered edge cases and issues.

- Without an easy way to evolve the prompts collaboratively, the assistant would have stagnated.

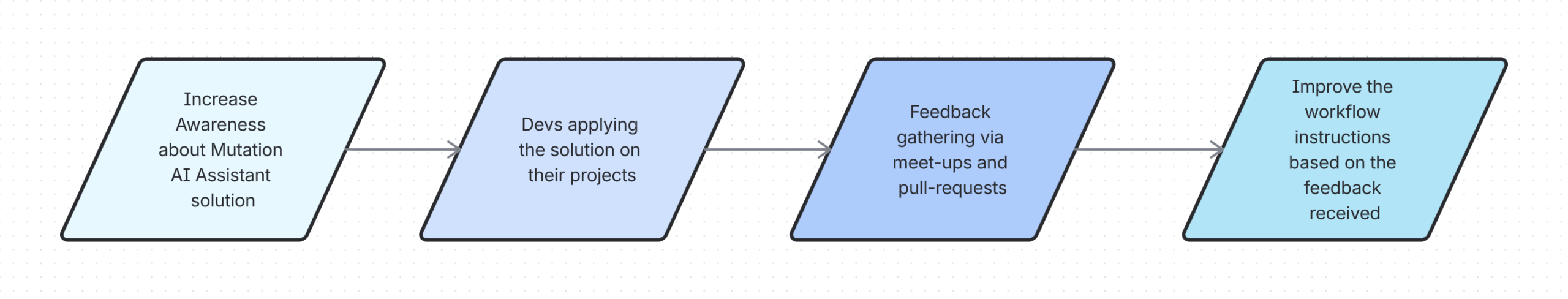

What we did: continuous improvement

- Feedback channels:

- Regular team meet-ups.

- PR reviews of AI-generated tests.

- Implementation:

- Hosted the prompt instructions in a Bitbucket repository.

- Made it easy for teams to propose and contribute improvements.

This collaborative model turned the assistant into a constantly improving system that keeps getting better with real-world use.

6. Team collaboration

This became a great example of cross-team AI collaboration:

- Mutation champs from each team raised awareness of the AI-assisted solution.

- They adopted the assistant on their own projects.

- They shared feedback and contributed improvements that helped every team in later runs.

Current Challenges

Generating more tests than required

We addressed this by adding specific guidelines for killing each mutant type. Initial results are promising but require more usage to confirm.

LLM model selection

Model choice could have an impact on test quality. Developers should know which model is in use, and override defaults when needed to match their project’s needs.

Pragmatic usage of AI tools

Developers must recognize when to stop if the AI session is unproductive. Due to LLM limitations, the AI tool may sometimes stop writing tests after miscalculating mutation coverage %. It may also get stuck in an endless loop, writing tests without improving coverage. Restarting the AI session resets the LLM's context and can help. If the problem persists, the workflow instructions themselves need fixing.

What’s next?

As adoption grows and the prompt instructions mature, this could naturally extend to more autonomous AI agent use cases. For now, we’re intentionally keeping Dev-in-the-loop to ensure that:

- Developers stay in control of the changes.

- AI boosts productivity without causing extra cleanup from mistakes.

Credits

- Huge thanks to everyone who helped shape and scale this solution — from designing the initial workflow and refining prompts to hardening it for use across multiple projects.

- Grateful to the early adopters who trusted the assistant on their projects and shared candid feedback that helped us extend the approach beyond the initial pilot.

- Thanks to the Mutation Champs who spread awareness of this solution, encouraged adoption in their teams, and continuously surfaced insights from day-to-day usage.