“The future is already here – it’s just not evenly distributed.”

William Gibson said this decades ago, but it’s very much where we are with AI today.

AI tools for everything from code generation to video editing have crash-landed into our workflows. Autonomous agents are just as capable as interns (or are they?).

The race is on to become AI-native. But for many, it feels more like turbulence than a rocket launch. We’re all trying to harness the new product superpower – but how do we know if it’s working?!

We don’t have any quick fixes. But through many in-depth conversations with product leaders, we have uncovered some patterns – some that drive us forward, and some that hold us back.

Today, we’re unpacking how leaders and teams can thrive in this uncertain era. That starts with understanding behavior: what it means to be AI fluent, and how to avoid surface‑level ‘AI theater’ that only makes change look transformative without delivering real impact.

We’re featuring voices from our podcast, Product in Practice, where we dive deep with product leaders experimenting in real workflows.

What is AI theater?

As with any new technology wave, a few familiar traps are already showing up in how teams approach AI. Watch out for these anti-patterns that hold back progress:

- Tool tourism: Collecting logins like souvenirs, without changing how work actually gets done. Think “we tried this one, and that one too.” The illusion of progress masks the absence of impact.

- Automation theater: Glossy demos that wow in a team meeting but collapse when asked to live inside the backlog or the customer workflow. They look transformative, but they don’t survive contact with everyday reality.

- Prompt gatekeeping: Withholding knowledge, prompts, or workflows to protect personal value. This hoarding of tricks slows down collective learning and keeps wins from compounding across the team.

These challenges are symptoms of a deeper shift: the transition from individual craft to organizational capability.

That shift can be destabilizing, but also profoundly liberating, depending on how leaders hold it. That’s why leaders need a shared vision to guide their teams. Enter AI fluency.

What is AI fluency?

Fluency lives between these extremes of problematic behavior.

Fluency doesn’t mean you can’t try new tools, launch snazzy demos, or experiment on your own. It means approaching AI with grounded curiosity: trying with intent, sharing what you learn, and pointing experiments at outcomes that matter.

AI fluency isn’t technical mastery. It’s the practical confidence to use AI to think, make, decide, and know when not to. If proficiency is “I can execute tasks,” fluency is “I can converse, critique, and compose with this medium.”

AI fluent teams share a few traits:

- They ask better questions of AI, moving from “do this task” to “help me reason about options,” “surface trade‑offs,” or “generate counter‑examples.”

- They understand limits and biases, treating outputs as proposals to be interrogated, not truths to be obeyed.

- They integrate outputs into real workflows, connecting drafts, analyses, and prototypes to the tools and rituals where work actually moves forward.

- They normalize experimentation. Trying, failing, and learning are part of the operating system, not extracurriculars.

But there’s a catch – there’s no easy path to get there. Not every experiment moves teams forward, and that’s OK. Some patterns are useful, others are distractions. Leaders must be prepared to support and make space for all of them.

“Leaders can’t shortcut fluency,” says Ravi Mehta. “It’s not about buying a tool; it’s about building confidence, one workflow at a time.”

🧪 The Antidote: tips and frameworks for AI success without the drama

Access, expectation, challenge

Ravi, Product Advisor and former CPO at Tinder, suggests that AI fluency depends less on individual skill and more on the culture leaders create around it. He points to three levers that shape that culture.

Ravi starts with access. He has watched teams stall when experimentation requires workarounds or shadow logins. “If every experiment needs a procurement request, people just give up,” he says. The easier it is to reach for AI inside everyday tools like Slack, Figma, and Jira, the more likely experiments are to stick.

Next is expectation. Leaders send a powerful signal just by asking the right questions. “The fastest way to show that AI belongs in the workflow is to bring it up,” says Ravi. When managers regularly ask, “How did AI help here?” or “What could we try with AI next time?”, it frames AI use as a norm rather than a novelty.

The third lever is challenge. Ravi encourages leaders to pose open-ended questions: “How could we do this faster with AI?” or “What part of this workflow could we reimagine?” He stresses the importance of recognizing effort as well as outcomes.

“Fluency grows when people feel safe to share the half-finished attempts. That’s how you discover the surprising wins.”

Ravi Mehta

Ravi’s model is less a checklist and more a set of cultural levers leaders can keep pulling. Together, they create a rhythm in which experimentation feels normal and confidence has room to compound.

Takeaway for leaders

To accelerate fluency, make AI easy to reach, set the expectation it will be used, and keep challenging teams to stretch.

The 3Ps: People, process, platform

Kene Anoliefo has led product and design at companies like Google, Spotify, and Netflix, and now focuses on helping teams thrive in the AI era.

“The moment someone realizes their value is in enabling ten others, the team moves faster.”

Kene Anoliefo

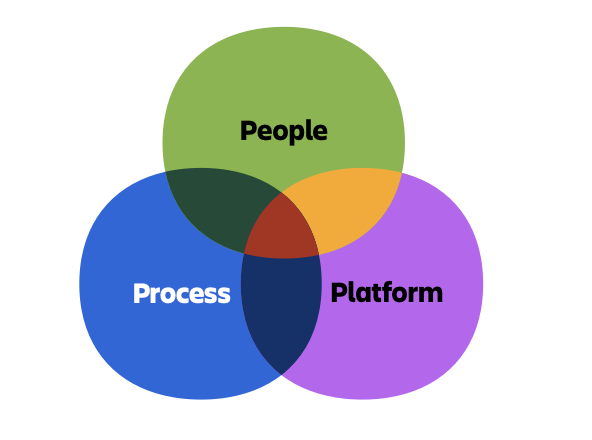

Kene suggests thinking about adoption through the 3Ps:

People: For many designers, PMs, and engineers, the quiet question is, “Who am I if AI does this part of my craft?” Kene invites a shift from gatekeeper (guarding knowledge) to architect (designing systems others can use). In practice, that might mean documenting a workflow, recording a five-minute Loom, or pairing with a peer to replicate a result.

Process: AI changes the speed and shape of work, which means quality norms can’t stay static. “Is 75% quality acceptable if it’s 50% faster? That’s a leadership call,” Kene says. Make the trade-offs explicit so speed doesn’t erode trust and perfectionism doesn’t stall momentum.

Platform: Tools are tempting, but Kene warns against starting there: “People reach for platforms first. But if you don’t align identity and process, you end up with expensive shelfware.” Platforms should amplify strategy, not precede it. She advises leaders to resist the gravitational pull of shiny tools until the organization knows how it wants to use them.

Kene stresses that the 3Ps are an interdependent system.

“When adoption stalls, it’s usually because one of the 3Ps is underfed. Maybe people don’t feel safe, process norms are fuzzy, or the platform landed before the ground was ready. Diagnose that, and fluency starts to grow again.”

Kene Anoliefo

Takeaway for leaders

Recognize new role models on your team. Celebrate architects who enable many, rather than gatekeepers who guard a few tricks.

Incentivize sharing over hoarding

Gatekeeping slows the compounding gains of AI. What if your performance rubric explicitly rewarded “architect” behaviors, like those described in Kene’s 3Ps framework?

Gatekeeping rarely starts maliciously – it usually begins with people trying to defend their value in murky territory. The leader’s job is to change the incentive ladder so that the most rewarded identity is the person who documents, demos, and teaches.

Try offering small, visible rewards, like recognition in team meetings or a tip callout in your internal newsletter. Over time, these can build a culture of learning and change how your team views status.

Measure adoption progress

Beware of vanity metrics on your AI journey. Some numbers look impressive, like total prompts run or tokens consumed, but don’t connect to customer value or team learning.

Measurement should clarify, not police. The point is to see whether fluency is changing how your organization learns and ships. Treat metrics as conversation starters: a way to notice what to amplify or adjust.

Start with your baseline. Then, blend leading and lagging indicators.

Before a big AI push, capture a baseline. How many people are using AI weekly, where does work slow down, how consistent is quality? Even a rough baseline makes change visible later.

Leading indicators tell you if behavior is changing:

- Fluency: Track the share of the team that uses AI weekly for core tasks, the number of shared workflows in circulation, and the spread of contributions (is it the same three enthusiasts, or is the circle widening?). Look for narrative signals too: how do people describe the value? Speed, clarity, exploration?

- Culture: Use short pulse surveys to sense anxiety, confidence, and psychological safety around AI. You might ask people to rate how much they agree with a statement like, “I feel comfortable sharing an AI experiment that didn’t work.”
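If your team logs AI usage somewhere, the two quantitative fluency signals above can be computed in a few lines. This is a minimal sketch, assuming you already have a hypothetical list of team members, a set of people who used AI for a core task this week, and a list of authors for each shared workflow; all names and numbers are illustrative, not real data.

```python
from collections import Counter

# Hypothetical inputs: names and counts are illustrative only.
team = ["ana", "ben", "chen", "dev", "eli", "fay", "gus", "hana"]
weekly_ai_users = {"ana", "ben", "chen", "fay", "hana"}
# One entry per workflow added to the shared library, recording its author.
shared_workflow_authors = ["ana", "ana", "ben", "ana", "chen", "ben", "hana"]


def weekly_usage_share(team, users):
    """Fraction of the team that used AI for a core task this week."""
    return len(users & set(team)) / len(team)


def contribution_spread(authors, top_n=3):
    """Return (number of distinct contributors, share of workflows from the top_n authors)."""
    counts = Counter(authors)
    top = sum(count for _, count in counts.most_common(top_n))
    return len(counts), top / len(authors)


usage = weekly_usage_share(team, weekly_ai_users)
contributors, top_share = contribution_spread(shared_workflow_authors)
print(f"Weekly AI usage: {usage:.0%} of the team")
print(f"Shared workflows: {len(shared_workflow_authors)} from {contributors} people")
print(f"Share from top 3 contributors: {top_share:.0%}")
```

A falling "top 3" share over several months suggests contributions are broadening beyond the original enthusiasts; a rising one is a prompt for a conversation, not a verdict.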

Lagging indicators tell you if outcomes are improving:

- Velocity: Watch cycle times on repeatable tasks (spec drafts, QA summaries, weekly updates). You’re not chasing speed at any cost; you’re checking whether reclaimed time is being reinvested in higher-value work.

- Quality: Check whether outputs match product strategy and customer truths. Does the “voice of the product” (the tone, style, and perspective that make it recognizable) hold steady when AI is in the loop? Because quality is measured after the fact, you need safeguards in place: context libraries to anchor outputs, and light reviews to catch drift early.
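For velocity, the comparison that matters is against the baseline you captured before the AI push. Here is a minimal sketch, assuming hypothetical cycle times in hours for one repeatable task (such as spec drafts); the median is used so a single unusually slow or fast task doesn't swing the result.

```python
from statistics import median

# Hypothetical cycle times (hours) for one repeatable task, e.g. spec drafts.
baseline_hours = [10, 12, 9, 14, 11]  # captured before the AI push
current_hours = [7, 8, 9, 6, 10]      # latest review period


def cycle_time_change(baseline, current):
    """Percentage change in median cycle time versus the baseline (negative means faster)."""
    before, after = median(baseline), median(current)
    return (after - before) / before * 100


change = cycle_time_change(baseline_hours, current_hours)
print(f"Median cycle time change: {change:+.1f}%")
```

A faster median on its own says nothing about whether quality held steady, so read it alongside the quality checks rather than as a standalone win.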

Cadence matters. Review indicators monthly to steer behavior, and quarterly to make bigger calls, like which pilots graduate into ongoing use.

Finally, always share what you learn. Fluency spreads faster when people can see the story their data tells.

Build context libraries that make quality scalable

A context library is a living set of references that both people and AI can draw on to keep quality consistent. The easier it is to find and use context, the more consistently teams produce high-quality work, and the faster fluency grows.

Capture product strategy, design principles, domain language, and customer truths in shared documents, and build processes to make sure they stay updated. The goal is to make implicit goals, norms, and standards explicit, so that everyone benefits.

Ironically, standardizing quality can also help organizations stay differentiated. Without a clearly codified vision, teams risk converging toward sameness. Elena Verna, Head of Growth at Lovable, explains: “If everyone leans on AI averages, we all end up building the same product. The human job is to set a distinct destination.”

Go deeper on AI fluency

We have so much more to say about this seismic shift in how we work, collaborate, and build great products together.

In our new ebook, AI fluency: The new product superpower, we’ve gathered even more insights from these experts on how AI is changing our roles and evolving expectations – plus practical advice on how to adapt.

Download the guide for free and continue your AI fluency journey.