If you’re a PM or PMM, your research cycle probably looks something like this:

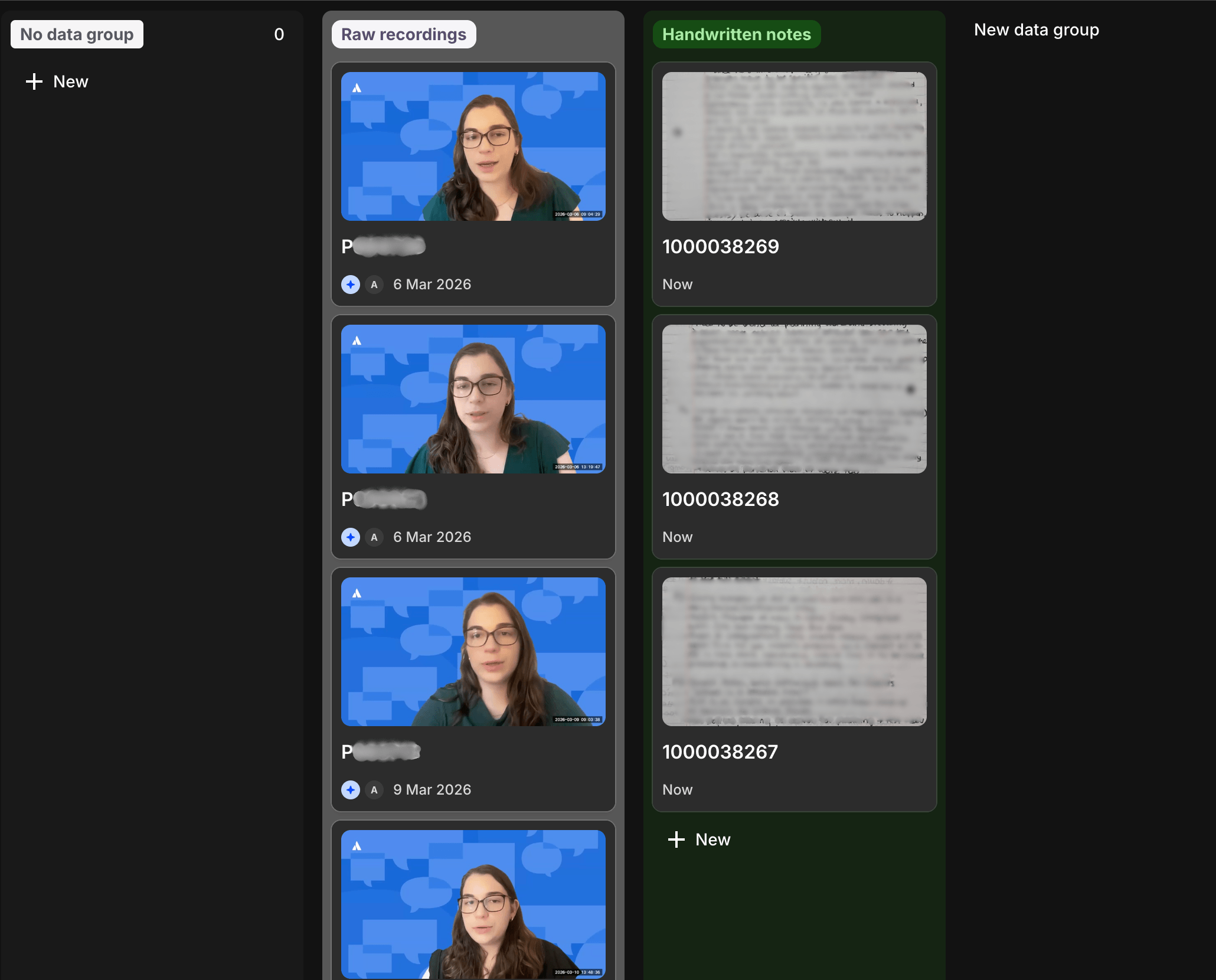

- Interviews and surveys land in a tool like Dovetail

- You promise yourself you’ll “just take a day” to organize everything

- A week later, you’re still tagging, finding quotes, and rewriting bullets for a deck

- By the time your findings are polished enough to share, the moment to act is already closing

We recently ran this entire cycle asynchronously in a single afternoon and ended up with an interactive, stakeholder-ready research report. The goal was to change how we spent our time and to see what actually changes when AI is part of the whole research workflow, from raw interviews to a report that stakeholders will read and trust.

The study we ran

We ran a research study to talk to customers directly about how they manage work today. We’d already used AI to speed up market research, persona work, and planning from weeks to days. The next test: could we do the same for primary research synthesis, without losing nuance?

After we spoke to customers, our interview recordings were ready in Dovetail. The strategic context, including our research plan, customer hypotheses, and competitor positioning, was already documented in Confluence. The question wasn’t “Can AI summarize our interviews?”

It was: Can AI help us connect all of this fast enough to inform real decisions, while keeping humans in charge of judgment?

Step 1: Connect your research data directly

The first unlock was connecting tools instead of copy‑pasting between them. Using the Dovetail MCP server, we let Rovo Dev pull:

- Full interview transcripts

- Existing highlights

- Tags and themes we’d already applied

What normally would have been two or three days of transcript review was available in minutes.

For teams, this step is simple but important: AI works best when it’s plugged into the system of work you already use.

Step 2: Give AI strategic context, not just your transcripts

This is where most teams underuse AI.

A generic prompt like “summarize these interviews” will give you…a summary. It’s rarely what stakeholders need.

Instead, Rovo Dev got the full strategic context before touching a single transcript:

- Our research plan and discussion guide

- The specific hypotheses we were testing about our customers

- Competitor positioning and what we already believed about the market

With that context, the synthesis looked completely different. Rather than a list of “things users said,” Rovo Dev:

- Mapped findings directly against our hypotheses

- Flagged which hypotheses looked supported, challenged, or unresolved

- Pulled quotes and patterns as evidence for each claim

- Caught validation signals that are easy to miss in a manual pass

- Highlighted gaps we hadn’t probed deeply enough

The human layer stayed with us: Which patterns matter? What should we cut? Where are we still not confident? But the raw material for those decisions arrived in hours rather than after a week of back-and-forth.
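If it helps to picture the shape of this step, here is a minimal Python sketch of "context first, then synthesis." It is not how Rovo Dev works internally. Every name in it (`Hypothesis`, `Evidence`, `Status`, `build_prompt`) is hypothetical, and the simple majority rule in `status()` is an assumption made for illustration only.

```python
from dataclasses import dataclass, field
from enum import Enum


class Status(Enum):
    SUPPORTED = "supported"
    CHALLENGED = "challenged"
    UNRESOLVED = "unresolved"


@dataclass
class Evidence:
    participant: str  # hypothetical participant label, e.g. "P3"
    quote: str
    supports: bool    # True if the quote backs the hypothesis, False if it cuts against it


@dataclass
class Hypothesis:
    statement: str
    evidence: list[Evidence] = field(default_factory=list)

    def status(self) -> Status:
        """First-pass label from the balance of evidence (assumed majority rule).

        A human still reviews and can overrule this label.
        """
        backing = sum(1 for e in self.evidence if e.supports)
        against = len(self.evidence) - backing
        if backing == against:  # a tie, including the no-evidence case
            return Status.UNRESOLVED
        return Status.SUPPORTED if backing > against else Status.CHALLENGED


def build_prompt(research_plan: str, hypotheses: list[Hypothesis], positioning: str) -> str:
    """Place strategic context ahead of the synthesis request."""
    numbered = "\n".join(
        f"H{i}: {h.statement}" for i, h in enumerate(hypotheses, start=1)
    )
    return (
        f"Research plan:\n{research_plan}\n\n"
        f"Hypotheses under test:\n{numbered}\n\n"
        f"Competitor positioning:\n{positioning}\n\n"
        "For each hypothesis, say whether the interviews support, challenge, "
        "or leave it unresolved, and cite quotes as evidence."
    )
```

The point of the structure is that every claim carries its quotes with it, so a reviewer can check the evidence behind a "supported" label instead of taking it on trust.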

Step 3: Review in Confluence, refine in real time

Once Rovo Dev generated the first draft, we moved into a familiar Atlassian rhythm: collaboration and feedback in Confluence. And we discovered new benefits in having Rovo write that first draft:

- We critiqued everything from strategy and positioning to depth of interview evidence

- We felt free to be hard on the work without worrying about being hard on a writer

- Our level of pressure-testing made the final insights much sharper

The review process surfaced real issues, like overly confident framing for a relatively small sample, places where the tone undersold a genuine finding, and nuance from handwritten interview notes (we added photos of them to our prompts!) that hadn’t made it into the synthesis.

Once we’d left all our feedback, Rovo responded in seconds, which meant we could keep momentum:

Comments → revision → another pass of human judgment, all in the same afternoon.

For your team, this is the shift: AI becomes the fast rewriter and formatter. You stay the editor, setting the bar for quality and accuracy.

Step 4: Restructure for your audience

Our first AI-assisted draft was comprehensive but looked like most working docs:

- Raw quotes mixed with to‑dos

- Insights buried under detailed notes

- No clear path for an exec skimming between meetings

We pulled up a polished research report from a previous project as a reference template and asked Rovo Dev to restructure the doc into something stakeholders could actually use:

- Research brief – method, participants, strategic context

- Key themes – five scannable headlines

- Numbered insights – each with “what users experience / need / say”

- Hypothesis validation – how findings mapped back to our questions

- Experiment recommendations – prioritized bets with hypotheses and success criteria

- Implications – actionable takeaways for stakeholders

Reformatting a 5,000-word working document into a polished report usually takes half a day of careful editing. This took about 30 minutes of guided restructuring.
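One way to think about "restructure into a template" is as a fixed skeleton that content gets poured into. The sketch below is a rough Python illustration under that assumption, not the process we actually ran. The section names come from the list above; `render_report`, `unplaced`, and the `[TODO: ...]` placeholder are hypothetical.

```python
# Section order and guidance text mirror the report structure listed above.
REPORT_SECTIONS = [
    ("Research brief", "method, participants, strategic context"),
    ("Key themes", "five scannable headlines"),
    ("Numbered insights", "what users experience / need / say"),
    ("Hypothesis validation", "how findings mapped back to our questions"),
    ("Experiment recommendations", "prioritized bets with hypotheses and success criteria"),
    ("Implications", "actionable takeaways for stakeholders"),
]


def render_report(content: dict[str, str]) -> str:
    """Lay out content in the fixed section order and flag empty sections."""
    parts = []
    for title, guidance in REPORT_SECTIONS:
        body = content.get(title, "").strip() or f"[TODO: {guidance}]"
        parts.append(f"{title}\n{body}")
    return "\n\n".join(parts)


def unplaced(content: dict[str, str]) -> list[str]:
    """Return working-doc keys that match no section and need a human decision."""
    known = {title for title, _ in REPORT_SECTIONS}
    return [key for key in content if key not in known]
```

Deciding the skeleton before restructuring makes two things visible at a glance: sections that are still empty, and working-doc material that has no home in the report yet.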

Step 5: Make the research travel across your company

A detailed research report no one reads is just a very organized archive. We used the report as our single source of truth and then asked Rovo Dev to help us spin it into:

- A tight, eye-catching executive summary

- A few Slack posts tailored to different audiences and channels

Those Slack posts reached teams who would never have gone looking for the research report on their own. They sparked:

- Follow‑up conversations from stakeholders with extra context

- Links to related projects we could plug into

- A feedback loop that made the research more valuable than if we’d quietly filed it away

This is where AI can scale your influence: you can ship more versions of the same insight, faster, to more of the right people.

What AI actually did well

In this workflow, AI wasn’t “doing research for us.” It was:

- Structuring and reformatting. Taking a messy working doc and applying a consistent template, without losing content, is not glamorous work. It’s also not work that requires human judgment. AI did it well and fast.

- Connecting quotes to frameworks. When given clear strategic context, Rovo Dev mapped user evidence to hypotheses more thoroughly than a single person reviewing transcripts over two days would. It caught patterns we hadn’t considered.

- Revision from specific feedback. Taking Confluence comments and producing targeted rewrites, not starting from scratch and not rewriting sections that weren’t flagged, was accurate and fast.

Where humans still did the hard work

- Deciding what matters. AI surfaced more than 20 potential insights. Choosing the ones that told the right story required judgment about strategy, audience, and what would actually move decisions. That was entirely human.

- Calibrating confidence. LLMs tend to sound confident even when the evidence is thin. Knowing when a finding was “suggestive” versus “validated,” especially with a smaller sample, required someone who understood the research design and its limits. A small set of interviews can reveal a lot, but it can also mislead if you don’t consider the context.

- Turning findings into implications. AI can summarize what users said. Deciding what to do about it, which bets to make, what to deprioritize, how to sequence experiments, and what messaging to go to market with is still where PM and PMM judgment lives. The research report is an input to that decision, not the decision itself.
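To make the “suggestive” versus “validated” distinction concrete, here is a small Python sketch of a first-pass labeling rule. The thresholds (`min_sample`, `min_share`) are illustrative assumptions, not numbers from our study, and a rule like this only flags findings for review. It does not replace the judgment described above.

```python
def confidence_label(
    supporting: int,
    total_interviews: int,
    min_sample: int = 8,     # assumed minimum sample size, illustrative only
    min_share: float = 0.6,  # assumed minimum share of interviews, illustrative only
) -> str:
    """Return "validated" or "suggestive" for a finding.

    A finding counts as "validated" only when the sample is large enough
    and a large enough share of interviews support it.
    """
    if total_interviews <= 0 or not 0 <= supporting <= total_interviews:
        raise ValueError("supporting must be between 0 and total_interviews (> 0)")
    share = supporting / total_interviews
    if total_interviews >= min_sample and share >= min_share:
        return "validated"
    return "suggestive"
```

With these assumed defaults, four supporters out of five interviews still comes back as "suggestive" because the sample is small, which is exactly the kind of overconfident framing our review caught.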

The real shift: how your time gets spent

In a traditional cycle, PMs and PMMs often spend:

- ~70% of their “research time” on gathering, reading, tagging, and formatting

- ~30% on actual thinking, alignment, and decisions

With this workflow, our split looked closer to:

- 30% gathering and synthesizing (with AI doing the heavy lift, humans guiding and correcting)

- 70% thinking and deciding (the work that actually requires experience and context)

We didn’t skip steps. We just gave the low‑leverage parts to AI, which doesn’t get tired of reformatting.

How to try this with your next study

If you want to apply this pattern on your own team, here’s a simple checklist:

- Keep your research in tools your AI can see

Connect interviews (e.g., Dovetail) and context (e.g., Confluence, Jira) rather than working off exported docs.

- Start by feeding AI your brief instead of just your transcripts

Share your research plan, hypotheses, and relevant positioning before you ask for synthesis.

- Treat AI as a fast first drafter, not a decision-maker

Let it propose structures, insights, and rewrites. Reserve final judgment for humans.

- Use templates for reports and updates

Decide your report structure upfront and have AI reshape content into that skeleton.

- Plan for distribution early

Assume you’ll need: a full report, an exec summary, a few Slack posts, and maybe a blog or deck. Let AI help with all of them from the same core source.

Why this matters in the AI era of teamwork

The next era of work isn’t about producing more output with AI. The real advantage is better alignment, sharper judgment, and more confident decisions.

Across our research and competitor analysis, a few patterns are clear:

- Teamwork is the differentiator. AI is most powerful when it connects people, tools, and context.

- Trust and accountability matter. The winning workflows are explicit about how teams validate AI output and who owns the final call.

- Structure wins. Skimmable, well-framed reports serve humans and make it easier for AI and search to surface your work accurately.

AI won’t replace the hard parts of research or product decisions. But when you design your workflow and content around human–AI collaboration, you get more leverage on the work that actually moves the needle.