The different types of software testing

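Compare different types of software testing, such as unit testing, integration testing, functional testing, acceptance testing, and more!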

Sten Pittet

Contributing Writer

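There are many different types of testing you can use to make sure that changes to your code work as expected. Not all testing is equal, though, and here we will look at how some testing practices differ.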

Manual vs. automated testing

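It is important to distinguish between manual and automated tests. Manual testing is done in person, by clicking through the application or by interacting with the software and APIs using the appropriate tooling. This is very expensive, since it requires someone to set up an environment and execute the tests themselves, and it is prone to human error, because the tester might make typos or skip steps in the test script.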

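Automated tests, on the other hand, are performed by a machine that executes a test script written in advance. These tests can vary in complexity, from checking a single method in a class to making sure that performing a sequence of complex actions in the UI consistently produces the same results. Automated testing is more robust and reliable than manual testing, but the quality of your automated tests depends on how well your test scripts are written. If you're just getting started with testing, you can read our continuous integration tutorial to help you build your first test suite. Looking for more testing tools? Check out these DevOps testing tutorials.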

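Automated testing is a key component of continuous integration and continuous delivery, and it's a great way to scale your QA process as you add new features to your application. However, as we will see in this guide, there is still value in doing some manual testing in the form of what is called exploratory testing.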


The different types of tests

1. Unit tests

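Unit tests are very low level and close to the source code of an application. They consist of testing individual methods and functions of the classes, components, or modules used by your software. Unit tests are generally quite cheap to automate and can be run very quickly by a continuous integration server.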
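
As a minimal sketch (in Python with the standard-library `unittest` module, since the article does not prescribe a language), a unit test exercises one function in isolation, with no database or network involved. The `apply_discount` function is hypothetical and stands in for any small piece of your own code.

```python
import unittest


def apply_discount(price: float, percent: float) -> float:
    """Hypothetical function under test: reduce a price by a percentage, rounded to cents."""
    if not 0 <= percent <= 100:
        raise ValueError("percent must be between 0 and 100")
    return round(price * (1 - percent / 100), 2)


class ApplyDiscountTest(unittest.TestCase):
    # Each test checks one behaviour of a single function; nothing else needs to be running.
    def test_regular_discount(self):
        self.assertEqual(apply_discount(200.0, 25), 150.0)

    def test_zero_discount_keeps_price(self):
        self.assertEqual(apply_discount(99.99, 0), 99.99)

    def test_rejects_out_of_range_percent(self):
        with self.assertRaises(ValueError):
            apply_discount(10.0, 150)
```

Saved in a file such as `test_discount.py` (a hypothetical name), these tests can be run from a terminal with `python -m unittest test_discount`, which is what makes them cheap for a CI server to execute on every change.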

2. Integration tests

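Integration tests verify that the different modules or services used by your application work well together. For example, an integration test can check the interaction with a database or make sure that microservices work together as expected. These types of tests are more expensive to run, because they require multiple parts of the application to be up and running.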
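
The sketch below, again with hypothetical names, shows the database case: `ProductRepository` is a small data-access class, and the test checks that it can write to and read back from a real SQL database. An in-memory SQLite database (Python's built-in `sqlite3` module) is assumed so the example runs anywhere; a real integration test would usually target the same kind of database the application uses in production.

```python
import sqlite3
import unittest


class ProductRepository:
    """Hypothetical data-access module that talks to a SQL database."""

    def __init__(self, conn: sqlite3.Connection):
        self.conn = conn
        self.conn.execute(
            "CREATE TABLE IF NOT EXISTS products (sku TEXT PRIMARY KEY, price REAL NOT NULL)"
        )

    def add(self, sku: str, price: float) -> None:
        self.conn.execute("INSERT INTO products (sku, price) VALUES (?, ?)", (sku, price))
        self.conn.commit()

    def find_price(self, sku: str):
        row = self.conn.execute("SELECT price FROM products WHERE sku = ?", (sku,)).fetchone()
        return None if row is None else row[0]


class ProductRepositoryIntegrationTest(unittest.TestCase):
    # Unlike a unit test, this one needs a second component (the database) to be running.
    def setUp(self):
        # Assumption for the sketch: an in-memory SQLite database stands in for the real one.
        self.conn = sqlite3.connect(":memory:")
        self.repo = ProductRepository(self.conn)

    def tearDown(self):
        self.conn.close()

    def test_round_trip_through_database(self):
        self.repo.add("SKU-1", 19.5)
        # Only checks that the code and the database cooperate: what was written can be read back.
        self.assertEqual(self.repo.find_price("SKU-1"), 19.5)

    def test_missing_row_returns_none(self):
        self.assertIsNone(self.repo.find_price("unknown"))
```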

3. Functional tests

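Functional tests focus on the business requirements of an application. They only verify the output of an action and do not check the intermediate states of the system while that action is performed.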

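There is sometimes confusion between integration tests and functional tests, because both require multiple components to interact with each other. The difference is that an integration test may simply verify that you can query the database, while a functional test expects to get a specific value from the database, as defined by the product requirements.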
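
To make the distinction concrete, here is a hypothetical functional test. The requirement it encodes (a flat 5.00 shipping fee, waived for orders of 100 or more) is invented for illustration. The test calls the public `place_order` action and compares the result with the values the requirement dictates, without inspecting any intermediate state; an integration test would only check that the product table can be queried.

```python
import sqlite3
import unittest

SHIPPING_FEE = 5.00          # hypothetical requirement: flat shipping fee...
FREE_SHIPPING_FROM = 100.00  # ...waived for orders of 100 or more


class OrderService:
    """Hypothetical application service that prices orders from a product table."""

    def __init__(self, conn: sqlite3.Connection):
        self.conn = conn

    def place_order(self, skus):
        subtotal = 0.0
        for sku in skus:
            (price,) = self.conn.execute(
                "SELECT price FROM products WHERE sku = ?", (sku,)
            ).fetchone()
            subtotal += price
        shipping = 0.0 if subtotal >= FREE_SHIPPING_FROM else SHIPPING_FEE
        return round(subtotal + shipping, 2)


class CheckoutFunctionalTest(unittest.TestCase):
    def setUp(self):
        self.conn = sqlite3.connect(":memory:")
        self.conn.execute("CREATE TABLE products (sku TEXT PRIMARY KEY, price REAL)")
        self.conn.executemany(
            "INSERT INTO products VALUES (?, ?)", [("BOOK", 40.0), ("LAMP", 60.0)]
        )
        self.service = OrderService(self.conn)

    def tearDown(self):
        self.conn.close()

    # Assert the business outcome the requirement specifies, not how it was computed.
    def test_small_order_pays_shipping(self):
        self.assertEqual(self.service.place_order(["BOOK"]), 45.0)

    def test_order_of_100_ships_free(self):
        self.assertEqual(self.service.place_order(["BOOK", "LAMP"]), 100.0)
```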

4. End-to-end tests

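End-to-end testing replicates a user's behavior with the software in a complete application environment. It verifies that various user flows work as expected; these can be as simple as loading a web page or logging in, or much more complex scenarios such as verifying email notifications, online payments, and so on.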

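End-to-end tests are very useful, but they are expensive to perform and can be hard to maintain once automated. It is recommended to have a few key end-to-end tests and to rely more on lower-level types of testing (unit and integration tests) so that you can quickly identify breaking changes.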
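
The following is a minimal sketch of an automated end-to-end test for the "load a web page, then log in" flow. It assumes a tiny hypothetical application (`DemoApp`) served by Python's built-in `http.server` on a free local port, and drives it over real HTTP with `urllib.request`. The endpoints and credentials are made up. Many real end-to-end suites drive an actual browser instead, which is part of why they are slower and harder to maintain than lower-level tests.

```python
import json
import threading
import unittest
import urllib.request
from http.server import BaseHTTPRequestHandler, ThreadingHTTPServer


class DemoApp(BaseHTTPRequestHandler):
    """Hypothetical web app: a home page at "/" and a JSON login endpoint at "/login"."""

    def do_GET(self):
        if self.path == "/":
            self._reply(200, b"<h1>Welcome</h1>", "text/html")
        else:
            self._reply(404, b"not found", "text/plain")

    def do_POST(self):
        length = int(self.headers.get("Content-Length", 0))
        body = json.loads(self.rfile.read(length) or b"{}")
        # Hard-coded demo credentials; a real app would check a user store.
        ok = self.path == "/login" and body == {"user": "ada", "password": "s3cret"}
        self._reply(200 if ok else 401, json.dumps({"ok": ok}).encode(), "application/json")

    def _reply(self, status, payload, content_type):
        self.send_response(status)
        self.send_header("Content-Type", content_type)
        self.send_header("Content-Length", str(len(payload)))
        self.end_headers()
        self.wfile.write(payload)

    def log_message(self, *args):
        pass  # silence the default per-request logging


class LoginJourneyE2ETest(unittest.TestCase):
    @classmethod
    def setUpClass(cls):
        # Port 0 asks the OS for a free port; the app runs as a real HTTP server.
        cls.server = ThreadingHTTPServer(("127.0.0.1", 0), DemoApp)
        cls.base = f"http://127.0.0.1:{cls.server.server_address[1]}"
        threading.Thread(target=cls.server.serve_forever, daemon=True).start()
        # Bypass any proxy configured in the environment, since the app is local.
        cls.opener = urllib.request.build_opener(urllib.request.ProxyHandler({}))

    @classmethod
    def tearDownClass(cls):
        cls.server.shutdown()
        cls.server.server_close()

    def test_user_opens_home_page_then_logs_in(self):
        # Step 1: the user loads the home page.
        with self.opener.open(self.base + "/") as resp:
            self.assertEqual(resp.status, 200)
            self.assertIn(b"Welcome", resp.read())
        # Step 2: the user submits the login form.
        req = urllib.request.Request(
            self.base + "/login",
            data=json.dumps({"user": "ada", "password": "s3cret"}).encode(),
            headers={"Content-Type": "application/json"},
            method="POST",
        )
        with self.opener.open(req) as resp:
            self.assertEqual(json.loads(resp.read()), {"ok": True})
```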

5. Acceptance tests

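Acceptance tests are formal tests that verify whether a system satisfies its business requirements. They require the entire application to be running during testing and focus on replicating user behavior. They can also go further and measure the performance of the system, rejecting changes if certain goals are not met.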
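
One way to express acceptance criteria that can reject a change is sketched below. Each hypothetical `Criterion` pairs a user-level scenario with the result the business expects and a time budget, and `evaluate` accepts the change only if every scenario returns the expected result within its budget. The `search_catalog` function and its data are placeholders; in a real acceptance suite the scenarios would run against the fully deployed application.

```python
import time
from dataclasses import dataclass
from typing import Any, Callable, List, Tuple


@dataclass
class Criterion:
    """One acceptance criterion: a user-level scenario, its expected result, and a time budget."""
    name: str
    scenario: Callable[[], Any]
    expected: Any
    max_seconds: float


def evaluate(criteria: List[Criterion]) -> Tuple[bool, List[str]]:
    """Run every criterion; the change is accepted only if none of them fails."""
    failures = []
    for c in criteria:
        start = time.perf_counter()
        actual = c.scenario()
        elapsed = time.perf_counter() - start
        if actual != c.expected:
            failures.append(f"{c.name}: expected {c.expected!r}, got {actual!r}")
        elif elapsed > c.max_seconds:
            failures.append(f"{c.name}: took {elapsed:.3f}s, budget is {c.max_seconds}s")
    return (not failures, failures)


# Placeholder for the application under test; a real suite would drive the deployed app.
CATALOG = {"tent": 120.0, "stove": 45.0, "tent pegs": 8.5}


def search_catalog(term: str) -> List[str]:
    return sorted(name for name in CATALOG if term in name)


criteria = [
    Criterion("customer finds tents", lambda: search_catalog("tent"), ["tent", "tent pegs"], 0.5),
    Criterion("unknown term returns nothing", lambda: search_catalog("kayak"), [], 0.5),
]
accepted, failures = evaluate(criteria)
```

A pipeline step could, for instance, exit with a non-zero status when `accepted` is `False`, which is how such a gate would block the change.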

6. Performance tests

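Performance tests evaluate how a system performs under a particular workload. These tests help measure the reliability, speed, scalability, and responsiveness of an application. For instance, a performance test can observe response times while a high number of requests is being executed, or determine how the system behaves with a significant amount of data. It can determine whether an application meets its performance requirements, locate bottlenecks, measure stability during peak traffic, and more.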
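
A very small performance test might look like the sketch below. It fires a fixed number of calls at a hypothetical `handle_request` operation from several worker threads and reports the median latency, the 95th-percentile latency, and the throughput. The workload size, request count, concurrency, and the 50 ms budget are all assumptions; in practice `handle_request` would be a network call to the system under test (where threads overlap I/O waits), and larger load tests are usually run with dedicated tooling.

```python
import statistics
import time
from concurrent.futures import ThreadPoolExecutor


def handle_request(n: int) -> int:
    """Hypothetical operation under test. It is CPU-bound here, so Python threads will not
    run it in parallel; a real test would call the system over the network instead."""
    return sum(i * i for i in range(n))


def measure(workload: int, requests: int, concurrency: int) -> dict:
    """Issue `requests` calls using `concurrency` worker threads and return latency stats."""

    def timed_call(_):
        start = time.perf_counter()
        handle_request(workload)
        return (time.perf_counter() - start) * 1000  # milliseconds

    wall_start = time.perf_counter()
    with ThreadPoolExecutor(max_workers=concurrency) as pool:
        latencies = list(pool.map(timed_call, range(requests)))
    wall_seconds = time.perf_counter() - wall_start

    return {
        "requests": requests,
        "median_ms": statistics.median(latencies),
        # quantiles(n=100) returns 99 cut points; index 94 is the 95th percentile.
        "p95_ms": statistics.quantiles(latencies, n=100)[94],
        "throughput_rps": requests / wall_seconds,
    }


P95_BUDGET_MS = 50.0  # hypothetical performance requirement
stats = measure(workload=10_000, requests=200, concurrency=8)
meets_budget = stats["p95_ms"] <= P95_BUDGET_MS
print(stats, "within budget" if meets_budget else "over budget")
```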

7. Smoke tests

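Smoke tests are basic tests that check the core functionality of an application. They are meant to be quick to execute, and their goal is to give you assurance that the major features of your system are working as expected.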

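Smoke tests can be useful right after a new build is made, to decide whether or not you can run more expensive tests, or right after a deployment, to make sure that the application is running properly in the newly deployed environment.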
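
A smoke-test runner can be very small. In the sketch below, `http_ok` builds a check that passes when a URL answers with HTTP 200 within a short timeout, and `run_smoke` runs the checks in order and stops at the first failure, so a broken build or deployment is flagged within seconds. Both helpers are hypothetical names, not part of any particular tool.

```python
import urllib.request
from typing import Callable, List, Tuple


def http_ok(url: str, timeout: float = 2.0) -> Callable[[], bool]:
    """Build a check that passes when `url` answers with HTTP 200 within `timeout` seconds."""
    def check() -> bool:
        try:
            with urllib.request.urlopen(url, timeout=timeout) as resp:
                return resp.status == 200
        except OSError:  # URLError, HTTPError, timeouts and refused connections are OSErrors
            return False
    return check


def run_smoke(checks: List[Tuple[str, Callable[[], bool]]]) -> bool:
    """Run checks in order and stop at the first failure so a broken build is rejected fast."""
    for name, check in checks:
        if not check():
            print(f"SMOKE FAIL: {name}")
            return False
        print(f"smoke ok:   {name}")
    return True
```

For example, right after a deployment a pipeline could call `run_smoke([("health endpoint", http_ok("https://staging.example.com/health"))])`, where the URL is a placeholder, and fail the deployment if it returns `False`.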

How to automate your tests

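To automate your tests, you first need to write them programmatically using a testing framework that suits your application. PHPUnit, Mocha, and RSpec are examples of testing frameworks you can use for PHP, JavaScript, and Ruby, respectively. There are many options for each language, so you may need to do some research and ask developer communities to find out which framework is best for you.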

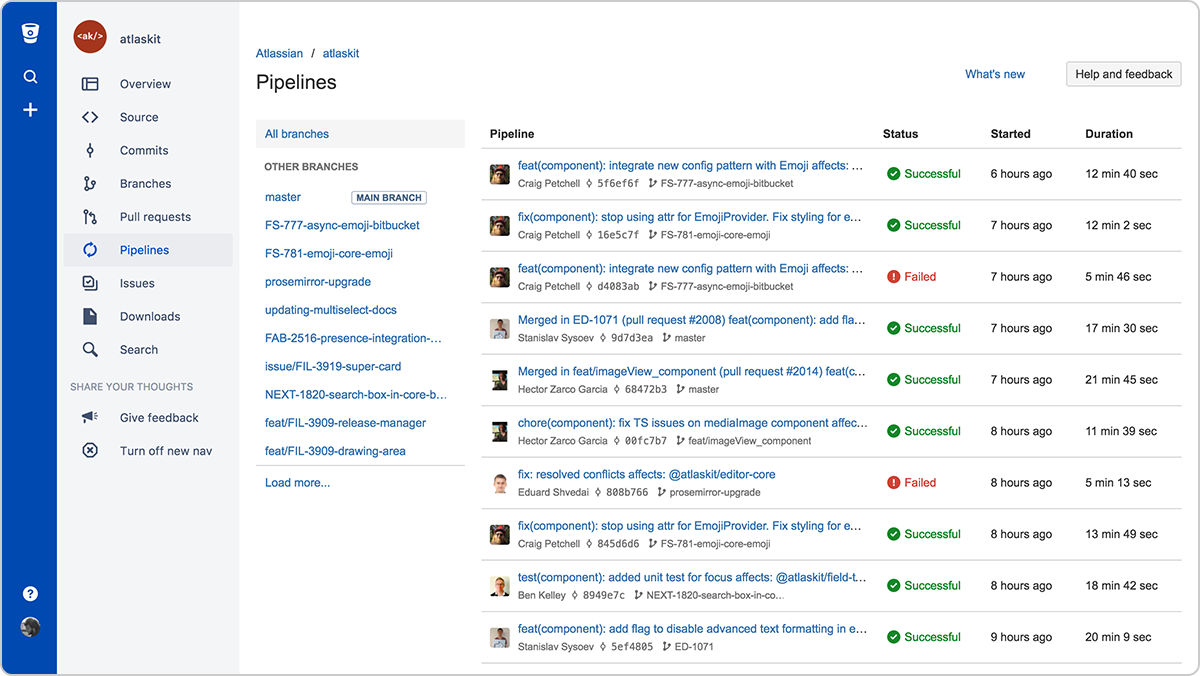

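Once your tests can be executed by a script from your terminal, you can have them run automatically by a continuous integration server like Bamboo, or use a cloud service like Bitbucket Pipelines. These tools monitor your repositories and execute your test suite whenever new changes are pushed to the main repository.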

Exploratory testing

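The more features and improvements go into your code, the more you need to test to make sure your whole system works properly. And for every bug you fix, it is wise to check that it doesn't come back in newer releases. Automation is key to making this possible, and sooner or later writing tests will become part of your development workflow.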
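
A common way to make sure a fixed bug stays fixed is a regression test that reproduces the original failure. In the hypothetical sketch below, `average_rating` is assumed to have once crashed with `ZeroDivisionError` for a product with no ratings; the first test pins the corrected behaviour, so any later change that reintroduces the bug fails the build.

```python
import unittest


def average_rating(ratings):
    """Hypothetical function. Assumed past bug: it raised ZeroDivisionError for a product
    with no ratings; the fix returns None instead."""
    if not ratings:
        return None
    return sum(ratings) / len(ratings)


class AverageRatingRegressionTest(unittest.TestCase):
    def test_no_ratings_does_not_crash(self):
        # Reproduces the original bug report so the fix cannot silently regress.
        self.assertIsNone(average_rating([]))

    def test_normal_case_still_works(self):
        self.assertEqual(average_rating([4, 5, 3]), 4.0)
```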

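So the question is whether manual testing is still worth doing. The short answer is yes, and it is best done as exploratory testing, to uncover non-obvious errors.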

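An exploratory testing session should not exceed two hours and needs a clear scope to help testers focus on a specific area of the software. Once all testers have been briefed, they should try a variety of actions to check how the system behaves.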

A note about testing

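To finish this guide, it's important to talk about the goal of testing. While it's important to test that users can actually use your application (they can log in and save an object), it is equally important to test that your application doesn't break when it receives bad data or when unexpected actions are performed. You need to anticipate what happens when a user makes a typo, tries to save an incomplete form, or uses the wrong API. You need to check whether someone can easily compromise data or gain access to resources they shouldn't. A good test suite should try to break your application and help you understand its limits.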
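
The tests below sketch what trying to break the application can look like for a hypothetical `update_email` endpoint: besides the happy path, they submit an incomplete form, an email with a typo, a value of the wrong type (as a misbehaving API client might send), and an attempt to edit another user's profile. The validation rules are deliberately simplistic assumptions made for the example, not a recommended way to validate email addresses.

```python
import unittest


class ValidationError(Exception):
    pass


class PermissionDenied(Exception):
    pass


def update_email(current_user: str, owner: str, form: dict) -> dict:
    """Hypothetical endpoint: lets a user change the email on their own profile only."""
    if current_user != owner:
        raise PermissionDenied("cannot edit another user's profile")
    email = form.get("email")
    if not isinstance(email, str) or not email.strip():
        raise ValidationError("email is required")
    local, sep, domain = email.strip().partition("@")
    if not local or not sep or "." not in domain:
        raise ValidationError(f"invalid email: {email!r}")
    return {"owner": owner, "email": email.strip()}


class UpdateEmailNegativeTest(unittest.TestCase):
    def test_happy_path(self):
        saved = update_email("ada", "ada", {"email": "ada@example.com"})
        self.assertEqual(saved["email"], "ada@example.com")

    def test_incomplete_form_is_rejected(self):
        with self.assertRaises(ValidationError):
            update_email("ada", "ada", {})

    def test_typo_in_email_is_rejected(self):
        with self.assertRaises(ValidationError):
            update_email("ada", "ada", {"email": "ada.example.com"})

    def test_wrong_type_from_api_client_is_rejected(self):
        with self.assertRaises(ValidationError):
            update_email("ada", "ada", {"email": 42})

    def test_cannot_edit_someone_elses_profile(self):
        with self.assertRaises(PermissionDenied):
            update_email("mallory", "ada", {"email": "m@example.com"})
```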

And finally, tests are code too! Don't forget them during code review, because they might be the final gate to production.

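Atlassian's Open DevOps also provides an open toolchain platform that lets you build a CD-based development pipeline with the tools you love. Find out how Atlassian and third-party tools can integrate testing into your workflow with our DevOps testing tutorials.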
