Continuous delivery pipeline basics

Learn how to chain automated builds, tests, and deployments into one release workflow.

Juni Mukherjee

Developer Advocate

What is a continuous delivery pipeline?

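A continuous delivery pipeline is a series of automated processes for delivering new software. It is an implementation of the continuous paradigm, in which automated builds, tests, and deployments are orchestrated as one release workflow. Put more simply, a CD pipeline is the set of steps your code changes go through to reach production.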

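CD pipelines deliver quality products frequently and predictably, in an automated fashion, from test to staging to production, according to business needs.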

First, let's focus on three concepts: quality, frequency, and predictability.

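We emphasize quality to underscore that it must not be traded for speed. Nobody wants a pipeline that ships faulty code to production at high speed. We'll cover the "shift left" and "DevSecOps" principles and discuss how to move quality and security upstream in the software development lifecycle (SDLC). This should put to rest any concern that continuous delivery pipelines pose risks to the business.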

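Frequency means that pipelines can execute at any time to release features, because they are programmed to trigger on commits to the codebase. Once the pipeline MVP (minimum viable product) is in place, it can execute as many times as needed, with only periodic maintenance cost. This automated approach scales without burning out the team. It also lets teams make small, incremental improvements to their products without fearing a major catastrophe in production.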

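You may have heard it many times, but "to err is human" still holds true. Teams brace for impact during manual releases because those processes are brittle. Predictability means that releases done through continuous delivery pipelines are deterministic in nature. Because pipelines are programmable infrastructure, teams can expect the desired behavior every time. Incidents are still inevitable, of course, because all software has bugs. However, pipelines are far better than error-prone manual release processes because, unlike humans, pipelines don't falter under the pressure of looming deadlines.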

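Pipelines have software gates that automatically promote or reject versioned artifacts. If the release protocol is not honored, the software gate stays closed and the pipeline aborts. Alerts are generated, and notifications are sent to a distribution list made up of the team members who may have broken the pipeline.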
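As a rough illustration of the gate behavior described above, the following Python sketch models each gate as a check that either lets a versioned artifact through or aborts the pipeline and notifies a distribution list. The names `Artifact`, `Gate`, `PipelineAborted`, `run_pipeline`, and the `notify` callback are hypothetical; a real CD tool provides its own gate and notification mechanisms.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass(frozen=True)
class Artifact:
    name: str
    version: str


@dataclass
class Gate:
    name: str
    # Returns True if the artifact honors the release protocol for this gate.
    check: Callable[[Artifact], bool]


class PipelineAborted(Exception):
    pass


def run_pipeline(artifact: Artifact, gates: List[Gate],
                 notify: Callable[[str], None]) -> List[str]:
    """Pass the artifact through each gate in order; abort at the first closed gate."""
    passed = []
    for gate in gates:
        if not gate.check(artifact):
            # The gate stays closed: alert the distribution list and stop the pipeline.
            message = f"{artifact.name}@{artifact.version} rejected at gate '{gate.name}'"
            notify(message)
            raise PipelineAborted(message)
        passed.append(gate.name)
    return passed
```

Passing `alerts.append` as `notify` is enough to see the behavior locally; in practice the callback would post to email, chat, or paging.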

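That's how a CD pipeline works: each successful pipeline run takes a commit, or a small batch of incremental commits, to production. Over time, teams ship features, and ultimately products, in a safe and auditable way.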

Phases in a continuous delivery pipeline

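The architecture of the product that flows through the pipeline is a key factor in determining the structure of the continuous delivery pipeline. Highly coupled product architectures produce complex, graph-shaped pipeline patterns in which various pipelines can become entangled before finally reaching production.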

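Product architecture also influences the different phases of the pipeline and the artifacts each phase produces. Let's discuss the four common phases of continuous delivery: component, subsystem, system, and production.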

Even if you expect your organization to have more or fewer than four phases, the concepts outlined below still apply.

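A common misconception is that these phases have a physical manifestation in your pipeline. They don't have to. These are logical phases that can map to environmental milestones such as test, staging, and production. For example, components and subsystems can be built, tested, and deployed in test. Subsystems or systems can be assembled, tested, and deployed in staging. Subsystems or systems can be promoted to production as part of the production phase.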

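Finding defects in test is cheap, finding them in staging is moderately expensive, and finding them in production is costly. "Shift left" means moving verification earlier in the process. Today, the gates from test to staging have far more defensive techniques built in, so staging no longer has to look like a crime scene!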

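Historically, InfoSec came in at the end of the software development lifecycle, rejecting releases that might pose cybersecurity threats to the company. Although the intentions were good, this led to frustration and delays. "DevSecOps" advocates building security into products from the design phase onward, rather than sending (potentially insecure) finished products for evaluation.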

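Let's take a closer look at how "shift left" and "DevSecOps" are addressed in the continuous delivery workflow. In the next sections, we'll discuss each phase in detail.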

CD component phase

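The pipeline starts by building components, the smallest distributable and testable units of the product. For example, a library built by the pipeline can be termed a component. Components can be certified by means such as code reviews, unit tests, and static code analyzers.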

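Code reviews are important for giving the team a shared understanding of the features, tests, and infrastructure a product needs to go live. A second pair of eyes can often work wonders. Over the years, we can become so used to bad code that we stop seeing it as bad. A fresh perspective can force us to revisit those weaknesses and refactor them where needed.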

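Unit tests are almost always the first set of software tests we run against our code. They don't touch the database or the network. Code coverage is the percentage of code exercised by unit tests. There are many ways to measure coverage, such as line coverage, class coverage, and method coverage.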
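To make "they don't touch the database or the network" concrete, here is a minimal sketch of a unit test that replaces a database-backed dependency with an in-memory fake. `PriceService`, `PriceRepository`, and `InMemoryPrices` are hypothetical names that only illustrate the pattern.

```python
import unittest
from typing import Dict, Protocol


class PriceRepository(Protocol):
    def get_price(self, sku: str) -> float: ...


class PriceService:
    def __init__(self, repo: PriceRepository) -> None:
        self.repo = repo

    def total(self, cart: Dict[str, int]) -> float:
        """Sum price * quantity for every SKU in the cart."""
        return sum(self.repo.get_price(sku) * qty for sku, qty in cart.items())


class InMemoryPrices:
    """Fake repository: a dict lookup instead of a database query."""

    def __init__(self, prices: Dict[str, float]) -> None:
        self.prices = prices

    def get_price(self, sku: str) -> float:
        return self.prices[sku]


class PriceServiceTest(unittest.TestCase):
    def test_total_multiplies_price_by_quantity(self) -> None:
        service = PriceService(InMemoryPrices({"apple": 0.5, "pear": 2.0}))
        self.assertAlmostEqual(service.total({"apple": 4, "pear": 1}), 4.0)

    def test_unknown_sku_raises(self) -> None:
        service = PriceService(InMemoryPrices({}))
        with self.assertRaises(KeyError):
            service.total({"kiwi": 1})
```

Because the service depends on an interface rather than a concrete database client, the tests run in milliseconds, for example with `python -m unittest`.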

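While good code coverage makes refactoring easier, mandating high coverage targets is counterproductive. Counterintuitively, some teams with higher code coverage have more production outages than teams with lower coverage. Also, keep in mind that coverage numbers are easy to game. Under intense pressure, especially during performance reviews, developers can resort to unfair practices to inflate code coverage. I won't go into detail here!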

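Static code analysis detects problems in code without executing it, which makes it an inexpensive way to find issues. Like unit tests, these checks run on source code and finish quickly. Static analyzers can detect potential memory leaks as well as code-quality indicators such as cyclomatic complexity and code duplication. In this phase, static application security testing (SAST) is a proven way to discover security vulnerabilities.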
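As an illustration only, the following sketch uses Python's standard `ast` module to approximate McCabe cyclomatic complexity per function without executing the code: it starts at one and adds one for each `if`, loop, `except` handler, and conditional expression, plus one per extra boolean operand and per comprehension `if` clause. Real analyzers apply more complete rules; this simplified counting is an assumption for demonstration.

```python
import ast
from typing import Dict

# Node types that each add one independent path through a function.
_DECISION_NODES = (ast.If, ast.For, ast.AsyncFor, ast.While,
                   ast.IfExp, ast.ExceptHandler)


def cyclomatic_complexity(source: str) -> Dict[str, int]:
    """Approximate complexity per function, found by parsing (not running) the source.

    Simplification: decision points inside a nested function are also
    counted toward its enclosing function.
    """
    tree = ast.parse(source)
    results: Dict[str, int] = {}
    for node in ast.walk(tree):
        if isinstance(node, (ast.FunctionDef, ast.AsyncFunctionDef)):
            complexity = 1
            for child in ast.walk(node):
                if isinstance(child, _DECISION_NODES):
                    complexity += 1
                elif isinstance(child, ast.BoolOp):
                    # "a and b and c" adds two extra paths.
                    complexity += len(child.values) - 1
                elif isinstance(child, ast.comprehension):
                    complexity += len(child.ifs)
            results[node.name] = complexity
    return results
```

Under this counting, a function with an `if`/`elif` pair, one `for` loop, and a nested `if a and b` scores 6, while a function that simply returns a value scores 1.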

Define the metrics that control the software gates and govern code promotion from the component phase to the subsystem phase.
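One way to define such metrics is as explicit thresholds that the pipeline evaluates on every run. In this minimal sketch, the metric fields and the threshold values in `GatePolicy` (`min_line_coverage`, `max_complexity`, `max_sast_high`) are hypothetical placeholders, not recommendations. Because mandating high coverage targets is counterproductive, the coverage floor here is deliberately modest.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class ComponentMetrics:
    unit_tests_passed: bool
    line_coverage: float      # fraction of executable lines run by unit tests, 0.0 to 1.0
    max_complexity: int       # highest cyclomatic complexity of any function
    sast_high_findings: int   # high-severity findings reported by SAST


@dataclass(frozen=True)
class GatePolicy:
    min_line_coverage: float = 0.6
    max_complexity: int = 15
    max_sast_high: int = 0


def evaluate_gate(m: ComponentMetrics, policy: GatePolicy) -> List[str]:
    """Return the reasons for rejection; an empty list means the gate opens."""
    reasons = []
    if not m.unit_tests_passed:
        reasons.append("unit tests failed")
    if m.line_coverage < policy.min_line_coverage:
        reasons.append(f"line coverage {m.line_coverage:.0%} below {policy.min_line_coverage:.0%}")
    if m.max_complexity > policy.max_complexity:
        reasons.append(f"cyclomatic complexity {m.max_complexity} above {policy.max_complexity}")
    if m.sast_high_findings > policy.max_sast_high:
        reasons.append(f"{m.sast_high_findings} high-severity SAST findings")
    return reasons
```

Returning every reason, rather than stopping at the first, gives the team one complete report per pipeline run.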

CD subsystem phase

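Loosely coupled components make up subsystems, the smallest deployable and runnable units. For example, a server is a subsystem, and a microservice running in a container is also a subsystem. Unlike components, subsystems can be stood up and validated against customer use cases.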

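Just as a Node.js UI and a Java API layer are subsystems, databases are subsystems too. In some organizations, RDBMSs (relational database management systems) are still handled manually, even though a new generation of tools has emerged that automates database change management and successfully delivers databases continuously. CD pipelines involving NoSQL databases are easier to implement than those involving an RDBMS.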
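The core idea behind automated database change management is that schema changes are versioned scripts applied in order by the pipeline, not by hand. The sketch below shows that idea with Python's built-in `sqlite3` module and a hypothetical `schema_version` table and migration list; dedicated tools add locking, checksums, and rollback support that this example omits.

```python
import sqlite3
from typing import List

# Ordered, versioned migrations; each entry is applied at most once.
MIGRATIONS: List[str] = [
    "CREATE TABLE customers (id INTEGER PRIMARY KEY, name TEXT NOT NULL)",
    "ALTER TABLE customers ADD COLUMN email TEXT",
    "CREATE INDEX idx_customers_email ON customers (email)",
]


def current_version(conn: sqlite3.Connection) -> int:
    conn.execute("CREATE TABLE IF NOT EXISTS schema_version (version INTEGER NOT NULL)")
    row = conn.execute("SELECT MAX(version) FROM schema_version").fetchone()
    return row[0] or 0


def migrate(conn: sqlite3.Connection, migrations: List[str] = MIGRATIONS) -> int:
    """Apply pending migrations in order and return the resulting schema version."""
    version = current_version(conn)
    for number, statement in enumerate(migrations, start=1):
        if number <= version:
            continue  # already applied by an earlier pipeline run
        with conn:  # record the version only if the statement succeeds
            conn.execute(statement)
            conn.execute("INSERT INTO schema_version (version) VALUES (?)", (number,))
        version = number
    return version
```

Running `migrate` twice is safe: the second run finds nothing pending, which is what lets the pipeline call it on every deployment.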

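Subsystems can be deployed and certified through functional, performance, and security tests. Let's look at how each type of test validates the product.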

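Functional tests cover all customer use cases, including internationalization (I18N), localization (L10N), data quality, access permissions, and negative scenarios. These tests ensure that your product works the way customers expect, is inclusive, and serves its target markets.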

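Work with your product owners to define your performance baselines. Integrate your performance tests with the pipeline, and use the baselines to pass or fail the pipeline. A common misconception is that performance tests don't need to be integrated with continuous delivery pipelines; however, leaving them out breaks the continuous paradigm.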
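A minimal sketch of using a baseline to pass or fail the pipeline: compute a latency percentile from the performance test run and compare it with the baseline agreed with the product owner, plus a tolerance. The p95 metric, the 10% tolerance, and the nearest-rank percentile method are assumptions chosen for illustration.

```python
import math
from typing import Sequence


def percentile(samples: Sequence[float], pct: float) -> float:
    """Nearest-rank percentile (pct in (0, 100]) of the latency samples."""
    if not samples:
        raise ValueError("no samples")
    ordered = sorted(samples)
    rank = math.ceil(pct * len(ordered) / 100)
    return ordered[max(rank, 1) - 1]


def performance_gate(latencies_ms: Sequence[float], baseline_p95_ms: float,
                     tolerance: float = 0.10) -> bool:
    """Pass only if the measured p95 latency is within tolerance of the baseline."""
    measured = percentile(latencies_ms, 95)
    return measured <= baseline_p95_ms * (1 + tolerance)
```

The pipeline would abort when `performance_gate` returns `False`, just like any other closed software gate.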

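Major organizations have been breached in recent years, and cybersecurity threats are at an all-time high. We need to make sure our products have no security vulnerabilities, whether in the code we write or in the third-party libraries we import into it. In fact, major vulnerabilities have been found in OSS (open-source software), and we should use tools and techniques that flag these issues and force the pipeline to abort. Dynamic application security testing (DAST) is a proven way to discover security vulnerabilities.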
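To make the pipeline abort on known vulnerabilities in third-party libraries, a dependency-scanning step can compare resolved dependency versions against an advisory feed. In this sketch the advisory data is a hard-coded, hypothetical dictionary (the package `examplelib` and the ID `ADV-0001` are invented); a real pipeline would use a dedicated scanner backed by a maintained vulnerability database.

```python
from typing import Dict, List, Tuple

# Hypothetical advisory feed: package -> {vulnerable version: advisory ID}.
ADVISORIES: Dict[str, Dict[str, str]] = {
    "examplelib": {"1.2.0": "ADV-0001", "1.2.1": "ADV-0001"},
}


def find_vulnerable(dependencies: Dict[str, str],
                    advisories: Dict[str, Dict[str, str]] = ADVISORIES
                    ) -> List[Tuple[str, str, str]]:
    """Return (package, version, advisory) for every dependency with a known advisory."""
    findings = []
    for package, version in sorted(dependencies.items()):
        advisory = advisories.get(package, {}).get(version)
        if advisory is not None:
            findings.append((package, version, advisory))
    return findings


def security_gate(dependencies: Dict[str, str]) -> None:
    """Raise to abort the pipeline if any dependency has a known vulnerability."""
    findings = find_vulnerable(dependencies)
    if findings:
        details = ", ".join(f"{p}=={v} ({a})" for p, v, a in findings)
        raise RuntimeError(f"vulnerable dependencies: {details}")
```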

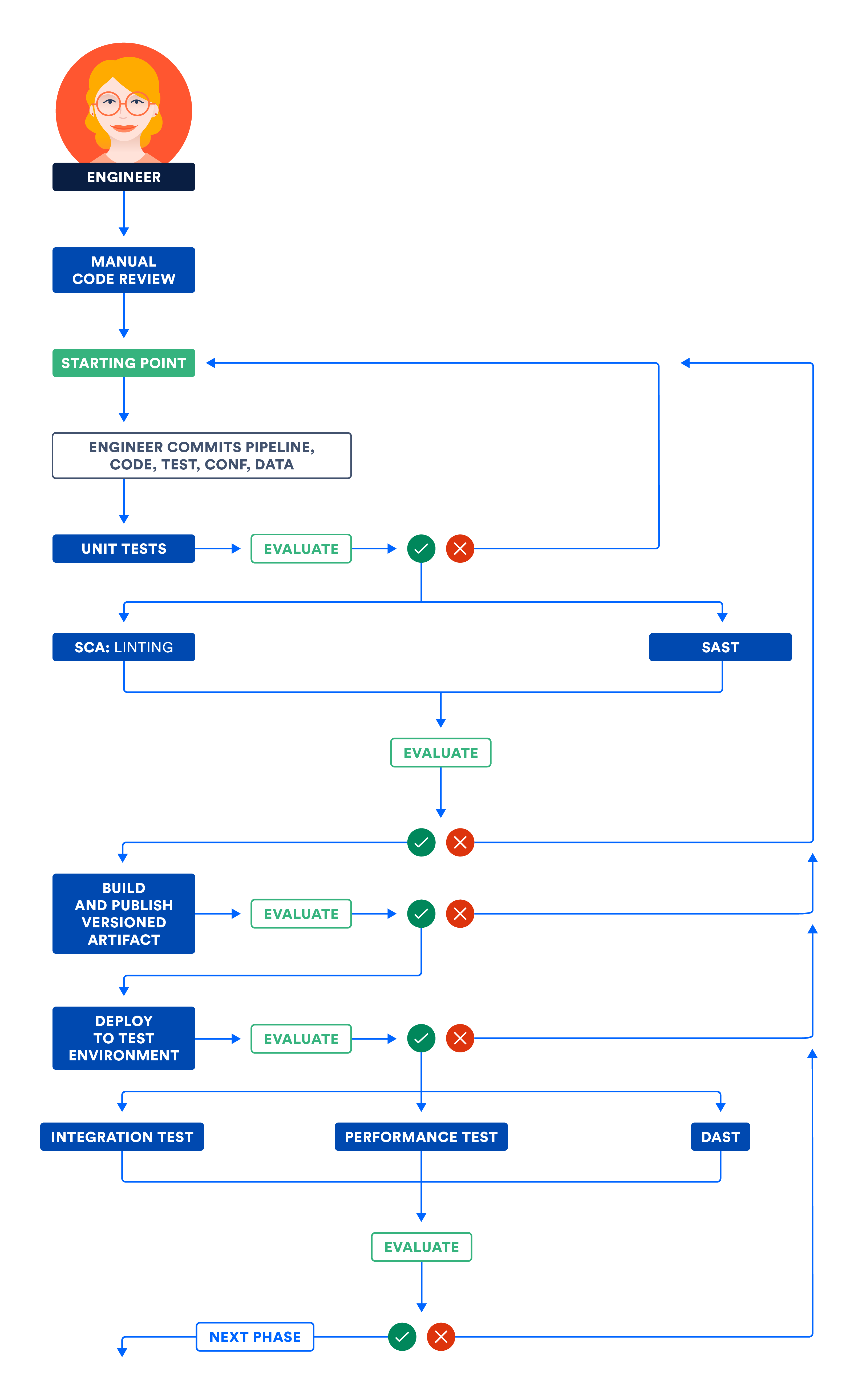

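The diagram illustrates the workflow discussed in the component and subsystem phases. Run independent steps in parallel to optimize the pipeline's total execution time and get fast feedback.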

A) Certify components and/or subsystems in the test environment
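Running independent steps in parallel can be sketched with Python's `concurrent.futures`: steps that don't depend on each other run concurrently, so the wall-clock time approaches that of the slowest step rather than the sum of all steps. The `fake_step` helper is a hypothetical stand-in for real functional, performance, and security suites.

```python
import time
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, Dict


def run_in_parallel(steps: Dict[str, Callable[[], bool]]) -> Dict[str, bool]:
    """Run independent steps concurrently and collect each step's pass/fail result."""
    with ThreadPoolExecutor(max_workers=max(1, len(steps))) as pool:
        futures = {name: pool.submit(step) for name, step in steps.items()}
        return {name: future.result() for name, future in futures.items()}


def fake_step(seconds: float, passed: bool = True) -> Callable[[], bool]:
    """Hypothetical test suite that takes `seconds` to run and reports `passed`."""
    def step() -> bool:
        time.sleep(seconds)
        return passed
    return step
```

Collecting every result before deciding lets the pipeline report all failing suites at once, which is the fast feedback the text is after.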

CD system phase

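Once subsystems meet functional, performance, and security expectations, the pipeline can be taught to assemble a system from loosely coupled subsystems in cases where the entire system must be released as a whole. This means the fastest team can only move at the pace of the slowest team. It reminds me of the old saying: "A chain is only as strong as its weakest link."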

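We recommend against this composition anti-pattern, in which subsystems are composed into a system that is released as a whole. The anti-pattern ties the success of every subsystem to all the others. If you invest in independently deployable artifacts, you'll be able to avoid it.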

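If the system does need to be validated as a whole, it can be certified through integration, performance, and security tests. Unlike in the subsystem phase, do not use mocks or stubs during testing in this phase. Also, focus on testing interfaces and networks more than anything else.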
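To illustrate testing an interface over a real network connection instead of a mock, the sketch below starts a throwaway HTTP service on the loopback interface with Python's standard `http.server` and calls it with `urllib.request`. The `/health` endpoint and its JSON payload are hypothetical; in an actual system-phase test, the client would call the assembled subsystems in the staging environment.

```python
import json
import threading
import urllib.request
from http.server import BaseHTTPRequestHandler, HTTPServer
from typing import Tuple


class HealthHandler(BaseHTTPRequestHandler):
    """Hypothetical service exposing a JSON /health endpoint."""

    def do_GET(self) -> None:
        if self.path == "/health":
            body = json.dumps({"status": "ok"}).encode()
            self.send_response(200)
            self.send_header("Content-Type", "application/json")
            self.send_header("Content-Length", str(len(body)))
            self.end_headers()
            self.wfile.write(body)
        else:
            self.send_error(404)

    def log_message(self, format, *args) -> None:
        pass  # keep test output quiet


def run_integration_check() -> Tuple[int, dict]:
    """Call the endpoint over a real socket (no mocks) and return status and payload."""
    server = HTTPServer(("127.0.0.1", 0), HealthHandler)  # port 0: OS picks a free port
    port = server.server_address[1]
    thread = threading.Thread(target=server.serve_forever, daemon=True)
    thread.start()
    try:
        with urllib.request.urlopen(f"http://127.0.0.1:{port}/health", timeout=5) as resp:
            return resp.status, json.loads(resp.read())
    finally:
        server.shutdown()
        server.server_close()
```

Because the request crosses a real socket, this kind of test exercises serialization, headers, and connection handling that a mock would silently skip.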

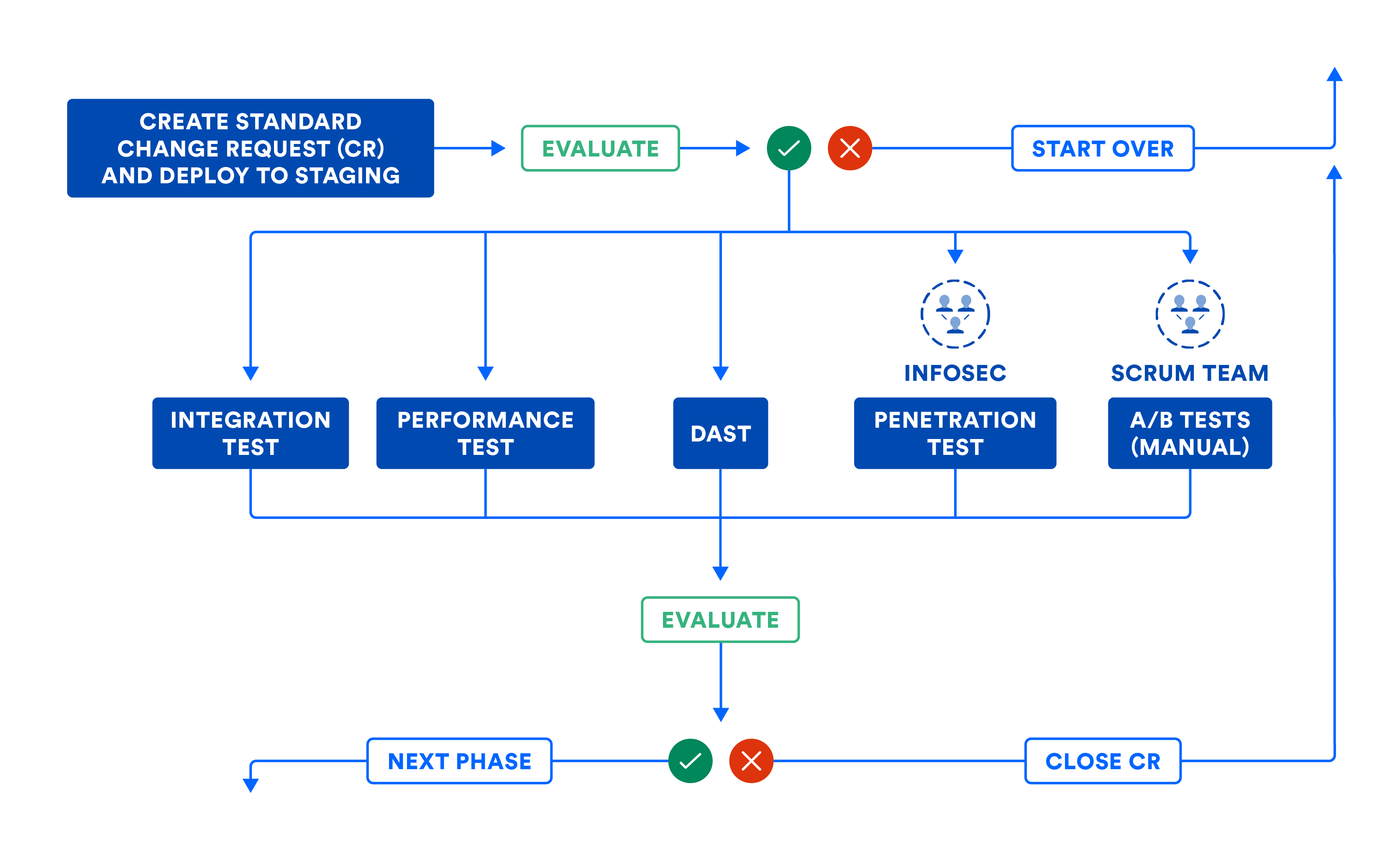

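The diagram summarizes the system-phase workflow, in case you must use composition to assemble subsystems. Even if you can take subsystems to production independently, the diagram helps establish the software gates needed to promote code from this phase to the next.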

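Pipelines can automatically file change requests (CRs) to leave an audit trail. Most organizations use this workflow for standard changes, meaning planned releases. The same workflow should also be used for emergency changes, or unplanned releases, although some teams tend to cut corners there. Notice how the CD pipeline automatically closes the change request when errors force it to abort. This prevents change requests from being abandoned midway through the pipeline workflow.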
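Filing a change request and closing it automatically when the pipeline aborts maps naturally onto a context manager. In this sketch, `ChangeRequestClient` is a hypothetical in-memory stand-in for whatever change-management system an organization uses. The CR is always closed, with an outcome reflecting success or failure, so it is never abandoned mid-workflow.

```python
import itertools
from contextlib import contextmanager
from typing import Dict, Iterator


class ChangeRequestClient:
    """Hypothetical in-memory change-management system that records CR states."""

    def __init__(self) -> None:
        self._ids = itertools.count(1)
        self.summaries: Dict[int, str] = {}
        self.records: Dict[int, str] = {}

    def open(self, summary: str) -> int:
        cr_id = next(self._ids)
        self.summaries[cr_id] = summary
        self.records[cr_id] = "open"
        return cr_id

    def close(self, cr_id: int, outcome: str) -> None:
        self.records[cr_id] = outcome


@contextmanager
def change_request(client: ChangeRequestClient, summary: str) -> Iterator[int]:
    """File a CR, then close it as 'successful' or 'failed' however the block exits."""
    cr_id = client.open(summary)
    try:
        yield cr_id
    except BaseException:
        client.close(cr_id, "failed")  # the pipeline aborted: close the CR, then re-raise
        raise
    else:
        client.close(cr_id, "successful")
```

Wrapping the deployment steps in `with change_request(client, "deploy billing 1.4.2"):` leaves an audit record whether the block completes or raises.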

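The diagram illustrates the workflow discussed in the CD system phase. Note that some steps may involve human intervention; these manual steps can be performed as part of manual gates in the pipeline. When fully mapped out, the pipeline visualization closely resembles a value stream map of the product release!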

B) Certify subsystems and/or systems in the staging environment

Once the assembled system is certified, leave the assembly unchanged and promote it to production.

CD production phase

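Whether subsystems are deployed independently or assembled into a system, the versioned artifacts are deployed to production as part of this final phase.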

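Zero-downtime deployments (ZDD) prevent downtime for customers and should be practiced all the way from test to staging to production. Blue-green deployment is a popular ZDD technique in which the new bits are deployed to a small subset of users (called "green"), while most users stay on "blue," which runs the old bits, entirely unaware of the change. In the worst case, route everyone back to "blue," and few customers, if any, will be affected. If "green" looks good, gradually move everyone from "blue" to "green."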
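The gradual shift from blue to green described above can be sketched as weighted routing with a rollback switch. The `TrafficRouter` class, the default 10% step, and hashing user IDs into 100 buckets are assumptions for illustration; load balancers and service meshes provide their own mechanisms for this.

```python
import hashlib


class TrafficRouter:
    """Routes each user to 'blue' (old bits) or 'green' (new bits) by a stable hash bucket."""

    def __init__(self) -> None:
        self.green_percent = 0

    def route(self, user_id: str) -> str:
        # Hash the user ID so each user consistently sees the same version.
        bucket = int(hashlib.sha256(user_id.encode()).hexdigest(), 16) % 100
        return "green" if bucket < self.green_percent else "blue"

    def dial_up(self, step: int = 10) -> None:
        """Move a further `step` percent of users from blue to green."""
        self.green_percent = min(100, self.green_percent + step)

    def rollback(self) -> None:
        """Worst case: send everyone back to blue."""
        self.green_percent = 0
```

Hashing instead of picking randomly per request keeps each user on one version, so nobody flips between old and new behavior mid-session.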

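However, I have seen some organizations abuse the manual gate system. They require teams to obtain manual approval at change approval board (CAB) meetings. The reason is often a misinterpretation of separation of duties or separation of concerns: one department hands off to another for approval before work can move forward. I have also seen some CAB approvers show only a shallow technical understanding of production changes, which makes the manual approval process slow and tedious.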

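This is a good place to clarify the difference between continuous delivery and continuous deployment. Continuous delivery allows manual gates, whereas continuous deployment does not. Although both are called CD, continuous deployment requires more discipline and rigor because there is no human intervention in the pipeline.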

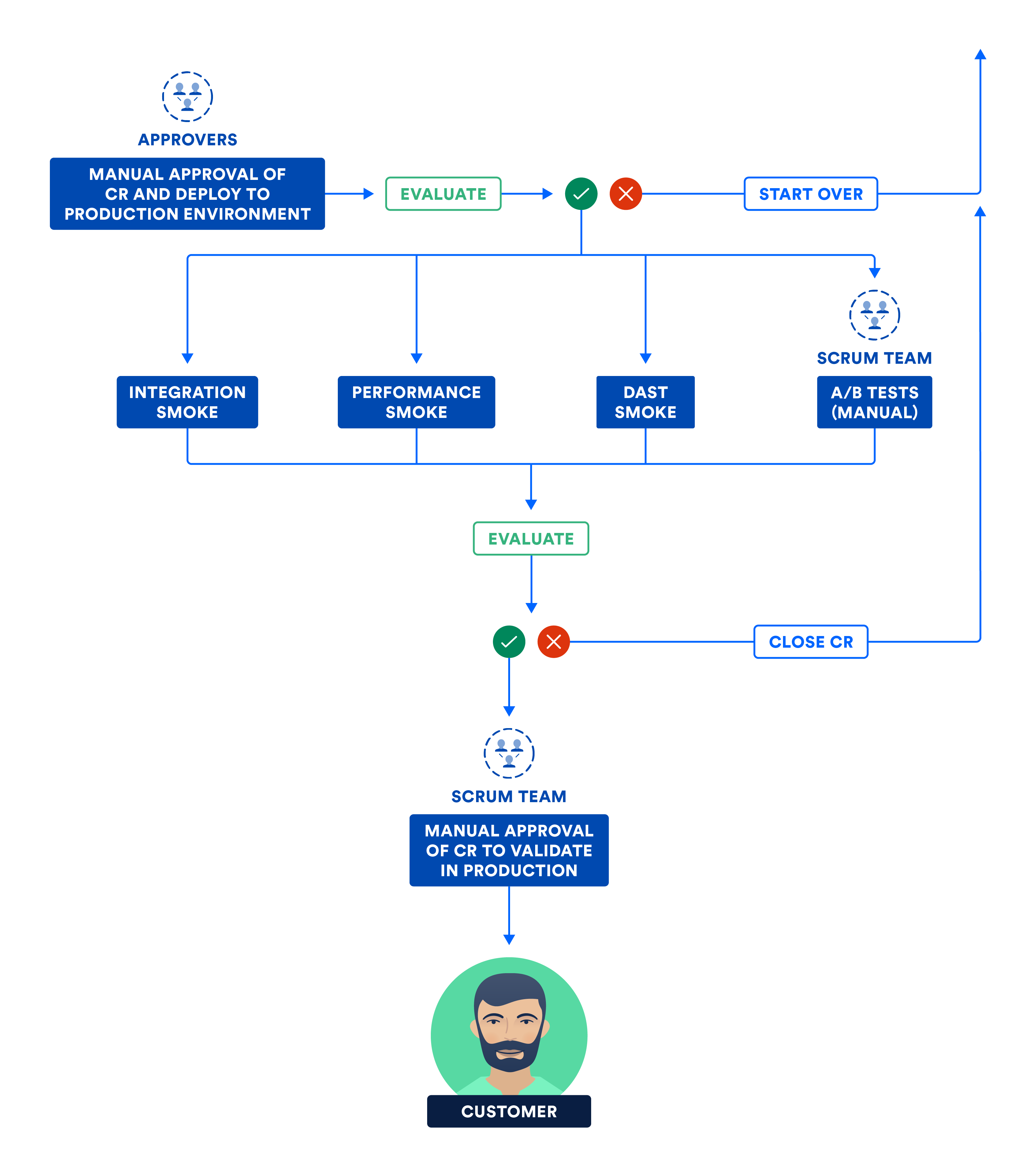

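There is a difference between moving bits and turning them on. Run smoke tests in production; these are a subset of the integration, performance, and security test suites. Once the smoke tests pass, the bits are turned on and the product goes live for customers!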
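The difference between moving bits and turning them on is commonly implemented with a feature flag: the new version is deployed dark, smoke tests run against production, and only then is the flag enabled. In this sketch the flag store is a plain dictionary and the smoke tests are hypothetical callables; a real system would use a feature-flag service.

```python
from typing import Callable, Dict, List


def release(feature: str, flags: Dict[str, bool],
            smoke_tests: List[Callable[[], bool]]) -> bool:
    """Turn a deployed feature on only if every production smoke test passes."""
    flags.setdefault(feature, False)  # the bits are deployed but dark
    if all(test() for test in smoke_tests):
        flags[feature] = True  # bits are on: the feature is live for customers
        return True
    return False  # leave the feature off; the deployed bits stay dormant
```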

The diagram shows the steps teams perform in the final phase of continuous delivery.

C) Certify subsystems and/or systems in the production environment

Continuous delivery is the new normal

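To succeed at continuous delivery or continuous deployment, it's critical to do continuous integration and continuous testing well. With a solid foundation, you'll win on all three fronts: quality, frequency, and predictability.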

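A continuous delivery pipeline helps turn your ideas into products through a series of sustainable experiments. If you discover that an idea isn't as good as you thought, you can quickly come up with a better one. Pipelines also reduce the mean time to resolve (MTTR) production issues, which reduces downtime for customers. With continuous delivery, you end up with productive teams and satisfied customers, and who doesn't want that?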

Learn more in our continuous delivery tutorials.
