What does a Product Growth engineer work on?

Atlassian has many different software development teams within our Engineering organization. Some teams focus on products such as Jira, Confluence, or Bitbucket. Many others work on platform-focused capabilities or infrastructure shared between products.

In this blog post, we’re going to focus on the Growth team at Atlassian. We’ll explain how the Growth team operates using methods such as A/B testing, and what it means to be a Growth Engineer. We’ll also talk through an example experiment that Growth Engineers built inside of Jira.

Growth vs Product vs Marketing

One of the frequent questions asked about the Growth team is “How is a Growth team different from Marketing or Product teams?” The chart below helps show some of these differences.

Many Atlassian teams would be classified as “Product” or “Platform” teams. Let’s explore some of the differences between Product and Growth teams.

The difference between Product and Growth teams

Growth and Product teams may have different yet complementary goals in mind. Product engineering teams are often focused on creating and improving a core product offering. Each team works on strategic bets that are driven by product requirements. These bets are based on customer demand and the market. There is a set backlog of expected product changes. Product engineering teams focus on a mix of delivery, engineering, and operational improvements.

In contrast, Growth teams focus on incremental learning and opportunity discovery. Growth teams aim to discover and unlock customer value in existing product offerings.

Product teams may be answering questions like “What product do we need to build to meet the market?”, “What are the customer’s needs?”, or even something as simple as “What feature should we deliver next given our strategy?” The focus for Growth is on answering questions like “Why aren’t users sticking around?” and “What core Product A features do users struggle to discover, and what’s the remedy?”

Product engineering teams look to build a product that fits the market. Growth engineering teams aim to learn how current products are being used. They maintain an unrelenting focus on the health of the user journey to prevent it from degrading. They look into the holistic user journey outside and between products, find opportunities to have a measurable product impact there, and then make incremental changes to unlock those opportunities.

Growth teams may focus on different aspects of the user journey. Some Growth teams work on increasing a user base and product adoption. Other teams work to simplify products and help guide users to discover underutilized product functionality.

Optimizing the user journey can be tricky. Growth teams run experiments that deviate from the existing product experience, then analyze those changes to see whether they actually deliver the expected improvement.

View the world as funnels

How do Growth teams know what to experiment on? To answer that question, teams need to have in-depth knowledge about current user behavior and areas for improvement. One strategy used to learn about usage flows in products is to represent them as funnels.

For example:

How many users visit atlassian.com?

- Of those users, how many view the signup page for a trial of one of our products?

- Of those users, how many actually finish the onboarding for that product?

- and so on…

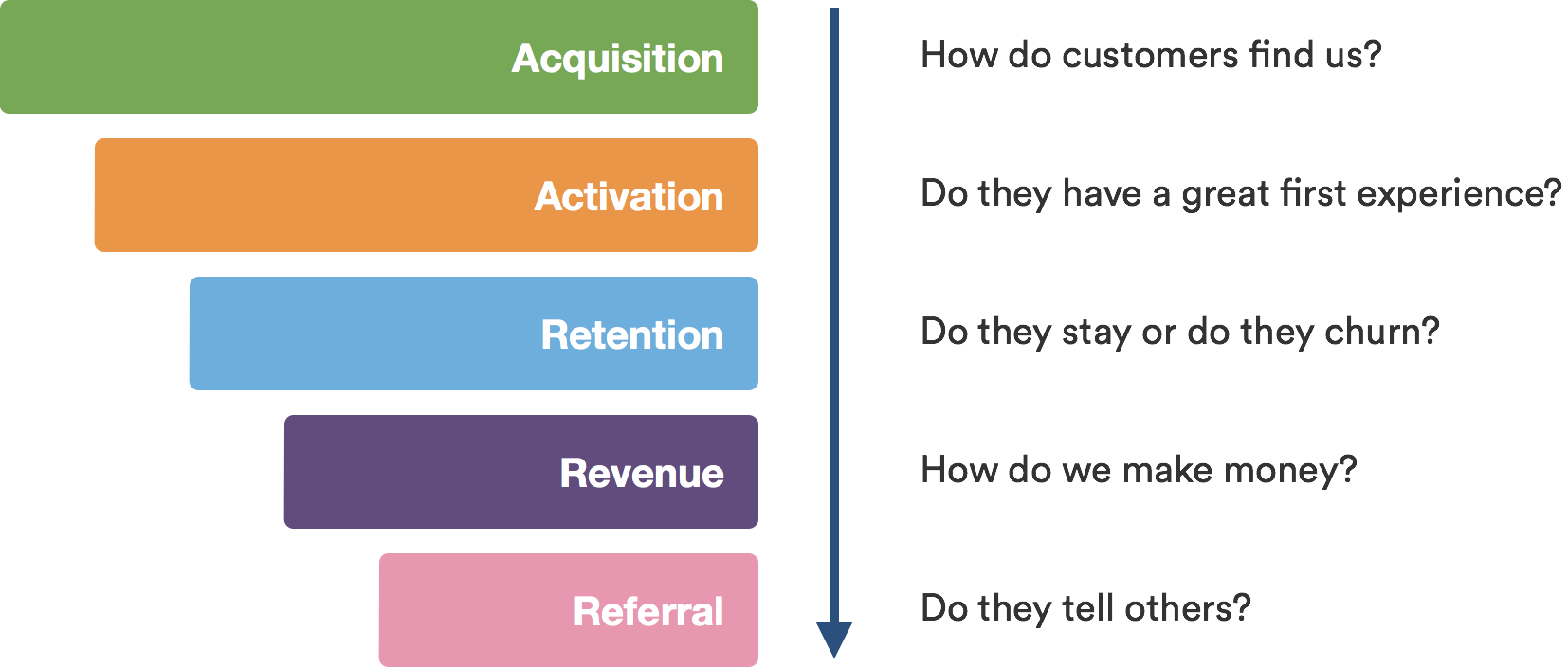

One good funnel framework is known as AARRR, also known as “Pirate metrics.” The chart below describes the framework well.

At Atlassian, a lot of our funnels are complex and involve multiple products – for example, some users may evaluate Jira and Confluence together. Users regularly engage with a suite of Atlassian products and use them together. Growth teams often address concerns like product discoverability or the health of usage funnels. We also have teams that may focus on specific parts of a funnel, for example:

- Activation steps in a funnel. Some teams work on improving the New User Experience on signup and onboarding.

- Referral steps in a funnel. Other teams help make it easier for users to invite their peers to collaborate with them using Atlassian products.

As Growth Engineers, we often work with others to understand and build funnels. Analysts drive the process, while Product Managers, Designers, and Engineers provide feedback. Teams dive into behavior data and build flow charts. Any large drop-offs in the funnel can help locate areas that are ripe for improvement. Those areas then become targets for experimentation and overall product optimization.
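To make the idea of locating drop-offs concrete, here is a minimal sketch that computes step-to-step conversion in a funnel and picks out the weakest transition. The step names mirror the example funnel earlier in this post; the user counts, `FunnelStep`, `step_conversions`, and `largest_drop_off` are hypothetical and exist only for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FunnelStep:
    name: str
    users: int  # number of users who reached this step


def step_conversions(steps):
    """Return (from_step, to_step, conversion_rate) for each adjacent pair."""
    results = []
    for prev, curr in zip(steps, steps[1:]):
        # Guard against an empty step so we never divide by zero.
        rate = curr.users / prev.users if prev.users else 0.0
        results.append((prev.name, curr.name, rate))
    return results


def largest_drop_off(steps):
    """Return the transition with the lowest conversion rate."""
    return min(step_conversions(steps), key=lambda t: t[2])


# Hypothetical counts, for illustration only.
funnel = [
    FunnelStep("visited atlassian.com", 10_000),
    FunnelStep("viewed signup page", 2_500),
    FunnelStep("finished onboarding", 1_000),
]

for src, dst, rate in step_conversions(funnel):
    print(f"{src} -> {dst}: {rate:.0%}")

worst = largest_drop_off(funnel)
print(f"Largest drop-off: {worst[0]} -> {worst[1]}")
```

In practice the lowest conversion rate is only a starting point; whether a step is worth experimenting on also depends on how many users pass through it and on the qualitative research described later in this post.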

All things are good in balance, though. For example, it’s not uncommon to get stuck in analysis paralysis. To mitigate this, we have a process that helps guide our work.

Growth Process

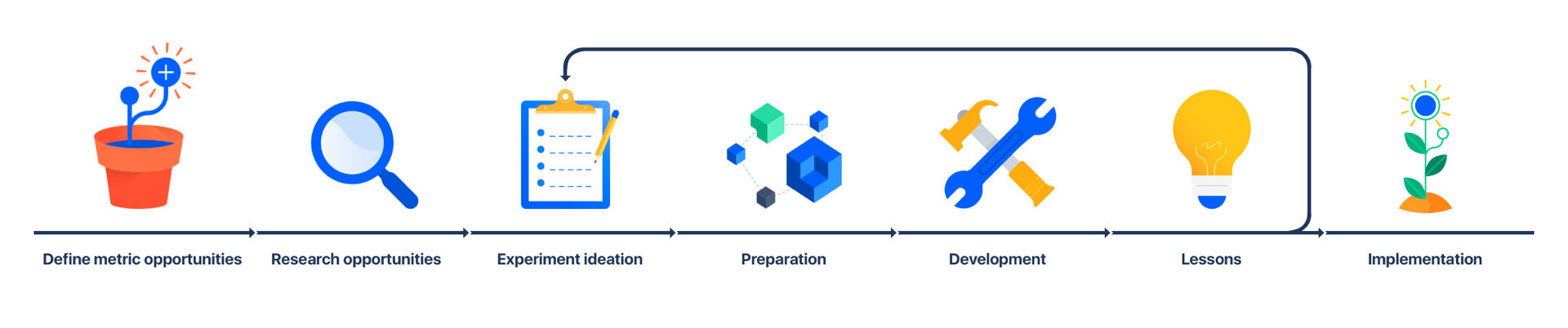

A fundamental tool at our disposal is what we refer to as the Growth Process – a well-defined way of working in Growth.

The goal of following this process is to help teams:

- understand which opportunities will have the greatest impact on their goals.

- generate high-confidence experiment ideas that have a high success rate.

- validate that our ideas have a positive impact using experiments.

- interpret and iterate on experiment results.

As Growth engineers, we engage across all parts of this process from the beginning. We empathize with users and learn about their product usage patterns in depth. Armed with this knowledge, we have a strong say in what is best for the team to deliver.

It’s also important to look at the experience from both a quantitative and qualitative perspective. A quantitative approach involves using hard objective metrics, while a qualitative approach relies on customer interviews and more subjective feedback. Balancing both approaches lifts our confidence that we’re working on the right thing.

We refer to this as being Data Informed rather than Data Driven. We rely on metrics, but also want to have a holistic view of the problem space. This narrows down the scope of work for the team and establishes clear priorities.

Prioritization

There are always too many different opportunities that Growth could be working on. So how do we prioritize?

All ideas are scored and prioritized via frameworks like RICE. Team leadership reviews ideas and prioritizes them using the following criteria:

- feasibility from Engineering perspective

- experience desirability from Design perspective

- business strategy/impact from a Product Manager perspective

- hypothesis strength from an Analytics perspective
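As a rough illustration of scoring, RICE is commonly defined as Reach × Impact × Confidence ÷ Effort. The sketch below applies that formula to a small backlog. The idea names, the numbers, and the units chosen for each field are assumptions made for this example, not real Atlassian data, and the `Idea` class and `prioritize` function are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Idea:
    name: str
    reach: float       # users affected per quarter (assumed unit)
    impact: float      # relative impact per user, e.g. 0.25 to 3
    confidence: float  # 0.0 to 1.0
    effort: float      # person-months (assumed unit)

    def rice_score(self):
        """Reach x Impact x Confidence / Effort."""
        if self.effort <= 0:
            raise ValueError("effort must be positive")
        return self.reach * self.impact * self.confidence / self.effort


def prioritize(ideas):
    """Return ideas sorted from highest to lowest RICE score."""
    return sorted(ideas, key=lambda idea: idea.rice_score(), reverse=True)


# Hypothetical backlog, for illustration only.
backlog = [
    Idea("Cross-product navigation", reach=6000, impact=0.5, confidence=0.5, effort=3.0),
    Idea("Simplify signup form", reach=4000, impact=1.0, confidence=0.8, effort=2.0),
    Idea("Invite teammates prompt", reach=1500, impact=2.0, confidence=0.5, effort=1.0),
]

for idea in prioritize(backlog):
    print(f"{idea.name}: {idea.rice_score():.0f}")
```

A score like this is an input to the discussion rather than a verdict: the Engineering, Design, Product, and Analytics perspectives listed above still shape the final ordering.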

We favor a principle of “Progress over Perfection” where tangible results prevail over extensive delivery.

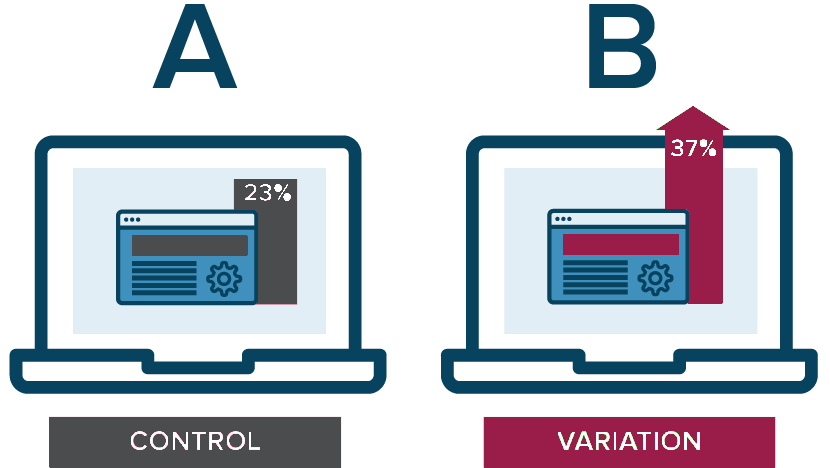

Experimentation through A/B Testing

So, with a backlog of experiment ideas at hand, Growth Engineers get to help make them a reality. The team is able to review or challenge ideas in the backlog before collectively choosing one for execution. Then we build out any necessary UI and backend functionality according to the proposal. The change is then launched as an experiment.

To measure the impact of our changes, we experiment through A/B testing. This allows us to validate new features against the existing, standard experience. A control cohort of users or traffic (A) is allocated with no changes applied, while a variation cohort (B) is exposed to the new experience in parallel. We then compare the control cohort to the variation cohort. Experiments typically hope to see the variation cohort B outperform the control cohort A.
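One common way to compare two cohorts on a conversion-style metric is a two-proportion z-test. The sketch below is a generic statistical illustration and not necessarily the analysis Atlassian uses; the function name `two_proportion_z_test` and the counts in the usage example are hypothetical.

```python
import math


def two_proportion_z_test(conv_a, n_a, conv_b, n_b):
    """Compare conversion rates of control (A) and variation (B).

    Returns (absolute_uplift, z_statistic, two_sided_p_value).
    """
    p_a = conv_a / n_a
    p_b = conv_b / n_b
    # Pooled rate under the null hypothesis that A and B convert equally.
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        raise ValueError("standard error is zero; rates are all 0% or 100%")
    z = (p_b - p_a) / se
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return p_b - p_a, z, p_value


# Hypothetical counts, for illustration only.
uplift, z, p_value = two_proportion_z_test(conv_a=100, n_a=1000, conv_b=130, n_b=1000)
print(f"uplift={uplift:.3f}, z={z:.2f}, p={p_value:.4f}")
```

A small p-value suggests the difference between cohorts is unlikely to be noise, but the threshold and the metric to test are decisions a team would make before launching the experiment.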

To keep these cohorts comparable, users are enrolled in either cohort only when they are exposed to the same product flows or screens. We isolate these cohorts to increase our confidence that a positive effect on user behavior and metrics can be attributed to our experiment. This way we also ensure that concurrent product changes do not skew the experiment results.
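A widely used technique for splitting users into stable cohorts is to hash the user ID together with an experiment key. The sketch below combines that with enrollment at the moment of exposure. It is an assumption about how such a system could look, not a description of Atlassian's experimentation platform; `assign_cohort`, `Experiment`, `on_exposure`, and the experiment key are hypothetical names.

```python
import hashlib


def assign_cohort(user_id, experiment_key, variation_share=0.5):
    """Deterministically map a user to 'control' or 'variation'."""
    digest = hashlib.sha256(f"{experiment_key}:{user_id}".encode("utf-8")).digest()
    # Use the first 8 bytes as a number in [0, 1).
    bucket = int.from_bytes(digest[:8], "big") / 2**64
    return "variation" if bucket < variation_share else "control"


class Experiment:
    def __init__(self, key, variation_share=0.5):
        self.key = key
        self.variation_share = variation_share
        self.enrolled = {}  # user_id -> cohort, filled only on exposure

    def on_exposure(self, user_id):
        """Enroll the user when they reach the flow or screen under test."""
        if user_id not in self.enrolled:
            self.enrolled[user_id] = assign_cohort(
                user_id, self.key, self.variation_share
            )
        return self.enrolled[user_id]


# Hypothetical usage: only users who actually open the screen are enrolled.
experiment = Experiment("example-experiment")
cohort = experiment.on_exposure("user-123")
print(cohort, len(experiment.enrolled))
```

Including the experiment key in the hash means the same user can land in different cohorts across different experiments, which helps keep experiments independent of one another.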

Experiments can be very rewarding if they succeed. However, not every experiment is going to be successful in improving the customer experience. Growth teams often have to go back to the drawing board to reshape experiments. Sometimes they completely pivot their approach. This means that we can’t become emotionally attached to the code we write. The Progress over Perfection principle applies here. A lot of the code we write may end up being removed from production. But there is a silver lining – the outcome of this process is learning, and that’s what we aim for.

When an experiment is successful, it is extremely satisfying to see the result. We can see customers engaging with the brand new or reshaped feature. There is a clear uplift in the relevant metrics. Customers realize the value of our product thanks to our work. Growth engineers’ work lives or dies by the customer impact it has. We’re never in doubt whether we are making an impact or not.

Growth experimentation projects tend to be short- to medium-term in length; it is rare for a project to span longer than three months. The scope of experimental features is typically smaller than that of product or platform team projects. Experiments present fewer options for deep technical engineering work. Many experiments are as lightweight as possible. Teams take pride in minimizing the technical changes and scope required. Yet teams never compromise on quality. Growth teams aim to get to the earliest possible signal of a new feature’s performance. This helps in making a decision as early as possible on whether to invest more or pivot and invest time in a different area.

Once in a while, experiments may unlock a huge opportunity, such as in the example below. In these cases, Growth teams can behave more like Product teams. Teams start taking on larger projects involving significant engineering efforts and different approaches.

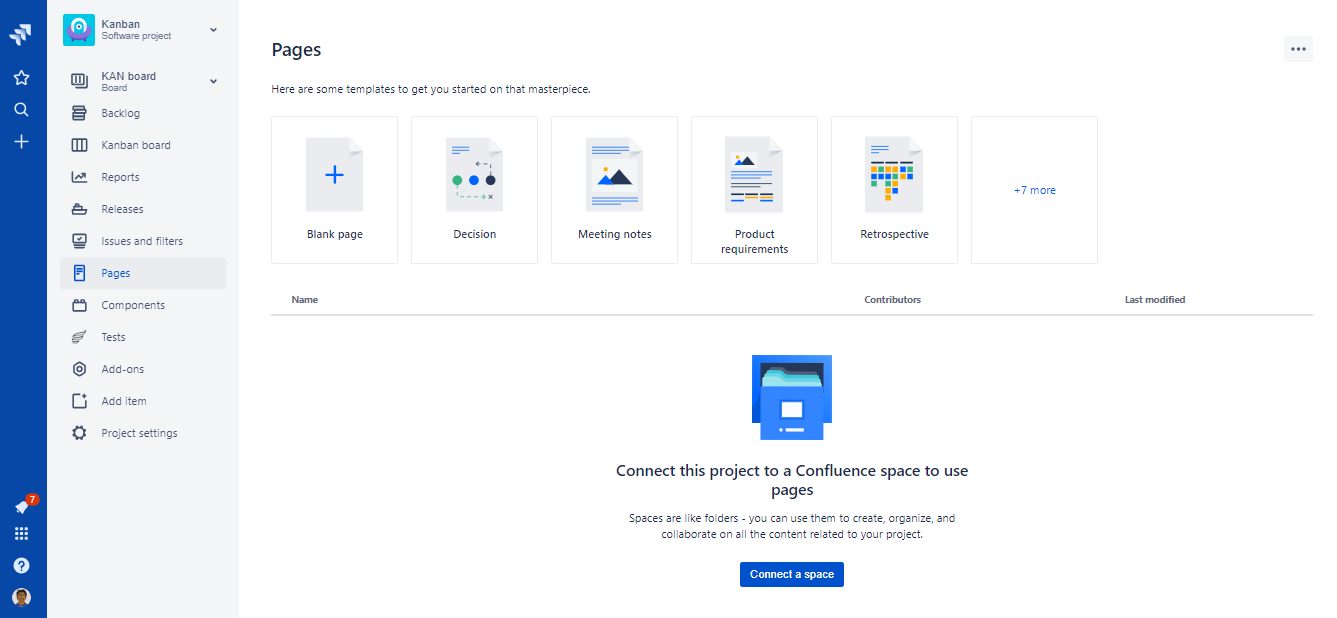

Example of a Growth Engineering experiment: Jira Project Pages

Jira Software Cloud Projects have a feature called Pages. This feature allows customers to link a Confluence Cloud Space to their Jira Project. With Pages, users can see a tree of all the Confluence space content in Jira. That way, users don’t have to switch between the products to see both.

Read more here: https://confluence.atlassian.com/cloudkb/the-new-pages-feature-in-jira-software-project-958770525.html

The first version of Pages was an experimental feature built by the Growth team. What did the team do?

The team started with an analysis of current Confluence and Jira usage patterns and noticed a few opportunities to improve. Before investing too much in the feature, the team proposed a few A/B experiments to determine customer interest in a Pages-like feature. This involved close collaboration with both product engineering teams on the integration.

Having received positive results from the experiments, the Growth team continued to invest in building out Pages. Continuing to work in partnership with both Jira and Confluence, the project went ahead. The final A/B test involved the team enrolling every Jira customer in the experiment. 50 percent of users were kept as a control, with the other 50 percent exposed to a final version of the Pages feature. After deeming the experiment successful, the team allowed 100 percent of Jira customers to discover Confluence via Pages. You can see below an example chart from the experiment analysis showing the positive impact on Confluence Week 1 WAU (Weekly Active Users).

Joint usage of products is a common focus area for the Atlassian Growth team. Connecting products and surfacing integrations makes life easier for our users!

Collaboration

Growth often needs to refresh our understanding of funnels across the business. We seek to work on the greatest opportunities for improvement. Doing so means partnering closely with many other teams within Atlassian, as seen in the above example. Collaboration is part of the lifeblood of Growth.

As part of this collaboration, it is very common to work on code bases owned and maintained by other teams. As Growth engineers, we expose ourselves to various tech stacks, architectures, and development styles. We are always learning, and variety is never lacking. However, this does mean that we need to take additional precautions when working on projects and adjust to changes often.

The big three collaboration pillars that we’ve identified in the Growth team are:

- Clear accountability: Agree who needs to do what by when. Leave no room for ambiguity.

- Frequent communication: Stay connected with your teammates and dependencies, especially when remote.

- Find ways around roadblocks: Don’t wait for things to come to you. Problem solve as a team with urgency.

So, what do Growth engineers work on?

Every day, we seize the largest opportunities in Atlassian products. Growth teams accelerate the broader mission of Atlassian to unleash the potential of every team.

We look to prove the customer value of any new optimization ideas, as early as possible. We partner with Product engineering teams to experiment, deliver, and share insights. We focus on customer impact, and we intimately know the difference that we make.

Growth teams work on improving the customer experience in products. To do so, we acquire a deep understanding of product user behavior. We understand Atlassian customer pain points well and engage in both quantitative and qualitative research.

We favor progress over perfection. Growth teams deliver compound improvements over time. We validate improvements through rigorous experimentation and A/B testing. We work on small experiments as well as larger projects.

We partner and collaborate with Product engineering teams. We communicate with other Atlassian teams a lot. We work on other teams’ code bases while closely partnering with product managers, designers, and other engineers.