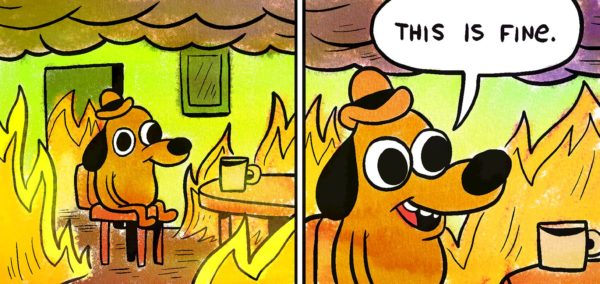

One of my favourite memes for the last few years is This is Fine. It’s a picture of a dog sitting in a burning building with a cup of coffee. “This is fine,” the dog announces. “I’m okay with what’s happening. It’ll all turn out okay.” Then the dog takes a sip of coffee and melts into the fire.

Working in ethics and technology, I hear a lot of “This is fine.” The tech sector has built (and is building) processes and systems that exclude vulnerable users, that deploy “nudges” steering users into privacy concessions they probably shouldn’t make, or that hardwire preconceived notions of right and wrong into technologies that will shape millions of people’s lives.

But many won’t acknowledge they could have ethics problems.

This is partly because, like the dog, they don’t concede that the fire might actually burn them in the end. Lots of people working in tech are willing to admit that someone else has a problem with ethics, but they’re less likely to believe that they themselves have one.

And I get it. Many times, people are building products that seem innocuous, fun, or practical. There’s nothing in there that makes us do a moral double-take.

The problem is, of course, that just because you’re not able to identify a problem doesn’t mean you won’t melt to death in the sixth frame of the comic. And there are issues you need to address in what you’re building, because tech design is a human activity, and there’s no such thing as human behavior without ethics.

Your product probably already has ethical issues

To put it bluntly: if you think you don’t need to consider ethics in your design process because your product doesn’t generate any ethical issues, you’ve missed something. Maybe your product is still fine, but you can’t be sure unless you’ve taken the time to consider your product and stakeholders through an ethical lens.

Look at it this way: If you haven’t made sure there are no bugs or biases in your design, you haven’t been the best designer you could be. Ethics is no different: it’s about making people (and their products) the best they can be.

Take Pokémon Go, for example. It’s an awesome little mobile game that gives users the chance to feel like Pokémon trainers in the real world. And it’s a business success story, recording a profit of $3 billion at the end of 2018. But it’s exactly the kind of innocuous-seeming app most would think doesn’t have any ethical issues.

But it does. It distracted drivers, brought users to dangerous locations in the hopes of catching Pokémon, disrupted public infrastructure, didn’t seek the consent of the sites it included in the game, unintentionally excluded rural neighbourhoods (many populated by racial minorities), and released Pokémon in offensive locations (for instance, a poison gas Pokémon in the Holocaust Museum in Washington DC).

Quite a list, actually.

This is a shame, because all of this meant that Pokémon Go was not the best game it could be. And as designers, that’s the goal – to make something great. But something can’t be great unless it’s good, and that’s why designers need to think about ethics.

Here are a few things you can embed within your design processes to make sure you’re not going to burn to death, ethically speaking, when you finally launch.

1. Start with ethical premortems

When something goes wrong with a product, we know it’s important to do a postmortem to make sure we don’t repeat the same mistakes. Postmortems happen all the time in ethics. A product is launched, a scandal erupts, and ethicists wind up as talking heads on the news discussing what went wrong.

As useful as postmortems are, they can also be ways of washing over negligent practices. When something goes wrong and a spokesperson says, “We’re going to look closely at what happened to make sure it doesn’t happen again,” I want to say, “Why didn’t you do that before you launched?” That’s what an ethical premortem does.

Sit down with your team and talk about what would make this product an ethical failure. Then work backwards to the root causes of that possible failure. How could you mitigate that risk? Can you reduce the risk enough to justify going forward with the project? Are your systems, processes and teams set up in a way that enables ethical issues to be identified and addressed?

Tech ethicist Shannon Vallor provides a list of handy premortem questions:

- How Could This Project Fail for Ethical Reasons?

- What Would be the Most Likely Combined Causes of Our Ethical Failure/Disaster?

- What Blind Spots Would Lead Us Into It?

- Why Would We Fail to Act?

- Why/How Would We Choose the Wrong Action?

- What Systems/Processes/Checks/Failsafes Can We Put in Place to Reduce Failure Risk?

2. Ask the Death Star question

The book Catalyst: A Rogue One Novel tells the story of how the Galactic Empire managed to build the Death Star. The strategy was simple: take many subject matter experts and get them working in silos on small projects. With no team aware of what other teams were doing, only a few managers could make sense of what was actually being built.

Small teams, working in a limited role on a much larger project, with limited connection to the needs, goals, objectives or activities of other teams. Sound familiar? Siloing is a major source of ethical negligence. Teams whose workloads, incentives, and interests are limited to their particular contribution can seldom identify the downstream effects of that contribution, or what might happen when it’s combined with other work.

While it’s unlikely you’re secretly working for a Sith Lord, it’s still worth asking:

- What’s the big picture here? What am I actually helping to build?

- What contribution is my work making and are there ethical risks I might need to know about?

- Are there dual-use risks in this product that I should be designing against?

- If there are risks, are they worth it, given the potential benefits?

3. Get red teaming

Anyone who has worked in security will know that one of the best ways to know if a product is secure is to ask someone else to try to break it. We can use a similar concept for ethics. Once we’ve built something we think is great, ask some people to try to prove that it isn’t.

The goal of red teaming is to create impartiality and objectivity. It’s hard to see the flaws in a system we’ve been close to for a long time – especially if we’re proud of it. That’s why it’s not unheard of for new employees to identify flaws, bugs, or inefficiencies on their first day on the job. By asking a group who weren’t involved in the design to critique it through a specifically ethical lens, we minimise the risk of ethical negligence.

Red teams should ask:

- What are the ethical pressure points here?

- Have you made trade-offs between competing values/ideals? If so, have you made them in the right way?

- What happens if we widen the circle of possible users to include some people you may not have considered?

- Was this project one we should have taken on at all? (If you knew you were building the Death Star, it’s unlikely you could ever make it an ethical product. It’s a WMD.)

- Is your solution the only one? Is it the best one?

4. Decide what your product’s saying

Ever seen a toddler discover a new toy? Their first instinct is to test the limits of what they can do. They’re not asking, “What was the intention of the designer?” They’re testing how the item can satisfy their needs, whatever they may be. So they chew it, throw it, paint with it, push it down a slide… a toddler can’t access the designer’s intention. The only prompts they have are those built into the product itself.

It’s easy to think about our products as though they’ll only be used in the way we want them to be used. In reality, though, technology design and usage is more like a two-way conversation than a set of instructions. Given this, it’s worth asking: if the user had no instructions on how to use this product, what would they infer purely from the design?

For example, we might infer from the hidden-away nature of some privacy settings on social media platforms that we shouldn’t tweak our privacy settings. Social platforms might say otherwise, but their design tells a different story. Imagine what your product would be saying to a user if you let it speak for itself.

This is doubly important, because your design is saying something. All technology is full of affordances – subtle prompts that invite the user to engage with it in some ways rather than others. They’re there whether you intend them to be or not, but if you’re not aware of what your design affords, you can’t know what messages the user might be receiving.

Design teams should ask:

- What could a user infer from the design about how a product can/should be used?

- How do you want people to use this?

- How don’t you want people to use this?

- Do your design choices and affordances reflect these expectations?

- Are you unnecessarily preventing other legitimate uses of the technology?

5. Don’t forget to show your work

One of the (few) things I remember from my high school math classes is this: you get one mark for getting the right answer, but three marks for showing the working that led you there.

It’s also important for learning: if you don’t get the right answer, being able to interrogate your process is crucial (that’s what a postmortem is).

For ethical design, the process of showing your work is about being willing to publicly defend the ethical decisions you’ve made. It’s a practical version of The Sunlight Test – where you test your intentions by asking whether you’d still do what you’re doing if the whole world were watching.

Ask yourself (and your team):

- Are there any limitations to this product?

- What trade-offs have you made (e.g. between privacy and user-customisation)?

- Why did you build this product (what problems are you solving)?

- Does this product risk being misused? If so, what have you done to mitigate those risks?

- Are there any users who will have trouble using this product (for instance, people with disabilities)? If so, why can’t you fix this and why is it worth releasing the product, given it’s not universally accessible?

- How probable are the good and bad effects?

Ethics is an investment

I’m constantly amazed at how many organizations are willing to invest money, time and personnel in culture initiatives, wellbeing days and the like, but haven’t spent a penny on ethics. There’s a general sense that if you’re a good person, then you’ll build ethical stuff, but the evidence overwhelmingly proves that’s not the case. Ethics needs to be something you invest in learning about, building resources and systems around, recruiting for, and incentivizing.

It’s also something that needs to be engaged in for the right reasons. You can’t go into this process because you think it’s going to make you money or recruit the best people, because you’ll abandon it the second you find a more effective way to achieve those goals. A lot of the talk around ethics in technology at the moment has a particular flavour: anti-regulation. There is a hope that if companies are ethical, they can self-regulate.

I don’t see that as the role of ethics at all. Ethics can guide us toward making the best judgements about what’s right and what’s wrong. It can give us precision in our decisions, a language to explain why something is a problem, and a way of determining when something is truly excellent. But people also need justice: something to rely on if they’re the least powerful person in the room. Ethics has something to say here, but so do law and regulation.

If your organisation says they’re taking ethics seriously, ask them how open they are to accepting restraint and accountability. How much are they willing to invest in getting the systems right? Are they willing to sack their best performer if that person isn’t conducting themselves the way they should?