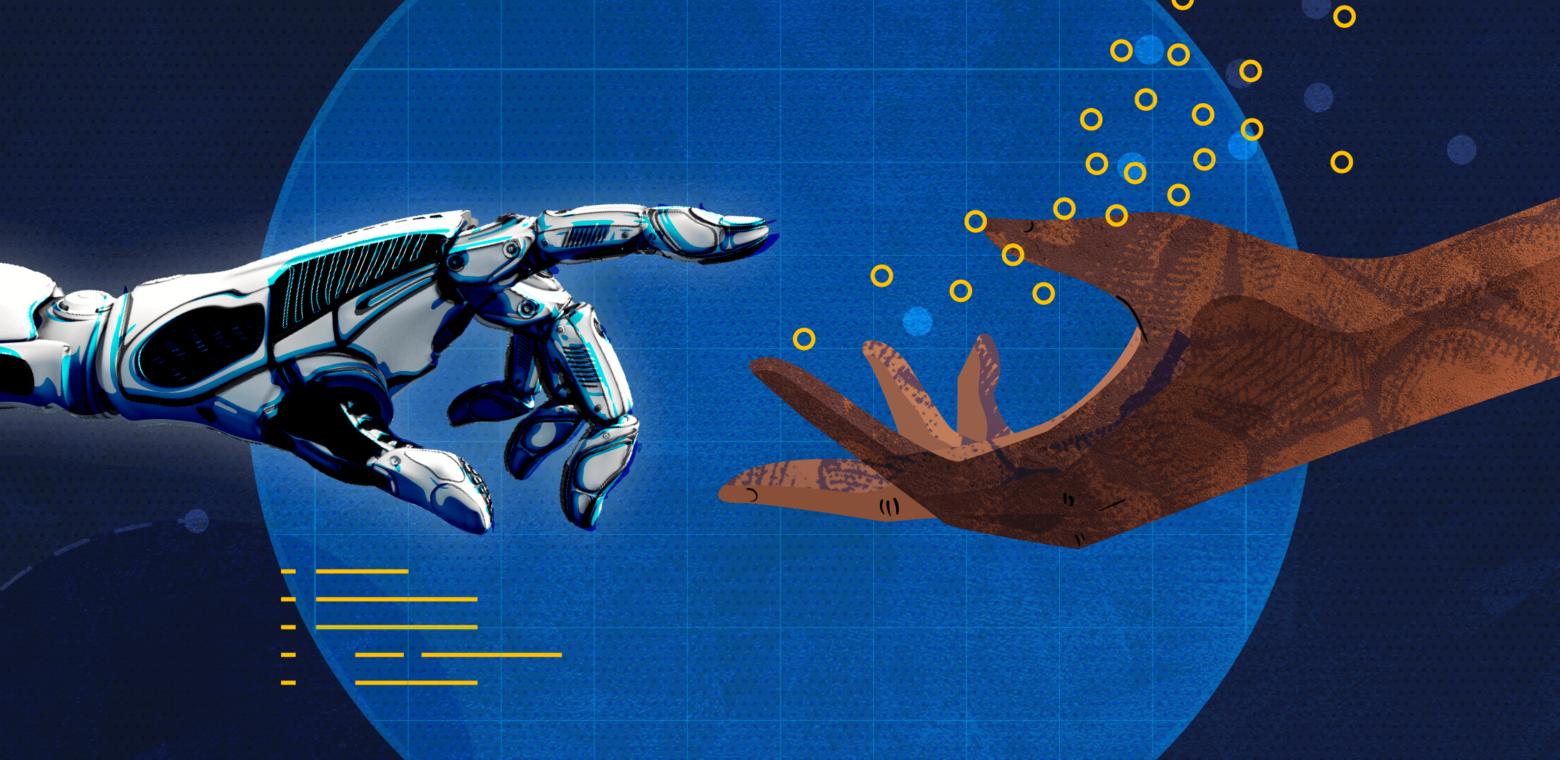

Unless you build or program computers for a living, you could be forgiven for never having wondered what actually happens under the hood. How do computers make decisions? What do their instructions look like? Can they, y’know… think?

The short answer is no. Computers can’t think the way you and I can. At least, not yet. But artificial intelligence (AI) technology is getting closer every day, raising fundamental questions about ethics, design, and what it means to be alive. In his book, How to Speak Machine, designer and technologist John Maeda acknowledges the concerns many of us have around AI: not only do robots look and sound increasingly lifelike, but they respond to input so quickly and have such stamina that we humans start to feel threatened. Nobody likes the idea of being outpaced (or replaced) by their own creation.

But should we be worried? Maeda, an AI enthusiast with the hands-on experience to back it up, thinks not. “You’ll never become completely expendable if you’re always outgrowing your own capabilities,” he says. “This is what makes [humans] difficult to copy.”

With that in mind, I asked him five questions that explore the difference between how humans and computers process information, and what it means for the future of AI.

This interview has been edited for clarity.

1: Are the robots really coming to take our jobs?

They already have. Think about dishwashers. That’s a machine that took away a lot of human work, not just in the home but in restaurants, cafeterias, and other industrial kitchen facilities. Think about bank tellers. We used to have to talk to a human to take money out of the bank; now we don’t. Think about customer support lines that use robotic telephony. So, yeah. I think robots are always taking over our jobs.

There was an article in The Guardian that showed how a writing algorithm can produce something that looks like it was written by one of us. And it’s disturbing. But it’s less disturbing when you think about it in the context of something unoriginal, like a press release for a sofa. There are only so many ways you can write that – “Well, it’s got four legs…” or whatever. There are old problems that workers have been solving for years, which means there’s a data set around it. That kind of work can be easily replaced because machine learning systems love data. Once you have data, you can create a repeatable pattern.

For things that don’t have repeatable patterns, we’re always gonna have a job to do. When it comes to something original that no one else has thought about or a personal perspective, AI is bad at that. At least right now.

2: Can AI really spoof humans in a convincing way?

You know how pop radio stations do that thing on the morning talk shows where they’ll call up some random person and pretend they’re a customer service rep or something just to freak them out? That’s a human fooling another human. Or think of when we have AI create an image of a face that really looks like a specific person’s face. So, humans can pretend they’re someone else, and machines can do it too. The problem is that machines can do it at scale.

The reason I wrote How to Speak Machine was to show that the scale isn’t just two or three repeatable things. Machines can do things on a scale of millions and millions and millions because they never get tired. So that spoof over the radio of one person calling someone’s house and pretending to cancel the hotel for their wedding… that’s funny. But if every person in the entire world was being impersonated on the phone by a robot, that’s concerning.

What’s eerie is that David Bowie predicted this in a 1999 interview with the BBC, although he was referring to the then-nascent Internet. He said, “Well, it’s going to change the relationship between artists and the audience, and the record label.” He lays out how it’s going to be both amazing and terrifying. And the BBC analyst says, “Oh, it’s just a tool, isn’t it?” and then Bowie goes, “No, no, no. It’s much more than that. It’s an alien life form.”

You could think of AI as another alien life form. And if you understand it, it can do amazing things for you – to a point. It can also do harmful things and, obviously, there are consequences.

3: Speaking of harmful consequences… could a self-driving car really turn homicidal?

The weird thing is that the AI of the past is not the AI of the present. It all changed in 2012. Whereas AI used to be about writing rules, AI now is about feeding a system data and getting it to recognize patterns. The problem with the new AI is that there isn’t a program to read. All you can do is analyze the data it’s been programmed with. Thus, if you create a self-driving car using all kinds of data for different driving behaviors and for some reason you filled it with data from homicidal drivers, it would inherit that behavior.
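To make the contrast concrete, here is a minimal Python sketch of the two styles Maeda describes. It is a toy, not how any real self-driving system works: the function names, the 10-metre threshold, and the driver records are all invented for illustration, and the "learning" is a simple nearest-neighbour lookup that copies whichever recorded driver was in the most similar situation.

```python
# Hypothetical toy example: every name and number here is an assumption
# made for illustration, not something taken from the interview.

def rule_based_action(distance_m: float) -> str:
    """Rule-style AI: a human-written rule that anyone can read and audit."""
    return "brake" if distance_m < 10.0 else "cruise"


def learned_action(distance_m: float, examples: list[tuple[float, str]]) -> str:
    """Data-style AI (1-nearest-neighbour): copy the closest recorded driver."""
    nearest = min(examples, key=lambda ex: abs(ex[0] - distance_m))
    return nearest[1]


# Each record: (distance to a pedestrian in metres, what the driver did).
careful_drivers = [(2.0, "brake"), (5.0, "brake"), (8.0, "brake"), (30.0, "cruise")]
reckless_drivers = [(2.0, "cruise"), (5.0, "cruise"), (8.0, "brake"), (30.0, "cruise")]

print(rule_based_action(4.0))                 # brake
print(learned_action(4.0, careful_drivers))   # brake
print(learned_action(4.0, reckless_drivers))  # cruise
```

The `learned_action` function is identical in both calls. Only the data changed, and the behavior changed with it, which is why there is no rule to read when something goes wrong.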

I’ve been thinking a lot about empathy, and how important it is with regard to AI. But empathy needs to be paired with accountability. I mean, I can feel bad for someone, but if I don’t hold myself accountable for addressing the problem, does it really matter? So the question is: if we create artificial sentience, can we program in accountability? A sentient-like system is basically an amalgam of known behaviors we’ve fed into it. So if the system does something wrong, it’s actually our fault because we programmed it with that behavior.

4: Is it really possible to build AI without baking in our own biases?

Whether you write algorithms or just feed in data, bias comes along. A data set includes the bias of past behaviors; a program includes the biases of the engineer who wrote it. When we write programs, we include statements like “if X, then do Y”. Written like that, it’s easier to see any bias you’re programming into the system.

We can see it in the law, for example. Law is the closest thing we have to a programming language for society, and as we know, laws have tons of bias built in. All human-made things have biases in them. Machines may look like they’re evoking pure logic, but the fact is that we make them. That’s why we have to be careful. As machines evolve and rely more on learning from a data set (vs. from hard-coded programming), we have to make sure we’re accounting for and proactively correcting for bias when giving them data.
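As a rough illustration of the “if X, then do Y” point, the Python sketch below puts a biased rule next to a biased data set. Everything in it is hypothetical: the loan scenario, the `ZONE-A` and `ZONE-B` labels, the income threshold, and the records are made up. The idea is only that a hard-coded bias can be spotted by reading the code, while a bias in data has to be measured.

```python
from collections import Counter

# Hypothetical example: the scenario, labels, and numbers are assumptions.

def approve_loan(income: int, postcode: str) -> bool:
    # The bias is readable right here: one postcode is rejected outright.
    if postcode == "ZONE-B":
        return False
    return income >= 30_000


# Made-up past decisions: (income, postcode, approved).
history = [
    (45_000, "ZONE-A", True),
    (32_000, "ZONE-A", True),
    (45_000, "ZONE-B", False),
    (60_000, "ZONE-B", False),
]


def approval_rate_by_postcode(records):
    """Measure how often each postcode was approved in past decisions."""
    approved = Counter()
    total = Counter()
    for _income, postcode, ok in records:
        total[postcode] += 1
        approved[postcode] += int(ok)
    return {pc: approved[pc] / total[pc] for pc in total}


rates = approval_rate_by_postcode(history)
print(rates)  # {'ZONE-A': 1.0, 'ZONE-B': 0.0}
```

A system trained on `history` would have no `if postcode == ...` line to point at, so a check like `approval_rate_by_postcode` is one simple way to look for the skew before handing the data over.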

5: Can thinking like a machine really help humans solve today’s gnarliest problems?

Computational thinking has four flavors, one of which is decomposition. As I talk about in How to Speak Machine, computers have the capacity to loop through a function or task forever without getting tired. Plus, they log detailed results as they go along.

So for humans, the takeaway here is to decompose a gnarly problem into smaller components. Are there partial solutions you can try over and over, iterating a little bit each time until you land on the right one? What if you simulate isolating and changing one variable at a time? Seeing the ripple effects helps clarify how all the pieces relate to each other. Mimicking computational thinking can go a long way toward modeling a living system. It opens up an entire universe of possibilities.
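The “change one variable at a time” idea above can be sketched in a few lines of Python. This is a toy model under stated assumptions: the delivery scenario, the `delivery_time` formula (driving time plus five minutes per stop), and the baseline numbers are invented. The loop re-runs the model once per variable, changing only that variable, and logs how far the result moves from the baseline.

```python
# Hypothetical toy model: the scenario and all numbers are assumptions.

def delivery_time(distance_km: float, speed_kmh: float, stops: int) -> float:
    """Minutes for a delivery run: driving time plus 5 minutes per stop."""
    return distance_km / speed_kmh * 60 + stops * 5


def one_at_a_time(model, baseline: dict, changes: dict) -> dict:
    """Change one input at a time and log the effect on the model's output."""
    base = model(**baseline)
    log = {}
    for name, new_value in changes.items():
        trial = dict(baseline, **{name: new_value})  # copy with one change
        log[name] = model(**trial) - base
    return log


baseline = {"distance_km": 30.0, "speed_kmh": 60.0, "stops": 4}
changes = {"distance_km": 33.0, "speed_kmh": 66.0, "stops": 5}

effects = one_at_a_time(delivery_time, baseline, changes)
for name, delta in effects.items():
    print(f"{name}: {delta:+.1f} minutes")
```

With these made-up numbers, one extra stop costs more time than a 10% longer route, and a 10% speed increase saves less than either costs. That is the kind of ripple effect that is hard to see until the pieces are isolated.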